Chapter 4. Feed-Forward Networks for Natural Language Processing

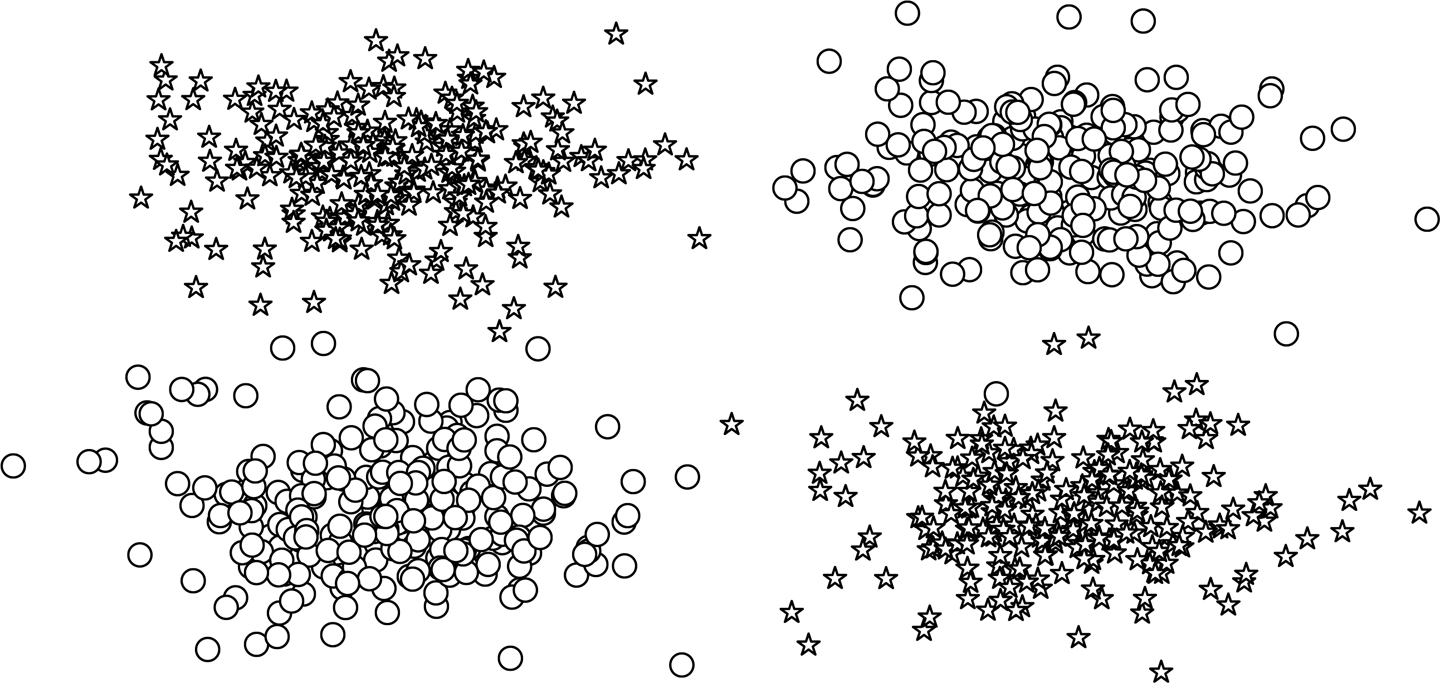

In Chapter 3, we covered the foundations of neural networks by looking at the perceptron, the simplest neural network that can exist. One of the historic downfalls of the perceptron was that it cannot learn even modestly nontrivial patterns present in data. For example, take a look at the plotted data points in Figure 4-1. This is equivalent to an either-or (XOR) situation in which the decision boundary cannot be a single straight line (in other words, the data is not linearly separable). In this case, the perceptron fails.

Figure 4-1. Two classes in the XOR dataset plotted as circles and stars. Notice how no single line can separate the two classes.
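The failure on XOR can be checked directly. The following is a minimal sketch in plain Python, not code from this book: the helper names (`predict`, `train_perceptron`, `accuracy`) and the integer learning rate are assumptions made for illustration. It trains a single perceptron with the classic perceptron learning rule on AND, which is linearly separable, and on XOR, which is not.

```python
# Hypothetical helper names; a sketch of the classic perceptron learning rule.
# Each dataset lists ((x1, x2), label) pairs for the four binary input points.
AND_DATA = [((0, 0), 0), ((0, 1), 0), ((1, 0), 0), ((1, 1), 1)]
XOR_DATA = [((0, 0), 0), ((0, 1), 1), ((1, 0), 1), ((1, 1), 0)]


def predict(weights, bias, x):
    """A single perceptron: weighted sum followed by a step activation."""
    total = weights[0] * x[0] + weights[1] * x[1] + bias
    return 1 if total > 0 else 0


def train_perceptron(data, epochs=100, lr=1):
    """Run the perceptron update rule; stop early once an epoch has no errors."""
    weights, bias = [0, 0], 0
    for _ in range(epochs):
        mistakes = 0
        for x, y in data:
            error = y - predict(weights, bias, x)
            if error != 0:
                mistakes += 1
                weights[0] += lr * error * x[0]
                weights[1] += lr * error * x[1]
                bias += lr * error
        if mistakes == 0:
            break
    return weights, bias


def accuracy(data, weights, bias):
    """Fraction of points in `data` that the perceptron classifies correctly."""
    correct = sum(predict(weights, bias, x) == y for x, y in data)
    return correct / len(data)


and_weights, and_bias = train_perceptron(AND_DATA)
xor_weights, xor_bias = train_perceptron(XOR_DATA)
print("AND accuracy:", accuracy(AND_DATA, and_weights, and_bias))
print("XOR accuracy:", accuracy(XOR_DATA, xor_weights, xor_bias))
```

On AND the updates settle on a separating line and every point is classified correctly. On XOR the loop runs out of epochs without ever finishing an error-free pass, because no single line puts both classes on the correct sides, so the accuracy stays below 1.0 however long training continues.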

In this chapter, we explore a family of neural network models traditionally called feed-forward networks. We focus on two kinds of feed-forward neural networks: the multilayer perceptron (MLP) and the convolutional neural network (CNN). The multilayer perceptron structurally extends the simpler perceptron we studied in Chapter 3 by grouping many perceptrons in a single layer and stacking multiple layers together. We cover multilayer perceptrons in just a moment and show their use in multiclass classification in “Example: Surname Classification with an MLP”.
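To make "grouping many perceptrons in a single layer and stacking multiple layers together" concrete, here is a small sketch in plain Python. It is an illustration under stated assumptions rather than the implementation used later in the chapter: the function names (`step`, `layer`, `tiny_mlp`) are hypothetical, and the weights are set by hand instead of being learned. The hidden layer groups two perceptrons (one behaving like OR, one like AND), and a second layer combines their outputs to produce XOR.

```python
def step(value):
    """Step activation, as used by the perceptron."""
    return 1 if value > 0 else 0


def layer(inputs, weight_rows, biases):
    """One layer: several perceptrons that all read the same inputs."""
    outputs = []
    for weights, bias in zip(weight_rows, biases):
        total = sum(w * x for w, x in zip(weights, inputs)) + bias
        outputs.append(step(total))
    return outputs


# Hand-picked weights (an assumption for illustration; normally they are learned).
HIDDEN_WEIGHTS = [[2, 2], [2, 2]]   # two perceptrons grouped in one hidden layer
HIDDEN_BIASES = [-1, -3]            # first unit acts like OR, second like AND
OUTPUT_WEIGHTS = [[2, -2]]          # fires when OR is on and AND is off
OUTPUT_BIASES = [-1]


def tiny_mlp(x):
    """Two stacked layers: input -> hidden layer -> output layer."""
    hidden = layer(x, HIDDEN_WEIGHTS, HIDDEN_BIASES)
    return layer(hidden, OUTPUT_WEIGHTS, OUTPUT_BIASES)[0]


for point in [(0, 0), (0, 1), (1, 0), (1, 1)]:
    print(point, "->", tiny_mlp(point))
```

The hidden layer re-represents each input point as a pair of intermediate features, and in that new representation a single perceptron is enough to separate the two classes. A trained MLP works on the same principle, except that the weights are found by optimization and the step function is replaced by a differentiable nonlinearity.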

The second kind of feed-forward neural networks studied in this chapter, the convolutional neural network, is deeply inspired ...
