Chapter 9. Twitter Cookbook

This cookbook is a collection of recipes for mining Twitter data. Each recipe is designed to be as simple and atomic as possible in solving a particular problem so that multiple recipes can be composed into more complex recipes with minimal effort. Think of each recipe as being a building block that, while useful in its own right, is even more useful in concert with other building blocks that collectively constitute more complex units of analysis. Unlike previous chapters, which contain a lot more prose than code, this cookbook provides a relatively light discussion and lets the code do more of the talking. The thought is that you’ll likely be manipulating and composing the code in various ways to achieve particular objectives.

While most recipes involve little more than issuing a parameterized API call and post-processing the response into a convenient format, some recipes are even simpler (involving little more than a couple of lines of code), and others are considerably more complex. This cookbook is designed to help you by presenting some common problems and their solutions. In some cases, it may not be common knowledge that the data you desire is really just a couple of lines of code away. The value proposition is in giving you code that you can trivially adapt to suit your own purposes.

One fundamental software dependency you’ll need for all of the recipes in this chapter is the twitter package, which you can install with pip per the rather predictable pip install twitter command from a terminal. Other software dependencies will be noted as they are introduced in individual recipes. If you’re taking advantage of the book’s virtual machine (which you are highly encouraged to do), the twitter package and all other dependencies will be preinstalled for you.

As you know from Chapter 1, Twitter’s v1.1 API requires all requests to be authenticated, so each recipe assumes that you take advantage of Accessing Twitter’s API for Development Purposes or Doing the OAuth Dance to Access Twitter’s API for Production Purposes to first gain an authenticated API connector to use in each of the other recipes.

Note

Always get the latest bug-fixed source code for this chapter (and every other chapter) online at http://bit.ly/MiningTheSocialWeb2E. Be sure to also take advantage of this book’s virtual machine experience, as described in Appendix A, to maximize your enjoyment of the sample code.

Accessing Twitter’s API for Development Purposes

Problem

You want to mine your own account data or otherwise gain quick and easy API access for development purposes.

Solution

Use the twitter package and the OAuth 1.0a credentials provided in the application’s settings to gain API access to your own account without any HTTP redirects.

Discussion

Twitter implements OAuth 1.0a, an authorization mechanism that’s expressly designed so that users can grant third parties access to their data without having to do the unthinkable—doling out their usernames and passwords. While you can certainly take advantage of Twitter’s OAuth implementation for production situations in which you’ll need users to authorize your application to access their accounts, you can also use the credentials in your application’s settings to gain instant access for development purposes or to mine the data in your own account.

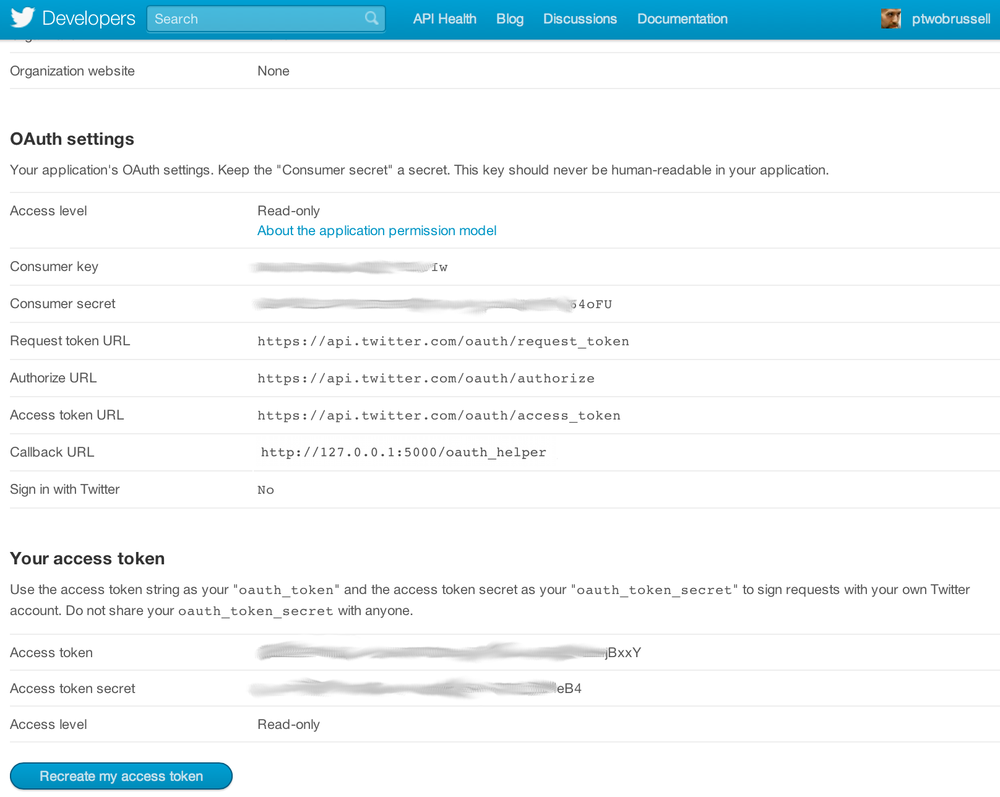

Register an application under your Twitter account at http://dev.twitter.com/apps and take note of the consumer key, consumer secret, access token, and access token secret, which constitute the four credentials that any OAuth 1.0a–enabled application needs to ultimately gain account access. Figure 9-1 provides a screen capture of a Twitter application’s settings. With these credentials in hand, you can use any OAuth 1.0a library to access Twitter’s RESTful API, but we’ll opt to use the twitter package, which provides a minimalist and Pythonic wrapper around Twitter’s RESTful API. When registering your application, you don’t need to specify the callback URL, since we are effectively bypassing the entire OAuth flow and simply using the credentials to immediately access the API. Example 9-1 demonstrates how to use these credentials to instantiate a connector to the API.

```python
import twitter

def oauth_login():
    # XXX: Go to http://twitter.com/apps/new to create an app and get values
    # for these credentials that you'll need to provide in place of these
    # empty string values that are defined as placeholders.
    # See https://dev.twitter.com/docs/auth/oauth for more information
    # on Twitter's OAuth implementation.

    CONSUMER_KEY = ''
    CONSUMER_SECRET = ''
    OAUTH_TOKEN = ''
    OAUTH_TOKEN_SECRET = ''

    auth = twitter.oauth.OAuth(OAUTH_TOKEN, OAUTH_TOKEN_SECRET,
                               CONSUMER_KEY, CONSUMER_SECRET)

    twitter_api = twitter.Twitter(auth=auth)
    return twitter_api

# Sample usage
twitter_api = oauth_login()

# Nothing to see by displaying twitter_api except that it's now a
# defined variable
print(twitter_api)
```
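To see why the four credentials travel together, here is a minimal sketch of how an OAuth 1.0a client signs a request with the HMAC-SHA1 method described in RFC 5849. It is a simplified illustration, not the twitter package’s own code: `percent_encode` and `oauth1_signature` are hypothetical helper names, and every credential, nonce, and timestamp below is a placeholder. Notice that the consumer key and access token are sent as ordinary `oauth_*` parameters, while the two secrets are used only to build the signing key. A real client would also assemble these values into an Authorization header, which this sketch omits.

```python
import base64
import hashlib
import hmac
from urllib.parse import quote


def percent_encode(value):
    # RFC 5849 (section 3.6) percent-encoding: only unreserved characters
    # (letters, digits, '-', '.', '_', '~') are left unencoded.
    return quote(str(value), safe='~')


def oauth1_signature(method, url, params, consumer_secret, token_secret=''):
    """Hypothetical helper: base64 HMAC-SHA1 signature for an OAuth 1.0a request.

    `params` holds the request parameters plus the oauth_* protocol
    parameters (consumer key, token, nonce, timestamp, and so on).
    """
    # 1. Normalize parameters: encode each key and value, then sort them.
    pairs = sorted((percent_encode(k), percent_encode(v))
                   for k, v in params.items())
    param_string = '&'.join('%s=%s' % pair for pair in pairs)

    # 2. Signature base string: METHOD & encoded URL & encoded parameters.
    base_string = '&'.join([method.upper(),
                            percent_encode(url),
                            percent_encode(param_string)])

    # 3. The signing key joins the two secrets, which never leave the client.
    key = '%s&%s' % (percent_encode(consumer_secret),
                     percent_encode(token_secret))

    digest = hmac.new(key.encode('utf-8'), base_string.encode('utf-8'),
                      hashlib.sha1).digest()
    return base64.b64encode(digest).decode('ascii')


# Placeholder values for illustration only
params = {
    'q': 'CrossFit',
    'oauth_consumer_key': 'CONSUMER_KEY',
    'oauth_token': 'OAUTH_TOKEN',
    'oauth_nonce': 'abc123',
    'oauth_timestamp': '1370000000',
    'oauth_signature_method': 'HMAC-SHA1',
    'oauth_version': '1.0',
}
signature = oauth1_signature('GET',
                             'https://api.twitter.com/1.1/search/tweets.json',
                             params, 'CONSUMER_SECRET', 'OAUTH_TOKEN_SECRET')
print(signature)
```

Changing either secret changes the signature, which is how the server can verify the request without the secrets ever being transmitted.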

Warning

Keep in mind that the credentials used to connect are effectively the same as the username and password combination, so guard them carefully and specify the minimal level of access required in your application’s settings. Read-only access is sufficient for mining your own account data.

While convenient for accessing your own data from your own account, this shortcut provides no benefit if your goal is to write a client program for accessing someone else’s data. You’ll need to perform the full OAuth dance, as demonstrated in Example 9-2, for that situation.

Doing the OAuth Dance to Access Twitter’s API for Production Purposes

Problem

You want to use OAuth so that your application can access another user’s account data.

Solution

Implement the “OAuth dance” with the twitter package.

Discussion

The twitter package provides a built-in implementation of the so-called OAuth dance that works for a console application. It does so by implementing an out-of-band (oob) OAuth flow, in which an application that does not run in a browser, such as a Python program, can securely obtain the four credentials it needs to access the API. This lets you easily request access to a particular user’s account data as a standard “out of the box” capability. However, if you’d like to write a web application that accesses another user’s account data, you may need to lightly adapt its implementation.

Although there may not be many practical reasons to actually implement an OAuth dance from within IPython Notebook (unless perhaps you were running a hosted IPython Notebook service that was used by other people), this recipe uses Flask as an embedded web server so that it can be demonstrated with the same toolchain as the rest of the book. It could easily be adapted to work with an arbitrary web application framework of your choice, since the concepts are the same.

Figure 9-1 provides a screen capture of a Twitter application’s settings. In an OAuth 1.0a flow, the consumer key and consumer secret values that were introduced as part of Accessing Twitter’s API for Development Purposes uniquely identify your application. You provide these values to Twitter when requesting access to a user’s data so that Twitter can then prompt the user with information about the nature of your request. Assuming the user approves your application, Twitter redirects back to the callback URL that you specify in your application settings and includes an OAuth verifier that is then exchanged for an access token and access token secret, which are used in concert with the consumer key and consumer secret to ultimately enable your application to access the account data. (For oob OAuth flows, you don’t need to include a callback URL; Twitter provides the user with a PIN code as an OAuth verifier that must be copied/pasted back into the application as a manual intervention.) See Appendix B for additional details on an OAuth 1.0a flow.
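The flow just described boils down to a few exchanges of form-encoded token responses. The twitter package handles these for you (Example 9-2 uses its parse_oauth_tokens helper), but a stdlib-only sketch makes the steps visible. `parse_token_response` and `build_authorize_url` are hypothetical names, the response bodies are made up, and the authorize URL is the one used in Example 9-2.

```python
from urllib.parse import parse_qs, urlencode

# Authorization endpoint as used in Example 9-2
AUTHORIZE_URL = 'http://api.twitter.com/oauth/authorize'


def parse_token_response(body):
    # Hypothetical helper: token endpoints reply with a form-encoded body
    # such as "oauth_token=...&oauth_token_secret=...&..."
    fields = parse_qs(body)
    return fields['oauth_token'][0], fields['oauth_token_secret'][0]


def build_authorize_url(request_token):
    # Hypothetical helper: the URL the user visits to approve (or deny) access
    return AUTHORIZE_URL + '?' + urlencode({'oauth_token': request_token})


# Step 1: a made-up request-token response
request_token, request_secret = parse_token_response(
    'oauth_token=req123&oauth_token_secret=reqsecret&oauth_callback_confirmed=true')

# Step 2: send the user to the authorization page
print(build_authorize_url(request_token))

# Step 3: after approval, the verifier (or PIN) is exchanged for an access
# token; this made-up response has the same form-encoded shape
access_token, access_secret = parse_token_response(
    'oauth_token=acc456&oauth_token_secret=accsecret&screen_name=someone')
```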

Example 9-2 illustrates how to use the consumer key and consumer secret to do the OAuth dance with the twitter package and gain access to a user’s data. The access token and access token secret are written to disk, which streamlines future authorizations. According to Twitter’s Development FAQ, Twitter does not currently expire access tokens, which means that you can reliably store them and use them on behalf of the user indefinitely, as long as you comply with the applicable terms of service.

```python
from threading import Timer

from flask import Flask, request
from IPython.display import display
from IPython.display import Javascript as JS

import twitter
from twitter.oauth_dance import parse_oauth_tokens
from twitter.oauth import read_token_file, write_token_file

# Note: This code is exactly the flow presented in the _AppendixB notebook

OAUTH_FILE = "resources/ch09-twittercookbook/twitter_oauth"

# XXX: Go to http://twitter.com/apps/new to create an app and get values
# for these credentials that you'll need to provide in place of these
# empty string values that are defined as placeholders.
# See https://dev.twitter.com/docs/auth/oauth for more information
# on Twitter's OAuth implementation, and ensure that *oauth_callback*
# is defined in your application settings as shown next if you are
# using Flask in this IPython Notebook.

# Define a few variables that will bleed into the lexical scope of a couple of
# functions that follow
CONSUMER_KEY = ''
CONSUMER_SECRET = ''
oauth_callback = 'http://127.0.0.1:5000/oauth_helper'

# Set up a callback handler for when Twitter redirects back to us after the user
# authorizes the app

webserver = Flask("TwitterOAuth")

@webserver.route("/oauth_helper")
def oauth_helper():

    oauth_verifier = request.args.get('oauth_verifier')

    # Pick back up credentials from ipynb_oauth_dance
    oauth_token, oauth_token_secret = read_token_file(OAUTH_FILE)

    _twitter = twitter.Twitter(
        auth=twitter.OAuth(
            oauth_token, oauth_token_secret, CONSUMER_KEY, CONSUMER_SECRET),
        format='', api_version=None)

    oauth_token, oauth_token_secret = parse_oauth_tokens(
        _twitter.oauth.access_token(oauth_verifier=oauth_verifier))

    # This web server only needs to service one request, so shut it down.
    # (Werkzeug 2.1+ no longer provides this hook, so guard against None.)
    shutdown_after_request = request.environ.get('werkzeug.server.shutdown')
    if shutdown_after_request is not None:
        shutdown_after_request()

    # Write out the final credentials that can be picked up after the following
    # blocking call to webserver.run().
    write_token_file(OAUTH_FILE, oauth_token, oauth_token_secret)
    return "%s %s written to %s" % (oauth_token, oauth_token_secret, OAUTH_FILE)

# To handle Twitter's OAuth 1.0a implementation, we'll just need to implement a
# custom "oauth dance" and will closely follow the pattern defined in
# twitter.oauth_dance.

def ipynb_oauth_dance():

    _twitter = twitter.Twitter(
        auth=twitter.OAuth('', '', CONSUMER_KEY, CONSUMER_SECRET),
        format='', api_version=None)

    oauth_token, oauth_token_secret = parse_oauth_tokens(
        _twitter.oauth.request_token(oauth_callback=oauth_callback))

    # Need to write these interim values out to a file to pick up on the callback
    # from Twitter that is handled by the web server in /oauth_helper
    write_token_file(OAUTH_FILE, oauth_token, oauth_token_secret)

    oauth_url = ('http://api.twitter.com/oauth/authorize?oauth_token=' + oauth_token)

    # Tap the browser's native capabilities to access the web server through a new
    # window to get user authorization
    display(JS("window.open('%s')" % oauth_url))

# After the webserver.run() blocking call, start the OAuth Dance that will
# ultimately cause Twitter to redirect a request back to it. Once that request
# is serviced, the web server will shut down and program flow will resume
# with the OAUTH_FILE containing the necessary credentials.
Timer(1, lambda: ipynb_oauth_dance()).start()

webserver.run(host='0.0.0.0')

# The values that are read from this file are written out at
# the end of /oauth_helper
oauth_token, oauth_token_secret = read_token_file(OAUTH_FILE)

# These four credentials are what is needed to authorize the application
auth = twitter.oauth.OAuth(oauth_token, oauth_token_secret,
                           CONSUMER_KEY, CONSUMER_SECRET)

twitter_api = twitter.Twitter(auth=auth)

print(twitter_api)
```

If you authorize the application with the same Twitter account that you used to register it, you should observe that the access token and access token secret that your application retrieves are the same values as the ones in your application’s settings, and this is no coincidence. Guard these values carefully, as they are effectively the same thing as a username and password combination.

Discovering the Trending Topics

Problem

You want to know what is trending on Twitter for a particular geographic area such as the United States, another country or group of countries, or possibly even the entire world.

Solution

Twitter’s Trends API enables you to get the trending topics for geographic areas that are designated by a Where On Earth (WOE) ID, as defined and maintained by Yahoo!.

Discussion

Places are an essential concept in Twitter’s development platform, so the Trends API scopes trending topics to a geographic area, as shown in Example 9-3. Like Twitter’s other APIs, it returns the trending topics as JSON data, which can be converted to standard Python objects and then manipulated with list comprehensions or similar techniques, so it’s fairly easy to explore the API responses. Try experimenting with a variety of WOE IDs to compare and contrast the trends from various geographic regions: for example, compare the trends in two different countries, or compare the trends in a particular country with the worldwide trends.

Note

You’ll need to complete a short registration with Yahoo! in order to access and look up Where On Earth (WOE) IDs as part of one of their developer products. It’s painless and well worth the couple of minutes that it takes to do.

```python
import json
import twitter

def twitter_trends(twitter_api, woe_id):
    # Prefix ID with the underscore for query string parameterization.
    # Without the underscore, the twitter package appends the ID value
    # to the URL itself as a special-case keyword argument.
    return twitter_api.trends.place(_id=woe_id)

# Sample usage

twitter_api = oauth_login()

# See https://dev.twitter.com/docs/api/1.1/get/trends/place and
# http://developer.yahoo.com/geo/geoplanet/ for details on
# Yahoo! Where On Earth ID

WORLD_WOE_ID = 1
world_trends = twitter_trends(twitter_api, WORLD_WOE_ID)
print(json.dumps(world_trends, indent=1))

US_WOE_ID = 23424977
us_trends = twitter_trends(twitter_api, US_WOE_ID)
print(json.dumps(us_trends, indent=1))
```
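Because the responses are ordinary Python lists and dicts, comparing two regions takes only a few set operations. This minimal sketch assumes the trends/place response is a list whose first element holds a `trends` list of objects with a `name` field; `trend_names` and `compare_trends` are hypothetical helpers, and the sample data is invented. With live data you might call `compare_trends(world_trends, us_trends)`.

```python
def trend_names(trends_response):
    # Assumed shape: [{'trends': [{'name': ...}, ...], ...}]
    return set(trend['name'] for trend in trends_response[0]['trends'])


def compare_trends(trends_a, trends_b):
    # Hypothetical helper: split trend names into shared and region-specific sets
    names_a, names_b = trend_names(trends_a), trend_names(trends_b)
    return {
        'common': names_a & names_b,
        'only_a': names_a - names_b,
        'only_b': names_b - names_a,
    }


# Invented responses, trimmed to the fields this sketch uses
world = [{'trends': [{'name': '#WorldCup'}, {'name': '#Monday'}, {'name': 'Mars'}]}]
us = [{'trends': [{'name': '#Monday'}, {'name': 'NBA Finals'}]}]

comparison = compare_trends(world, us)
print(comparison)
```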

Searching for Tweets

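Problem

You want to search Twitter for tweets using specific keywords and query constraints.

Solution

Use the Search API to perform a custom query.

Discussion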

Example 9-4 illustrates how to use the Search API to perform a custom query against the Twitterverse. Similar to the way that search engines work, Twitter’s Search API returns results in batches, and you can configure the number of results per batch to a maximum value of 100 by using the count keyword parameter. It is possible that more than 100 results (or the maximum value that you specify for count) may be available for any given query, and in the parlance of Twitter’s API, you’ll need to use a cursor to navigate to the next batch of results.

Cursors are a new enhancement to Twitter’s v1.1 API and provide a more robust scheme than the pagination paradigm offered by the v1.0 API, which involved specifying a page number and a results per page constraint. The essence of the cursor paradigm is that it is able to better accommodate the dynamic and real-time nature of the Twitter platform. For example, Twitter’s API cursors are designed to inherently take into account the possibility that updated information may become available in real time while you are navigating a batch of search results. In other words, it could be the case that while you are navigating a batch of query results, relevant information becomes available that you would want to have included in your current results while you are navigating them, rather than needing to dispatch a new query.

Example 9-4 illustrates how to use the Search API and navigate the cursor that’s included in a response to fetch more than one batch of results.

```python
import json
from urllib.parse import parse_qsl

def twitter_search(twitter_api, q, max_results=200, **kw):

    # See https://dev.twitter.com/docs/api/1.1/get/search/tweets and
    # https://dev.twitter.com/docs/using-search for details on advanced
    # search criteria that may be useful for keyword arguments

    # See https://dev.twitter.com/docs/api/1.1/get/search/tweets
    search_results = twitter_api.search.tweets(q=q, count=100, **kw)

    statuses = search_results['statuses']

    # Iterate through batches of results by following the cursor until we
    # reach the desired number of results, keeping in mind that OAuth users
    # can "only" make 180 search queries per 15-minute interval. See
    # https://dev.twitter.com/docs/rate-limiting/1.1/limits
    # for details. A reasonable number of results is ~1000, although
    # that number of results may not exist for all queries.

    # Enforce a reasonable limit
    max_results = min(1000, max_results)

    for _ in range(10):  # 10*100 = 1000
        # Stop as soon as we have enough results
        if len(statuses) >= max_results:
            break

        try:
            next_results = search_results['search_metadata']['next_results']
        except KeyError:  # No more results when next_results doesn't exist
            break

        # Create a dictionary from next_results, which has the following form:
        # ?max_id=313519052523986943&q=NCAA&include_entities=1
        # parse_qsl also decodes percent-encoded values such as %23 ('#').
        kwargs = dict(parse_qsl(next_results[1:]))

        search_results = twitter_api.search.tweets(**kwargs)
        statuses += search_results['statuses']

    return statuses[:max_results]

# Sample usage

twitter_api = oauth_login()

q = "CrossFit"
results = twitter_search(twitter_api, q, max_results=10)

# Show one sample search result by indexing into the list...
print(json.dumps(results[0], indent=1))
```
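The `next_results` value that drives the pagination loop is a URL query string. This sketch, using a made-up `next_results` value for a hashtag query, contrasts a naive split on `&` and `=` with `urllib.parse.parse_qsl`, which percent-decodes each value. The reason to prefer the decoded form rests on an assumption: that the twitter package URL-encodes keyword arguments when it builds the next request, so an already-encoded value such as `%23NCAA` would otherwise be encoded a second time.

```python
from urllib.parse import parse_qsl

# Made-up next_results value; the '#' in the query arrives encoded as %23
next_results = '?max_id=313519052523986943&q=%23NCAA&include_entities=1'

# Naive splitting keeps the percent-encoded value as-is
naive = dict(kv.split('=') for kv in next_results[1:].split('&'))

# parse_qsl decodes each value, ready to be passed back as keyword arguments
decoded = dict(parse_qsl(next_results[1:]))

print(naive['q'], decoded['q'])
```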

Constructing Convenient Function Calls

Problem

You want to bind certain parameters to function calls and pass around a reference to the bound function in order to simplify coding patterns.

Solution

Use Python’s functools.partial to create fully or partially bound functions that can be elegantly passed around and invoked by other code without the need to pass additional parameters.

Discussion

Although not a technique that is exclusive to design patterns with the Twitter API, functools.partial is a pattern that you’ll find incredibly convenient to use in combination with the twitter package and many of the patterns in this cookbook and in your other Python programming experiences. For example, you may find it cumbersome to continually pass around a reference to an authenticated Twitter API connector (twitter_api, as illustrated in these recipes, is usually the first argument to most functions) and want to create a function that partially satisfies the function arguments so that you can freely pass around a function that can be invoked with its remaining parameters.

Another example that illustrates the convenience of partially binding parameters is that you may want to bind a Twitter API connector and a WOE ID for a geographic area to the Trends API as a single function call that can be passed around and simply invoked as is. Yet another possibility is that you may find that routinely typing json.dumps({...}, indent=1) is rather cumbersome, so you could go ahead and partially apply the keyword argument and rename the function to something shorter like pp (pretty-print) to save some repetitive typing.

The possibilities are vast, and while you could opt to use Python’s def keyword to define functions that usually achieve the same end, you may find that it’s more concise and elegant to use functools.partial in some situations. Example 9-5 demonstrates a few possibilities that you may find useful.

```python
import json
from functools import partial

pp = partial(json.dumps, indent=1)

twitter_world_trends = partial(twitter_trends, twitter_api, WORLD_WOE_ID)

print(pp(twitter_world_trends()))

authenticated_twitter_search = partial(twitter_search, twitter_api)
results = authenticated_twitter_search("iPhone")
print(pp(results))

authenticated_iphone_twitter_search = partial(authenticated_twitter_search, "iPhone")
results = authenticated_iphone_twitter_search()
print(pp(results))
```
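To make the comparison between def and functools.partial concrete, this short sketch binds the same keyword both ways, inspects what a partial object records, and shows that keywords passed at call time override bound ones. The `search` function is a hypothetical stand-in for twitter_search so that the example runs without network access.

```python
import json
from functools import partial

# Two equivalent ways to bind indent=1 to json.dumps
pp = partial(json.dumps, indent=1)

def pp_def(obj):
    return json.dumps(obj, indent=1)

# A partial object records the function and the arguments it bound
print(pp.func is json.dumps, pp.args, pp.keywords)

# Keyword arguments passed at call time override the bound ones
compact = pp({'a': [1, 2]}, indent=None)

# Positional arguments are bound from the left, which is why a connector
# placed first in a signature (like twitter_api) is easy to bind
def search(api, q, max_results=200):
    # Hypothetical stand-in for twitter_search that echoes its inputs
    return (api, q, max_results)

bound_search = partial(search, 'API')
result = bound_search('iPhone', max_results=10)
```

One practical difference: a partial object exposes `.func`, `.args`, and `.keywords`, whereas a def-based wrapper hides what it binds inside its body.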

Saving and Restoring JSON Data with Text Files

Problem

You want to store relatively small amounts of data that you’ve fetched from Twitter’s API for recurring analysis or archival purposes.

Solution

Write the data out to a text file in a convenient and portable JSON representation.

Discussion

Although text files won’t be appropriate for every occasion, they are a portable and convenient option to consider if you need to just dump some data out to disk to save it for experimentation or analysis. In fact, this would be considered a best practice so that you minimize the number of requests to Twitter’s API and avoid the inevitable rate-limiting issues that you’ll likely encounter. After all, it certainly would not be in your best interest or Twitter’s best interest to repetitively hit the API and request the same data over and over again.

Example 9-6 demonstrates a fairly routine use of Python’s io package to ensure that any data that you write to and read from disk is properly encoded and decoded as UTF-8 so that you can avoid the (often dreaded and not often well understood) UnicodeDecodeError exceptions that commonly occur with serialization and deserialization of text data in Python 2.x applications.

```python
import io, json

def save_json(filename, data):
    with io.open('resources/ch09-twittercookbook/{0}.json'.format(filename),
                 'w', encoding='utf-8') as f:
        # In Python 2.x, wrap the json.dumps result in unicode() before writing
        f.write(json.dumps(data, ensure_ascii=False))

def load_json(filename):
    with io.open('resources/ch09-twittercookbook/{0}.json'.format(filename),
                 encoding='utf-8') as f:
        # Deserialize the JSON text back into Python objects
        return json.load(f)

# Sample usage

q = 'CrossFit'

twitter_api = oauth_login()
results = twitter_search(twitter_api, q, max_results=10)

save_json(q, results)
results = load_json(q)

print(json.dumps(results, indent=1))
```
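A quick way to confirm that non-ASCII text survives the round trip is to write and read a small temporary file. This sketch uses hypothetical helpers, `save_json_to` and `load_json_from`, that take a full path instead of the chapter’s resources directory; the sample tweet text is invented.

```python
import io
import json
import os
import tempfile


def save_json_to(path, data):
    # ensure_ascii=False writes characters such as 'é' as-is; the explicit
    # utf-8 encoding on the file makes that safe
    with io.open(path, 'w', encoding='utf-8') as f:
        f.write(json.dumps(data, ensure_ascii=False))


def load_json_from(path):
    with io.open(path, encoding='utf-8') as f:
        return json.load(f)


data = [{'text': 'Café con leche ☕', 'retweet_count': 2}]
path = os.path.join(tempfile.mkdtemp(), 'sample.json')

save_json_to(path, data)
restored = load_json_from(path)

# Peek at the raw file contents to see how the characters were stored
with io.open(path, encoding='utf-8') as f:
    raw = f.read()
print(raw)
```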

Saving and Accessing JSON Data with MongoDB

Problem

You want to store and access nontrivial amounts of JSON data from Twitter API responses.

Solution

Use a document-oriented database such as MongoDB to store the data in a convenient JSON format.

Discussion

While a directory containing a relatively small number of properly encoded JSON files may work well for trivial amounts of data, you may be surprised at how quickly you start to amass enough data that flat files become unwieldy. Fortunately, document-oriented databases such as MongoDB are ideal for storing Twitter API responses, since they are designed to efficiently store JSON data.

MongoDB is a robust and well-documented database that works well for small or large amounts of data. It provides powerful query operators and indexing capabilities that significantly reduce the amount of analysis that you’ll need to do in custom Python code.

In most cases, if you put some thought into how to index and query your data, MongoDB will be able to outperform your custom manipulations through its use of indexes and efficient BSON representation on disk. See Chapter 6 for a fairly extensive introduction to MongoDB in the context of storing (JSONified mailbox) data and using MongoDB’s aggregation framework to query it in nontrivial ways. Example 9-7 illustrates how to connect to a running MongoDB database to save and load data.

Note

MongoDB is easy to install and contains excellent online documentation for both installation/configuration and query/indexing operations. The virtual machine for this book takes care of installing and starting it for you if you’d like to jump right in.

```python
import pymongo  # pip install pymongo

def save_to_mongo(data, mongo_db, mongo_db_coll, **mongo_conn_kw):

    # Connects to the MongoDB server running on
    # localhost:27017 by default
    client = pymongo.MongoClient(**mongo_conn_kw)

    # Get a reference to a particular database
    db = client[mongo_db]

    # Reference a particular collection in the database
    coll = db[mongo_db_coll]

    # Perform a bulk insert and return the IDs. Collection.insert was
    # removed in PyMongo 4; insert_many expects a list of documents.
    if isinstance(data, dict):
        data = [data]
    return coll.insert_many(data).inserted_ids

def load_from_mongo(mongo_db, mongo_db_coll, return_cursor=False,
                    criteria=None, projection=None, **mongo_conn_kw):

    # Optionally, use criteria and projection to limit the data that is
    # returned as documented in
    # http://docs.mongodb.org/manual/reference/method/db.collection.find/

    # Consider leveraging MongoDB's aggregations framework for more
    # sophisticated queries.

    client = pymongo.MongoClient(**mongo_conn_kw)
    db = client[mongo_db]
    coll = db[mongo_db_coll]

    if criteria is None:
        criteria = {}

    if projection is None:
        cursor = coll.find(criteria)
    else:
        cursor = coll.find(criteria, projection)

    # Returning a cursor is recommended for large amounts of data
    if return_cursor:
        return cursor
    else:
        return [item for item in cursor]

# Sample usage

q = 'CrossFit'

twitter_api = oauth_login()
results = twitter_search(twitter_api, q, max_results=10)

save_to_mongo(results, 'search_results', q)

print(load_from_mongo('search_results', q))
```

Sampling the Twitter Firehose with the Streaming API

Problem

You want to analyze what people are tweeting about right now from a real-time stream of tweets as opposed to querying the Search API for what might be slightly (or very) dated information. Or, you want to begin accumulating nontrivial amounts of data about a particular topic for later analysis.

Solution

Use Twitter’s Streaming API to sample public data from the Twitter firehose.

Discussion

Twitter makes up to 1% of all tweets available in real time through a random sampling technique that represents the larger population of tweets and exposes these tweets through the Streaming API. Unless you want to go to a third-party provider such as GNIP or DataSift (which may actually be well worth the cost in many situations), this is about as good as it gets. Although you might think that 1% seems paltry, take a moment to realize that during peak loads, tweet velocity can be tens of thousands of tweets per second. For a broad enough topic, actually storing all of the tweets you sample could quickly become more of a problem than you might think. Access to up to 1% of all public tweets is significant.
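A back-of-envelope calculation shows how quickly a 1% sample adds up. Both inputs are assumptions chosen for illustration, not figures published by Twitter: an overall rate of 10,000 tweets per second (the low end of “tens of thousands”) and roughly 5 KB of JSON per tweet. `sampled_storage_per_day` is a hypothetical helper.

```python
def sampled_storage_per_day(firehose_tps, sample_rate, bytes_per_tweet):
    # Tweets per day that reach you through the sample, and their raw size
    tweets_per_day = firehose_tps * sample_rate * 24 * 60 * 60
    return tweets_per_day, tweets_per_day * bytes_per_tweet


# Assumed inputs: 10,000 tweets/s overall, a 1% sample, ~5 KB of JSON per tweet
tweets, size_bytes = sampled_storage_per_day(10000, 0.01, 5000)
print('%d tweets/day, about %.1f GB/day' % (tweets, size_bytes / 1e9))
```

Under these assumptions, that works out to 8,640,000 tweets and about 43.2 GB of raw JSON per day, before any indexing overhead.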

Whereas the Search API is a little bit easier to use and queries for “historical” information (which in the Twitterverse could mean data that is minutes or hours old, given how fast trends emerge and dissipate), the Streaming API provides a way to sample from worldwide information in as close to real time as you’ll ever be able to get. The twitter package exposes the Streaming API in an easy-to-use manner in which you can filter the firehose based upon keyword constraints, which is an intuitive and convenient way to access this information. Instead of constructing a twitter.Twitter connector, you construct a twitter.TwitterStream connector, which takes an auth keyword argument of the same twitter.oauth.OAuth type as previously introduced in Accessing Twitter’s API for Development Purposes and Doing the OAuth Dance to Access Twitter’s API for Production Purposes.

The sample code in Example 9-8 demonstrates how to get started with Twitter’s Streaming API.

```python
# Finding topics of interest by using the filtering capabilities it offers.

import sys
import twitter

# Query terms

q = 'CrossFit'  # Comma-separated list of terms

print('Filtering the public timeline for track="%s"' % (q,), file=sys.stderr)

# Returns an instance of twitter.Twitter
twitter_api = oauth_login()

# Reference the self.auth parameter
twitter_stream = twitter.TwitterStream(auth=twitter_api.auth)

# See https://dev.twitter.com/docs/streaming-apis
stream = twitter_stream.statuses.filter(track=q)

# For illustrative purposes, when all else fails, search for Justin Bieber
# and something is sure to turn up (at least, on Twitter)

for tweet in stream:
    # Skip messages that aren't tweets (e.g., keep-alives or timeouts)
    if isinstance(tweet, dict) and 'text' in tweet:
        print(tweet['text'])

    # Save to a database in a particular collection
```
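The streaming loop ends with a placeholder comment about saving to a database. One way to fill it in is to group tweets into batches so that you aren’t making one database round trip per tweet. This sketch is hypothetical: `batched_tweets` is not part of the twitter package, and it assumes the stream may yield non-tweet messages (such as keep-alive or timeout markers) that lack a `text` field. A finite list stands in for the live stream so the example runs offline.

```python
def batched_tweets(stream, batch_size=100):
    """Hypothetical helper: group tweets from a stream into lists of batch_size.

    Messages without a 'text' field (keep-alives, timeouts, deletion
    notices, and the like) are skipped.
    """
    batch = []
    for message in stream:
        if not isinstance(message, dict) or 'text' not in message:
            continue
        batch.append(message)
        if len(batch) == batch_size:
            yield batch
            batch = []
    if batch:  # Flush whatever is left when the stream ends
        yield batch


# A finite stand-in for twitter_stream.statuses.filter(...)
fake_stream = [{'text': 'a'}, {'timeout': True}, {'text': 'b'},
               None, {'delete': {}}, {'text': 'c'}]

batches = list(batched_tweets(fake_stream, batch_size=2))
print([[t['text'] for t in b] for b in batches])
```

Combined with Example 9-7, the loop body might become `for batch in batched_tweets(stream): save_to_mongo(batch, 'stream', q)`; note that with a live stream a partial batch is flushed only when the stream ends.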

Collecting Time-Series Data

Problem

You want to periodically query Twitter’s API for specific results or trending topics and store the data for time-series analysis.

Solution

Use Python’s built-in time.sleep function inside of an infinite loop to issue a query and store the results to a database such as MongoDB if the use of the Streaming API as illustrated in Sampling the Twitter Firehose with the Streaming API won’t work.

Discussion

Although it’s easy to get caught up in pointwise queries on particular keywords at a particular instant in time, the ability to sample data that’s collected over time and detect trends and patterns gives us access to a radically powerful form of analysis that is commonly overlooked. Every time you look back and say, “I wish I’d known…,” you may be recognizing a missed opportunity: had you had the foresight to preemptively collect the data, it might have been useful for extrapolation or for making predictions about the future (where applicable).

Time-series analysis of Twitter data can be truly fascinating given the ebbs and flows of topics and updates that can occur. Although it may be useful for many situations to sample from the firehose and store the results to a document-oriented database like MongoDB, it may be easier or more appropriate in some situations to periodically issue queries and record the results into discrete time intervals. For example, you might query the trending topics for a variety of geographic regions throughout a 24-hour period and measure the rate at which various trends change, compare rates of change across geographies, find the longest- and shortest-lived trends, and more.
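As a sketch of the trend-lifetime analysis just described, the function below counts how many sampling intervals each trend appears in and then picks the longest- and shortest-lived trends. It assumes you have already reduced each stored snapshot to a timestamp and a list of trend names; `trend_lifetimes` and the snapshot data are hypothetical. Counting intervals is only a proxy for lifetime, since it does not check that the appearances were consecutive.

```python
from collections import Counter


def trend_lifetimes(snapshots):
    """Hypothetical helper: count how many intervals each trend appeared in.

    `snapshots` is a list of (timestamp, trend_names) pairs, one per interval.
    """
    counts = Counter()
    for _, names in snapshots:
        counts.update(set(names))  # Count each trend at most once per interval
    return counts


# Invented snapshots taken one minute apart
snapshots = [
    ('2013-06-14 12:52:07', ['#Monday', '#WorldCup']),
    ('2013-06-14 12:53:07', ['#Monday', 'Mars']),
    ('2013-06-14 12:54:07', ['#Monday', 'Mars']),
]

lifetimes = trend_lifetimes(snapshots)
longest = lifetimes.most_common(1)[0]
shortest = min(lifetimes.items(), key=lambda item: item[1])
print(longest, shortest)
```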

Another compelling possibility that is being actively explored is the correlation between sentiment as expressed on Twitter and stock market movements. It’s easy enough to zoom in on particular keywords, hashtags, or trending topics and later correlate the data against actual stock market changes; this could be an early step in building a bot to make predictions about markets and commodities.

Example 9-9 is essentially a composition of Accessing Twitter’s API for Development Purposes, Example 9-3, and Example 9-7, and it demonstrates how you can use recipes as primitive building blocks to create more complex scripts with a little bit of creativity and copy/pasting.

```python
import sys
import datetime
import time
import twitter

def get_time_series_data(api_func, mongo_db_name, mongo_db_coll,
                         secs_per_interval=60, max_intervals=15, **mongo_conn_kw):

    # Default settings of 15 intervals and 1 API call per interval ensure that
    # you will not exceed the Twitter rate limit.
    interval = 0

    while True:

        # A timestamp of the form "2013-06-14 12:52:07"
        now = str(datetime.datetime.now()).split(".")[0]

        ids = save_to_mongo(api_func(), mongo_db_name,
                            mongo_db_coll + "-" + now, **mongo_conn_kw)

        print("Write {0} trends".format(len(ids)), file=sys.stderr)
        print("Zzz...", file=sys.stderr)
        sys.stderr.flush()

        time.sleep(secs_per_interval)  # seconds
        interval += 1

        # Honor the max_intervals parameter rather than a hardcoded value
        if interval >= max_intervals:
            break

# Sample usage

get_time_series_data(twitter_world_trends, 'time-series', 'twitter_world_trends')
```

Extracting Tweet Entities

Problem

You want to extract entities such as @username mentions, #hashtags, and URLs from tweets for analysis.

Solution

Extract the entities from the entities field of the tweets.

Discussion

Twitter’s API now provides tweet entities as a standard field for most of its API responses, where applicable. The entities field, illustrated in Example 9-10, includes user mentions, hashtags, references to URLs, media objects (such as images and videos), and financial symbols such as stock tickers. At the current time, not all fields may apply for all situations. For example, the media field will appear and be populated in a tweet only if a user embeds the media using a Twitter client that specifically uses a particular API for embedding the content; simply copying/pasting a link to a YouTube video won’t necessarily populate this field.

See the Tweet Entities API documentation for more details, including information on some of the additional fields that are available for each type of entity. For example, in the case of a URL, Twitter offers several variations, including the shortened and expanded forms as well as a value that may be more appropriate for displaying in a user interface for certain situations.
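One practical use of these URL variations is rewriting a tweet’s text with expanded links. This minimal sketch relies on each URL entity’s `indices` field (start and end offsets into the text) and substitutes from right to left so that earlier offsets stay valid. `expand_urls` and the sample tweet are hypothetical, and the sketch assumes the offsets count Unicode code points, which is also how Python 3 indexes strings.

```python
def expand_urls(tweet):
    """Hypothetical helper: replace shortened URLs with their expanded forms."""
    text = tweet['text']
    # Work right to left so replacing one URL doesn't shift the others' offsets
    url_entities = sorted(tweet['entities']['urls'],
                          key=lambda u: u['indices'][0], reverse=True)
    for url in url_entities:
        start, end = url['indices']
        text = text[:start] + url['expanded_url'] + text[end:]
    return text


# Invented tweet, trimmed to the fields used here
tweet = {
    'text': 'Great WOD today http://t.co/abc123 #CrossFit',
    'entities': {
        'urls': [{'url': 'http://t.co/abc123',
                  'expanded_url': 'http://example.com/wod',
                  'display_url': 'example.com/wod',
                  'indices': [16, 34]}],
    },
}

print(expand_urls(tweet))
```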

```python
import json

def extract_tweet_entities(statuses):

    # See https://dev.twitter.com/docs/tweet-entities for more details on tweet
    # entities

    if len(statuses) == 0:
        return [], [], [], [], []

    screen_names = [user_mention['screen_name']
                    for status in statuses
                    for user_mention in status['entities']['user_mentions']]

    hashtags = [hashtag['text']
                for status in statuses
                for hashtag in status['entities']['hashtags']]

    urls = [url['expanded_url']
            for status in statuses
            for url in status['entities']['urls']]

    symbols = [symbol['text']
               for status in statuses
               for symbol in status['entities']['symbols']]

    # In some circumstances (such as search results), the media entity
    # may not appear, so check for it on each status individually
    media = [media['url']
             for status in statuses
             for media in status['entities'].get('media', [])]

    return screen_names, hashtags, urls, media, symbols

# Sample usage

q = 'CrossFit'

statuses = twitter_search(twitter_api, q)

screen_names, hashtags, urls, media, symbols = extract_tweet_entities(statuses)

# Explore the first five items for each...

print(json.dumps(screen_names[0:5], indent=1))
print(json.dumps(hashtags[0:5], indent=1))
print(json.dumps(urls[0:5], indent=1))
print(json.dumps(media[0:5], indent=1))
print(json.dumps(symbols[0:5], indent=1))
```

Finding the Most Popular Tweets in a Collection of Tweets

Problem

You want to determine which tweets are the most popular among a collection of search results or any other batch of tweets, such as a user timeline.

Solution

Analyze the retweet_count field of a tweet to determine whether or not a tweet was retweeted and, if so, how many times.

Discussion

Analyzing the retweet_count field of a tweet, as shown in Example 9-11, is perhaps the most straightforward measure of a tweet’s popularity because it stands to reason that popular tweets will be shared with others. Depending on your particular interpretation of “popular,” however, another possible value that you could incorporate into a formula for determining a tweet’s popularity is its favorite_count, which is the number of times users have favorited (bookmarked) the tweet.

For example, you might weight the retweet_count at 1.0 and the favorite_count at 0.1 to add a marginal amount of weight to tweets that have been both retweeted and favorited if you wanted to use favorite_count as a tiebreaker. The particular choice of values in a formula is entirely up to you and will depend on how important you think each of these fields is in the overall context of the problem that you are trying to solve.

Other possibilities, such as incorporating an exponential decay that accounts for time and weights recent tweets more heavily than less recent tweets, may prove useful in certain analyses.
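The weighting and decay ideas above can be combined into a single scoring function. This is a sketch under stated assumptions, not the book's formula: popularity_score is a hypothetical name, the 1.0/0.1 weights come from the example above, and the optional half-life decay is one way (of many) to favor recent tweets. It assumes created_at uses Twitter's v1.1 timestamp format, such as "Wed Aug 27 13:08:45 +0000 2008".

```python
from datetime import datetime, timezone

# Twitter's v1.1 created_at format, e.g. "Wed Aug 27 13:08:45 +0000 2008"
CREATED_AT_FORMAT = "%a %b %d %H:%M:%S %z %Y"

def popularity_score(status, now, rt_weight=1.0, fav_weight=0.1,
                     half_life_hours=None):
    """Weighted retweet/favorite score, optionally decayed by tweet age."""
    score = (rt_weight * status.get("retweet_count", 0)
             + fav_weight * status.get("favorite_count", 0))
    if half_life_hours:
        created = datetime.strptime(status["created_at"], CREATED_AT_FORMAT)
        age_hours = (now - created).total_seconds() / 3600.0
        # Exponential decay: the score halves every half_life_hours
        score *= 0.5 ** (age_hours / half_life_hours)
    return score


sample = {"retweet_count": 10, "favorite_count": 5,
          "created_at": "Wed Aug 27 13:08:45 +0000 2008"}
two_hours_later = datetime(2008, 8, 27, 15, 8, 45, tzinfo=timezone.utc)
print(popularity_score(sample, two_hours_later))                     # 10.5
print(popularity_score(sample, two_hours_later, half_life_hours=2))  # 5.25
```

To rank a batch, sort on the score, for example sorted(statuses, key=lambda s: popularity_score(s, now), reverse=True). The half-life is a tuning knob: a short one strongly favors breaking content, while None disables decay entirely.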

Note

See also Finding Users Who Have Retweeted a Status and Extracting a Retweet’s Attribution for some additional discussion that may be helpful in navigating the space of analyzing and applying attribution to retweets, which can be slightly more confusing than it initially seems.

```python
def find_popular_tweets(twitter_api, statuses, retweet_threshold=3):

    # You could also consider using the favorite_count parameter as part of
    # this heuristic, possibly using it to provide an additional boost to
    # popular tweets in a ranked formulation

    return [status for status in statuses
            if status['retweet_count'] > retweet_threshold]

# Sample usage

q = "CrossFit"

twitter_api = oauth_login()
search_results = twitter_search(twitter_api, q, max_results=200)

popular_tweets = find_popular_tweets(twitter_api, search_results)

for tweet in popular_tweets:
    print(tweet['text'], tweet['retweet_count'])
```

Warning

The retweeted attribute in a tweet is not a shortcut for telling you whether or not a tweet has been retweeted. It is a so-called “perspectival” attribute that tells you whether or not the authenticated user (which would be you in the case that you are analyzing your own data) has retweeted a status, which is convenient for powering markers in user interfaces. It is called a perspectival attribute because it provides perspective from the standpoint of the authenticating user.

Finding the Most Popular Tweet Entities in a Collection of Tweets

Problem

You’d like to determine if there are any popular tweet entities, such as @username mentions, #hashtags, or URLs, that might provide insight into the nature of a collection of tweets.

Solution

Extract the tweet entities with a list comprehension, count them, and filter out any tweet entity that doesn’t exceed a minimal threshold.

Discussion

Twitter’s API provides access to tweet entities directly in the metadata values of a tweet through the entities field, as demonstrated in Extracting Tweet Entities. After extracting the entities, you can compute the frequencies of each and easily extract the most common entities with a collections.Counter (shown in Example 9-12), which is a staple in Python’s standard library and a considerable convenience in any frequency analysis experiment with Python. With a ranked collection of tweet entities at your fingertips, all that’s left is to apply filtering or other threshold criteria to the collection of tweets in order to zero in on particular tweet entities of interest.

```python
from collections import Counter

def get_common_tweet_entities(statuses, entity_threshold=3):

    # Create a flat list of all tweet entities
    tweet_entities = [e
                      for status in statuses
                      for entity_type in extract_tweet_entities([status])
                      for e in entity_type]

    # Compute frequencies
    c = Counter(tweet_entities).most_common()

    # Keep only the entities that meet the threshold
    return [(k, v) for (k, v) in c if v >= entity_threshold]

# Sample usage

q = 'CrossFit'

twitter_api = oauth_login()
search_results = twitter_search(twitter_api, q, max_results=100)
common_entities = get_common_tweet_entities(search_results)

print("Most common tweet entities")
print(common_entities)
```

Tabulating Frequency Analysis

Problem

You’d like to tabulate the results of frequency analysis experiments in order to easily skim the results or otherwise display them in a format that’s convenient for human consumption.

Solution

Use the prettytable package to easily create an object that can be loaded with rows of information and displayed as a table with fixed-width columns.

Discussion

The prettytable package is very easy to use and incredibly helpful in constructing easily readable, text-based output that can be copied and pasted into any report or text file (see Example 9-13). Just use pip install prettytable to install the package per the norms for Python package installation. A prettytable.PrettyTable is especially handy when used in tandem with a collections.Counter or other data structure that distills to a list of tuples that can be ranked (sorted) for analysis purposes.

Note

If you are interested in storing data for consumption in a spreadsheet, you may want to consult the documentation on the csv package that’s part of Python’s standard library. However, be aware that in Python 2 the package has some known issues (as documented) regarding its support for Unicode.
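In Python 3 the csv module works with str directly, so a frequency list such as the output of get_common_tweet_entities can be written out in a few lines. The following is a minimal sketch: write_entity_counts is a hypothetical helper, and the rows are made-up sample data. The csv documentation recommends opening real files with newline="" so that the module controls line endings itself.

```python
import csv
import io

def write_entity_counts(rows, fileobj):
    """Write (entity, count) pairs as CSV with a header row."""
    writer = csv.writer(fileobj)
    writer.writerow(["Entity", "Count"])
    writer.writerows(rows)


# In-memory demo; for a real file use
# open("entities.csv", "w", newline="", encoding="utf-8")
buf = io.StringIO()
write_entity_counts([("#CrossFit", 12), ("@example", 3)], buf)
print(buf.getvalue())
```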

```python
from prettytable import PrettyTable

# Get some frequency data

q = 'CrossFit'

twitter_api = oauth_login()
search_results = twitter_search(twitter_api, q, max_results=100)
common_entities = get_common_tweet_entities(search_results)

# Use PrettyTable to create a nice tabular display

pt = PrettyTable(field_names=['Entity', 'Count'])
for kv in common_entities:
    pt.add_row(list(kv))
pt.align['Entity'], pt.align['Count'] = 'l', 'r'  # Set column alignment
print(pt)
```

Finding Users Who Have Retweeted a Status

Solution

Use the GET retweeters/ids API endpoint to determine which users have retweeted the status.

Discussion

Although the GET retweeters/ids API returns the IDs of any users who have retweeted a status, there are a couple of subtle caveats that you should know about. In particular, keep in mind that this API reports only users who have retweeted by using Twitter’s native retweet API, as opposed to users who have copied and pasted a tweet and prepended it with “RT,” appended attribution with “(via @exampleUser),” or used another common convention.

Most Twitter applications (including the twitter.com user interface) use the native retweet API, but some users may still elect to share a status by “working around” the native API for the purposes of attaching additional commentary to a tweet or inserting themselves into a conversation that they’d otherwise be broadcasting only as an intermediary. For example, a user may suffix “< AWESOME!” to a tweet to display like-mindedness about it, and although the user may think of this as a retweet, he is actually quoting the tweet as far as Twitter’s API is concerned. At least part of the reason for the confusion between quoting a tweet and retweeting a tweet is that Twitter has not always offered a native retweet API. In fact, the notion of retweeting is a phenomenon that evolved organically and that Twitter eventually responded to by providing first-class API support back in late 2009.

An illustration may help to drive home this subtle technical detail: suppose that @fperez_org posts a status and then @SocialWebMining retweets it. At this point in time, the retweet_count of the status posted by @fperez_org would be equal to 1, and @SocialWebMining would have a tweet in its user timeline that indicates a retweet of @fperez_org’s status.

Now let’s suppose that @jyeee notices @fperez_org’s status by examining @SocialWebMining’s user timeline through twitter.com or an application like TweetDeck and clicks the retweet button. At this point in time, @fperez_org’s status would have a retweet_count equal to 2 and @jyeee would have a tweet in his user timeline (just like @SocialWebMining’s last status) indicating a retweet of @fperez_org.

Here’s the important point to understand: from the standpoint of any user browsing @jyeee’s timeline, @SocialWebMining’s intermediary link between @fperez_org and @jyeee is effectively lost. In other words, @fperez_org will receive the attribution for the original tweet, regardless of what kind of chain reaction gets set off involving multiple layers of intermediaries for a popular status.
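The attribution behavior just described can be seen in how a native retweet is structured: the original status is embedded under retweeted_status, so following it always leads back to the original author. The sketch below assumes that v1.1 structure; original_author and the trimmed sample statuses are hypothetical, and real statuses carry many more fields.

```python
def original_author(tweet):
    """Screen name that receives attribution for a status.

    For a native retweet, the original status is embedded under
    'retweeted_status', so the result is its author; the intermediary
    who surfaced it is not part of the result.
    """
    retweeted = tweet.get("retweeted_status")
    if retweeted is not None:
        return retweeted["user"]["screen_name"]
    return tweet["user"]["screen_name"]


# Hypothetical, heavily trimmed statuses mirroring the example above
jyeee_retweet = {"user": {"screen_name": "jyeee"},
                 "retweeted_status": {"user": {"screen_name": "fperez_org"}}}
print(original_author(jyeee_retweet))  # fperez_org
```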

With the ID values of any user who has retweeted the tweet in hand, it’s easy enough to get profile details using the GET users/lookup API. See Resolving User Profile Information for more details.

Given that Example 9-14 may not fully satisfy your needs, be sure to also carefully consider Extracting a Retweet’s Attribution as an additional step that you can take to discover broadcasters of a status. It provides an example that uses a regular expression to analyze the 140 characters of a tweet’s content to extract the attribution information for a quoted tweet if you are processing a historical archive of tweets or otherwise want to double-check the content for attribution information.

```python
import json

twitter_api = oauth_login()

print("""User IDs for retweeters of a tweet by @fperez_org
that was retweeted by @SocialWebMining and that @jyeee then retweeted
from @SocialWebMining's timeline\n""")
print(twitter_api.statuses.retweeters.ids(_id=334188056905129984)['ids'])
print(json.dumps(twitter_api.statuses.show(_id=334188056905129984), indent=1))
print()

print("@SocialWebMining's retweet of @fperez_org's tweet\n")
print(twitter_api.statuses.retweeters.ids(_id=345723917798866944)['ids'])
print(json.dumps(twitter_api.statuses.show(_id=345723917798866944), indent=1))
print()

print("@jyeee's retweet of @fperez_org's tweet\n")
print(twitter_api.statuses.retweeters.ids(_id=338835939172417537)['ids'])
print(json.dumps(twitter_api.statuses.show(_id=338835939172417537), indent=1))
```

Note

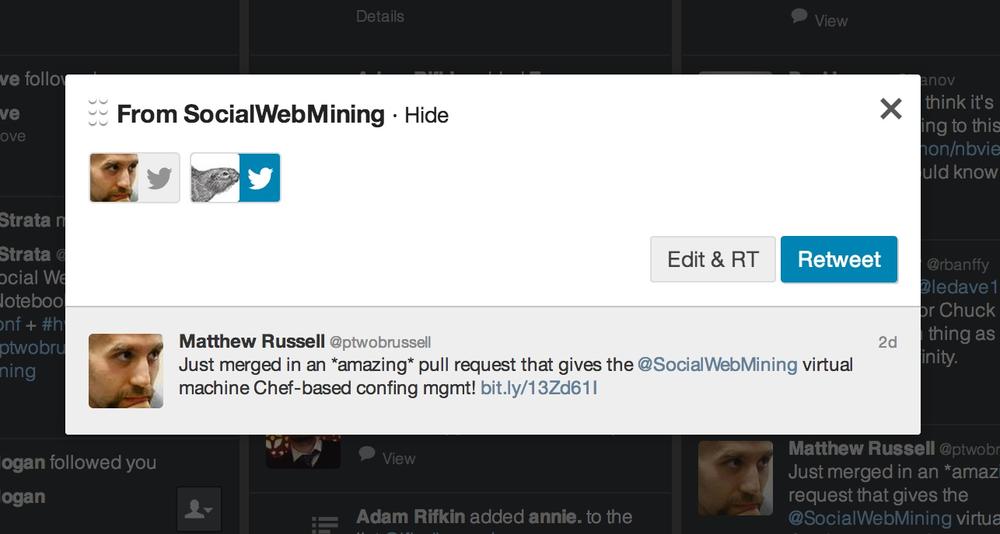

Some Twitter users intentionally quote tweets as opposed to using the retweet API in order to inject themselves into conversations and potentially be retweeted themselves, and the RT and via conventions are still widely used. In fact, popular applications such as TweetDeck include functionality for distinguishing between “Edit and RT” and a native “Retweet,” as illustrated in Figure 9-2.

Extracting a Retweet’s Attribution

Solution

Analyze the 140 characters of the tweet with regular expression heuristics for the presence of conventions such as “RT @SocialWebMining” or “(via @SocialWebMining).”

Discussion

Examining the results of Twitter’s native retweet API as described in Finding Users Who Have Retweeted a Status can provide the original attribution of a tweet in some, but certainly not all, circumstances. As noted in that recipe, it is sometimes the case that users will inject themselves into conversations for various reasons, so it may be necessary to analyze certain tweets in order to discover the original attribution. Example 9-15 demonstrates how to use regular expressions in Python to detect a couple of commonly used conventions that were adopted prior to the release of Twitter’s native retweet API and that are still in common use today.

```python
import re

def get_rt_attributions(tweet):

    # Regex adapted from Stack Overflow (http://bit.ly/1821y0J)

    rt_patterns = re.compile(r"(RT|via)((?:\b\W*@\w+)+)", re.IGNORECASE)
    rt_attributions = []

    # Inspect the tweet to see if it was produced with /statuses/retweet/:id.
    # See https://dev.twitter.com/docs/api/1.1/get/statuses/retweets/%3Aid.

    if 'retweeted_status' in tweet:
        attribution = tweet['retweeted_status']['user']['screen_name'].lower()
        rt_attributions.append(attribution)

    # Also, inspect the tweet for the presence of "legacy" retweet patterns
    # such as "RT" and "via", which are still widely used for various reasons
    # and potentially very useful. See https://dev.twitter.com/discussions/2847
    # and https://dev.twitter.com/discussions/1748 for some details on how/why.

    try:
        rt_attributions += [
            mention.strip()
            for mention in rt_patterns.findall(tweet['text'])[0][1].split()
        ]
    except IndexError:
        pass  # No legacy RT/via pattern in the text

    # Filter out any duplicates

    return list(set([rta.strip("@").lower() for rta in rt_attributions]))

# Sample usage

twitter_api = oauth_login()

tweet = twitter_api.statuses.show(_id=214746575765913602)
print(get_rt_attributions(tweet))
print()
tweet = twitter_api.statuses.show(_id=345723917798866944)
print(get_rt_attributions(tweet))
```
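The regular expression from Example 9-15 can be exercised on its own against plain strings. The sketch below is a variation rather than the book's code: it adds a leading \b (an assumption of this sketch) so that words that merely end in "rt" or "via", such as "start" or "trivia", are not treated as attribution markers; it extracts names with a second, simpler pattern so that punctuation like "RT:" does not leak into the results; and it scans every match rather than only the first. The function name legacy_rt_attributions is hypothetical.

```python
import re

# Same pattern as Example 9-15, except for the leading \b (an assumption of
# this sketch) that keeps "start @bob" or "trivia @bob" from matching.
RT_PATTERN = re.compile(r"\b(RT|via)((?:\b\W*@\w+)+)", re.IGNORECASE)

def legacy_rt_attributions(text):
    """Lowercased screen names credited with 'RT @name' or 'via @name'."""
    names = []
    for _keyword, mentions in RT_PATTERN.findall(text):
        # Pull out just the names so punctuation such as "RT:" is dropped
        names.extend(name.lower() for name in re.findall(r"@(\w+)", mentions))
    return list(dict.fromkeys(names))  # Deduplicate, keeping first-seen order


print(legacy_rt_attributions("RT @SocialWebMining Mining 1M+ Tweets About #Syria"))
# ['socialwebmining']
print(legacy_rt_attributions("Great read (via @ptwobrussell @SocialWebMining)"))
# ['ptwobrussell', 'socialwebmining']
print(legacy_rt_attributions("Time to start @bob"))
# []
```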

Making Robust Twitter Requests

Problem

In the midst of collecting data for analysis, you encounter unexpected HTTP errors that range from exceeding your rate limits (429 error) to the infamous “fail whale” (503 error) that need to be handled on a case-by-case basis.

Solution

Write a function that serves as a general-purpose API wrapper and provides abstracted logic for handling various HTTP error codes in meaningful ways.

Discussion

Although Twitter’s rate limits are arguably adequate for most applications, they are generally inadequate for data mining exercises, so it’s common that you’ll need to manage the number of requests that you make in a given time period and also account for other types of HTTP failures, such as the infamous “fail whale” or other unexpected network glitches. One approach, shown in Example 9-16, is to write a wrapper function that abstracts away this messy logic and allows you to simply write your script as though rate limits and HTTP errors do not exist for the most part.

Note

See Constructing Convenient Function Calls for inspiration on how you could use the standard library’s functools.partial function to simplify the use of this wrapper function for some situations. Also be sure to review the complete listing of Twitter’s HTTP error codes. Getting All Friends or Followers for a User provides a concrete implementation that illustrates how to use a function called make_twitter_request that should simplify the handling of some of the HTTP errors you may experience in harvesting Twitter data.

```python
import sys
import time
import json
from urllib.error import URLError
from http.client import BadStatusLine

import twitter

def make_twitter_request(twitter_api_func, max_errors=10, *args, **kw):

    # A nested helper function that handles common HTTPErrors. Return an updated
    # value for wait_period if the problem is a 500 level error. Block until the
    # rate limit is reset if it's a rate limiting issue (429 error). Returns None
    # for 401 and 404 errors, which requires special handling by the caller.
    def handle_twitter_http_error(e, wait_period=2, sleep_when_rate_limited=True):

        if wait_period > 3600:  # Seconds
            print('Too many retries. Quitting.', file=sys.stderr)
            raise e

        # See https://dev.twitter.com/docs/error-codes-responses for common codes

        if e.e.code == 401:
            print('Encountered 401 Error (Not Authorized)', file=sys.stderr)
            return None
        elif e.e.code == 404:
            print('Encountered 404 Error (Not Found)', file=sys.stderr)
            return None
        elif e.e.code == 429:
            print('Encountered 429 Error (Rate Limit Exceeded)', file=sys.stderr)
            if sleep_when_rate_limited:
                print("Retrying in 15 minutes...ZzZ...", file=sys.stderr)
                sys.stderr.flush()
                time.sleep(60 * 15 + 5)
                print('...ZzZ...Awake now and trying again.', file=sys.stderr)
                return 2
            else:
                raise e  # Caller must handle the rate limiting issue
        elif e.e.code in (500, 502, 503, 504):
            print('Encountered %i Error. Retrying in %i seconds' %
                  (e.e.code, wait_period), file=sys.stderr)
            time.sleep(wait_period)
            wait_period *= 1.5
            return wait_period
        else:
            raise e

    # End of nested helper function

    wait_period = 2
    error_count = 0

    while True:
        try:
            return twitter_api_func(*args, **kw)
        except twitter.api.TwitterHTTPError as e:
            error_count = 0
            wait_period = handle_twitter_http_error(e, wait_period)
            if wait_period is None:
                return
        except URLError:
            error_count += 1
            print("URLError encountered. Continuing.", file=sys.stderr)
            if error_count > max_errors:
                print("Too many consecutive errors...bailing out.", file=sys.stderr)
                raise
        except BadStatusLine:
            error_count += 1
            print("BadStatusLine encountered. Continuing.", file=sys.stderr)
            if error_count > max_errors:
                print("Too many consecutive errors...bailing out.", file=sys.stderr)
                raise

# Sample usage

twitter_api = oauth_login()

# See https://dev.twitter.com/docs/api/1.1/get/users/lookup for
# twitter_api.users.lookup

response = make_twitter_request(twitter_api.users.lookup,
                                screen_name="SocialWebMining")

print(json.dumps(response, indent=1))
```
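The retry policy that make_twitter_request applies to 5xx errors (wait 2 seconds, multiply the wait by 1.5 after each failure, and give up once the wait would exceed an hour) can be pulled out into a network-free helper that is easy to test. This is a minimal sketch under that assumption, not a drop-in replacement: call_with_backoff and backoff_schedule are hypothetical names, the is_retryable predicate stands in for the status-code checks, and sleep is injectable so that a test can record waits instead of actually sleeping. Rate limiting (429) is deliberately left out because it calls for a fixed 15-minute wait rather than backoff.

```python
import time

def backoff_schedule(initial=2.0, factor=1.5, ceiling=3600):
    """Yield geometrically growing wait periods, stopping past `ceiling` seconds."""
    wait = initial
    while wait <= ceiling:
        yield wait
        wait *= factor


def call_with_backoff(func, is_retryable, *args, sleep=time.sleep, **kw):
    """Call func(*args, **kw), sleeping and retrying while errors are retryable."""
    waits = backoff_schedule()
    while True:
        try:
            return func(*args, **kw)
        except Exception as e:
            if not is_retryable(e):
                raise  # Not a transient problem: let the caller handle it
            wait = next(waits, None)
            if wait is None:
                raise RuntimeError("Too many retries. Quitting.") from e
            sleep(wait)


# Usage: a fake call that fails twice with a transient error, then succeeds
attempts = []
def flaky():
    attempts.append(1)
    if len(attempts) < 3:
        raise ConnectionError("transient")
    return "ok"

slept = []
print(call_with_backoff(flaky, lambda e: isinstance(e, ConnectionError),
                        sleep=slept.append))  # ok
print(slept)  # [2.0, 3.0]
```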

Resolving User Profile Information

Solution

Use the GET users/lookup API to exchange as many as 100 IDs or usernames at a time for complete user profiles.

Discussion

Many APIs, such as GET friends/ids and GET followers/ids, return opaque ID values that need to be resolved to usernames or other profile information for meaningful analysis. Twitter provides a GET users/lookup API that can be used to resolve as many as 100 IDs or usernames at a time, and a simple pattern can be employed to iterate over larger batches. Although it adds a little bit of complexity to the logic, a single function can be constructed that accepts keyword parameters for your choice of either usernames or IDs that are resolved to user profiles. Example 9-17 illustrates such a function that can be adapted for a large variety of purposes, providing ancillary support for situations in which you’ll need to resolve user IDs.

```python
def get_user_profile(twitter_api, screen_names=None, user_ids=None):

    # Must have either screen_name or user_id (logical xor)
    assert (screen_names is not None) != (user_ids is not None), \
        "Must have screen_names or user_ids, but not both"

    items_to_info = {}

    items = screen_names or user_ids

    while len(items) > 0:

        # Process 100 items at a time per the API specifications for /users/lookup.
        # See https://dev.twitter.com/docs/api/1.1/get/users/lookup for details.

        items_str = ','.join([str(item) for item in items[:100]])
        items = items[100:]

        if screen_names:
            response = make_twitter_request(twitter_api.users.lookup,
                                            screen_name=items_str)
        else:  # user_ids
            response = make_twitter_request(twitter_api.users.lookup,
                                            user_id=items_str)

        # make_twitter_request returns None on 401/404, so guard the loop
        for user_info in response or []:
            if screen_names:
                items_to_info[user_info['screen_name']] = user_info
            else:  # user_ids
                items_to_info[user_info['id']] = user_info

    return items_to_info

# Sample usage

twitter_api = oauth_login()

print(get_user_profile(twitter_api, screen_names=["SocialWebMining", "ptwobrussell"]))
#print(get_user_profile(twitter_api, user_ids=[132373965]))
```
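The batching step inside get_user_profile is easy to isolate and check without touching the network. The sketch below (batch_for_lookup is a hypothetical helper) produces the comma-separated strings that GET users/lookup accepts, at most 100 items per request as described above; each string could then be passed as the screen_name or user_id parameter.

```python
def batch_for_lookup(items, batch_size=100):
    """Split IDs or screen names into comma-separated strings of at most batch_size."""
    return [",".join(str(item) for item in items[i:i + batch_size])
            for i in range(0, len(items), batch_size)]


# 250 IDs become three requests: 100 + 100 + 50
batches = batch_for_lookup(list(range(250)))
print(len(batches))  # 3
```

Unlike the while loop in Example 9-17, this version does not mutate its input, which makes it safe to call on a list you still need afterward.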

Extracting Tweet Entities from Arbitrary Text

Problem

You’d like to analyze arbitrary text and extract tweet entities such as @username mentions, #hashtags, and URLs that may appear within it.

Solution

Use a third-party package like twitter_text to extract tweet entities from arbitrary text such as historical tweet archives that may not contain tweet entities as currently provided by the v1.1 API.

Discussion

Twitter has not always extracted tweet entities, but you can easily derive them yourself with the help of a third-party package called twitter_text, as shown in Example 9-18.

You can install twitter-text with pip using the command pip install twitter-text-py.

```python
import twitter_text

# Sample usage

txt = "RT @SocialWebMining Mining 1M+ Tweets About #Syria http://wp.me/p3QiJd-1I"

ex = twitter_text.Extractor(txt)

print("Screen Names:", ex.extract_mentioned_screen_names_with_indices())
print("URLs:", ex.extract_urls_with_indices())
print("Hashtags:", ex.extract_hashtags_with_indices())
```

Getting All Friends or Followers for a User

Problem

You’d like to harvest all of the friends or followers for a (potentially very popular) Twitter user.

Solution

Use the make_twitter_request function introduced in Making Robust Twitter Requests to simplify the process of harvesting IDs by accounting for situations in which the number of followers may exceed what can be fetched within the prescribed rate limits.

Discussion

The GET followers/ids and GET friends/ids APIs can be navigated to retrieve all of the follower and friend IDs for a particular user, but the logic involved in retrieving all of the IDs can be nontrivial since each API request returns at most 5,000 IDs at a time. Although most users won’t have anywhere near 5,000 friends or followers, some celebrity users, who are often interesting to analyze, will have hundreds of thousands or even millions of followers. Harvesting all of these IDs can be challenging because of the need to walk the cursor for each batch of results and also account for possible HTTP errors along the way. Fortunately, it’s not too difficult to adapt make_twitter_request and previously introduced logic for walking the cursor of results to systematically fetch all of these IDs.
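The cursor-walking pattern can be expressed independently of the API, which makes it easy to test. In this sketch, fetch_page is an assumed callable that takes a cursor and returns a dict with ids and next_cursor keys (or None on an unrecoverable error), much as a partially bound make_twitter_request call would; collect_all_ids is a hypothetical name. A cursor of -1 requests the first page and a next_cursor of 0 signals the last one, matching the loop in Example 9-19.

```python
def collect_all_ids(fetch_page, limit=None):
    """Follow next_cursor until it is 0, the limit is reached, or a call fails."""
    ids, cursor = [], -1  # -1 asks for the first page
    while cursor != 0:
        response = fetch_page(cursor)
        if response is None:  # e.g., make_twitter_request gave up on a 401/404
            break
        ids.extend(response["ids"])
        cursor = response["next_cursor"]
        if limit is not None and len(ids) >= limit:
            break
    return ids if limit is None else ids[:limit]


# Fake two-page response keyed by cursor value
pages = {-1: {"ids": [1, 2, 3], "next_cursor": 42},
         42: {"ids": [4, 5], "next_cursor": 0}}
print(collect_all_ids(pages.get))           # [1, 2, 3, 4, 5]
print(collect_all_ids(pages.get, limit=2))  # [1, 2]
```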

Techniques similar to those introduced in Example 9-19 could be incorporated into the template supplied in Resolving User Profile Information to create a robust function that provides a secondary step, such as resolving a subset (or all) of the IDs for usernames. It is advisable to store the results in a document-oriented database such as MongoDB (as illustrated in Problem) after each batch of results so that no information is ever lost in the event of an unexpected glitch during a large harvesting operation.

Note

You may be better off paying a third party such as DataSift for faster access to certain kinds of data, such as the complete profiles for all of a very popular user’s (say, @ladygaga) followers. Before you attempt to collect such a vast amount of data, at least do the arithmetic and determine how long it will take, consider the possible (unexpected) errors that may occur along the way for very long-running processes, and consider whether it would be better to acquire the data from another source. What it may cost you in money, it may save you in time.
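Doing the arithmetic takes only a few lines. The sketch below assumes 5,000 IDs per GET followers/ids request (as stated above) and a rate limit of 15 requests per 15-minute window; that rate limit is an assumption you should check against Twitter’s current rate limit documentation, and the follower count is made up. estimate_harvest_hours is a hypothetical helper.

```python
import math

def estimate_harvest_hours(total_items, per_request, requests_per_window,
                           window_minutes=15):
    """Rough wall-clock estimate in hours, ignoring errors, retries, and latency."""
    requests = math.ceil(total_items / per_request)
    minutes = requests * window_minutes / requests_per_window
    return minutes / 60.0


# A hypothetical account with 1,000,000 followers:
# 200 requests at 15 per 15 minutes is 200 minutes, about 3.3 hours
print(round(estimate_harvest_hours(1000000, 5000, 15), 2))  # 3.33
```

The same function works for the profile-resolution step: plug in 100 items per GET users/lookup request and whatever request budget applies to that endpoint.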

```python
import sys
from functools import partial

def get_friends_followers_ids(twitter_api, screen_name=None, user_id=None,
                              friends_limit=sys.maxsize, followers_limit=sys.maxsize):

    # Must have either screen_name or user_id (logical xor)
    assert (screen_name is not None) != (user_id is not None), \
        "Must have screen_name or user_id, but not both"

    # See https://dev.twitter.com/docs/api/1.1/get/friends/ids and
    # https://dev.twitter.com/docs/api/1.1/get/followers/ids for details
    # on API parameters

    get_friends_ids = partial(make_twitter_request, twitter_api.friends.ids,
                              count=5000)
    get_followers_ids = partial(make_twitter_request, twitter_api.followers.ids,
                                count=5000)

    friends_ids, followers_ids = [], []

    for twitter_api_func, limit, ids, label in [
            [get_friends_ids, friends_limit, friends_ids, "friends"],
            [get_followers_ids, followers_limit, followers_ids, "followers"]]:

        if limit == 0:
            continue

        cursor = -1
        while cursor != 0:

            # Use make_twitter_request via the partially bound callable...
            if screen_name:
                response = twitter_api_func(screen_name=screen_name, cursor=cursor)
            else:  # user_id
                response = twitter_api_func(user_id=user_id, cursor=cursor)

            if response is not None:
                ids += response['ids']
                cursor = response['next_cursor']

            print('Fetched {0} total {1} ids for {2}'.format(
                len(ids), label, (user_id or screen_name)), file=sys.stderr)

            # XXX: You may want to store data during each iteration to provide
            # an additional layer of protection from exceptional circumstances

            if len(ids) >= limit or response is None:
                break

    # Do something useful with the IDs, like store them to disk...
    return friends_ids[:friends_limit], followers_ids[:followers_limit]

# Sample usage

twitter_api = oauth_login()

friends_ids, followers_ids = get_friends_followers_ids(twitter_api,
                                                       screen_name="SocialWebMining",
                                                       friends_limit=10,
                                                       followers_limit=10)

print(friends_ids)
print(followers_ids)
```

Analyzing a User’s Friends and Followers

Problem

You’d like to conduct a basic analysis that compares a user’s friends and followers.

Solution

Use setwise operations such as intersection and difference to analyze the user’s friends and followers.

Discussion

After harvesting all of a user’s friends and followers, you can conduct some primitive analyses using only the ID values themselves with the help of setwise operations such as intersection and difference, as shown in Example 9-20.

Given two sets, the intersection of the sets returns the items that they have in common, whereas the difference between the sets “subtracts” the items in one set from the other, leaving behind the difference. Recall that intersection is a commutative operation, while difference is not commutative.[35]

In the context of analyzing friends and followers, the intersection of two sets can be interpreted as “mutual friends” or people you are following who are also following you back, while the difference of two sets can be interpreted as followers who you aren’t following back or people you are following who aren’t following you back, depending on the order of the operands.
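A tiny worked example with made-up ID values shows both interpretations, and why operand order matters for difference but not for intersection. Python’s set operators & and - are equivalent to the intersection and difference methods used in Example 9-20.

```python
# Hypothetical ID sets for one account
friends = {1, 2, 3}    # accounts the user follows
followers = {2, 3, 4}  # accounts that follow the user

mutual = friends & followers              # {2, 3}: following each other
not_following_back = friends - followers  # {1}: followed by the user, not following back
not_followed_back = followers - friends   # {4}: following the user, not followed back

print(mutual == followers & friends)               # True: intersection is commutative
print(friends - followers == followers - friends)  # False: difference is not
```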

Given a complete list of friend and follower IDs, computing these setwise operations is a natural starting point and can be the springboard for subsequent analysis. For example, it probably isn’t necessary to use the GET users/lookup API to fetch profiles for millions of followers for a user as an immediate point of analysis.

You might instead opt to calculate the results of a setwise operation such as mutual friends (for which there are likely much stronger affinities) and home in on the profiles of these user IDs before spidering out further.

```python
def setwise_friends_followers_analysis(screen_name, friends_ids, followers_ids):

    friends_ids, followers_ids = set(friends_ids), set(followers_ids)

    print('{0} is following {1}'.format(screen_name, len(friends_ids)))

    print('{0} is being followed by {1}'.format(screen_name, len(followers_ids)))

    print('{0} of {1} are not following {2} back'.format(
        len(friends_ids.difference(followers_ids)),
        len(friends_ids), screen_name))

    print('{0} of {1} are not being followed back by {2}'.format(
        len(followers_ids.difference(friends_ids)),
        len(followers_ids), screen_name))

    print('{0} has {1} mutual friends'.format(
        screen_name, len(friends_ids.intersection(followers_ids))))

# Sample usage

screen_name = "ptwobrussell"

twitter_api = oauth_login()

friends_ids, followers_ids = get_friends_followers_ids(twitter_api,
                                                       screen_name=screen_name)
setwise_friends_followers_analysis(screen_name, friends_ids, followers_ids)
```

Harvesting a User’s Tweets

Solution

Use the GET statuses/user_timeline API endpoint to retrieve as many as 3,200 of the most recent tweets from a user, preferably with the added help of a robust API wrapper such as make_twitter_request (as introduced in Making Robust Twitter Requests) since this series of requests may exceed rate limits or encounter HTTP errors along the way.

Discussion

Timelines are a fundamental concept in the Twitter developer ecosystem, and Twitter provides a convenient API endpoint for the purpose of harvesting tweets by user through the concept of a “user timeline.” Harvesting a user’s tweets, as demonstrated in Example 9-21, is a meaningful starting point for analysis since a tweet is the most fundamental primitive in the ecosystem. A large collection of tweets by a particular user provides an incredible amount of insight into what the person talks (and thus cares) about. With an archive of several hundred tweets for a particular user, you can conduct dozens of experiments, often with little additional API access. Storing the tweets in a particular collection of a document-oriented database such as MongoDB is a natural way to store and access the data during experimentation. For longer-term Twitter users, performing a time series analysis of how interests or sentiments have changed over time might be a worthwhile exercise.

```python
import sys

def harvest_user_timeline(twitter_api, screen_name=None, user_id=None, max_results=1000):

    assert (screen_name is not None) != (user_id is not None), \
        "Must have screen_name or user_id, but not both"

    kw = {  # Keyword args for the Twitter API call
        'count': 200,
        'trim_user': 'true',
        'include_rts': 'true',
        'since_id': 1
    }

    if screen_name:
        kw['screen_name'] = screen_name
    else:
        kw['user_id'] = user_id

    max_pages = 16
    results = []

    tweets = make_twitter_request(twitter_api.statuses.user_timeline, **kw)

    if tweets is None:  # 401 (Not Authorized) - Need to bail out on loop entry
        tweets = []

    results += tweets

    print('Fetched %i tweets' % len(tweets), file=sys.stderr)

    page_num = 1

    # Many Twitter accounts have fewer than 200 tweets so you don't want to enter
    # the loop and waste a precious request if max_results = 200.

    # Note: Analogous optimizations could be applied inside the loop to try and
    # save requests. e.g. Don't make a third request if you have 287 tweets out of
    # a possible 400 tweets after your second request. Twitter does do some
    # post-filtering on censored and deleted tweets out of batches of 'count', though,
    # so you can't strictly check for the number of results being 200. You might get
    # back 198, for example, and still have many more tweets to go. If you have the
    # total number of tweets for an account (by GET /users/lookup/), then you could
    # simply use this value as a guide.

    if max_results == kw['count']:
        page_num = max_pages  # Prevent loop entry

    while page_num < max_pages and len(tweets) > 0 and len(results) < max_results:

        # Necessary for traversing the timeline in Twitter's v1.1 API:
        # get the next query's max-id parameter to pass in.
        # See https://dev.twitter.com/docs/working-with-timelines.
        kw['max_id'] = min([tweet['id'] for tweet in tweets]) - 1

        tweets = make_twitter_request(twitter_api.statuses.user_timeline, **kw)
        if tweets is None:  # Treat an error response as the end of the timeline
            tweets = []
        results += tweets

        print('Fetched %i tweets' % (len(tweets),), file=sys.stderr)

        page_num += 1

    print('Done fetching tweets', file=sys.stderr)

    return results[:max_results]

# Sample usage

twitter_api = oauth_login()
tweets = harvest_user_timeline(twitter_api, screen_name="SocialWebMining",
                               max_results=200)

# Save to MongoDB with save_to_mongo or a local file with save_json...
```
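The max_id technique used inside harvest_user_timeline can be seen in isolation against a fake timeline. In this sketch, page_through_timeline and fake_fetch are hypothetical, and fake_fetch only mimics the max_id semantics (return tweets whose IDs are less than or equal to max_id, newest first). With count=200 and max_pages=16, the loop tops out at 3,200 tweets, in line with the limit mentioned above for GET statuses/user_timeline.

```python
def page_through_timeline(fetch, count=200, max_pages=16):
    """Collect tweets newest-first by lowering max_id after each page.

    fetch(count=..., max_id=...) is an assumed callable returning a list of
    tweet dicts (newest first); max_id is omitted on the first call.
    """
    results = []
    kw = {"count": count}
    for _ in range(max_pages):
        batch = fetch(**kw)
        if not batch:
            break
        results.extend(batch)
        # Ask for tweets strictly older than the oldest one seen so far
        kw["max_id"] = min(tweet["id"] for tweet in batch) - 1
    return results


# Fake timeline of ten tweets with IDs 10 (newest) down to 1
def fake_fetch(count, max_id=None):
    ids = [i for i in range(10, 0, -1) if max_id is None or i <= max_id]
    return [{"id": i} for i in ids[:count]]

timeline = page_through_timeline(fake_fetch, count=3)
print([t["id"] for t in timeline])  # [10, 9, 8, 7, 6, 5, 4, 3, 2, 1]
```

Subtracting 1 from the smallest ID matters: without it, each page would repeat the oldest tweet of the previous one.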

Crawling a Friendship Graph

Problem

You’d like to harvest the IDs of a user’s followers, followers of those followers, followers of followers of those followers, and so on, as part of a network analysis—essentially crawling a friendship graph of the “following” relationships on Twitter.

Solution

Use a breadth-first search to systematically harvest friendship information that can rather easily be interpreted as a graph for network analysis.

Discussion

A breadth-first search is a common technique for exploring a graph and is one of the standard ways that you would start at a point and build up multiple layers of context defined by relationships. Given a starting point and a depth, a breadth-first traversal systematically explores the space such that it is guaranteed to eventually return all nodes in the graph up to the given depth, and the search explores the space such that each depth completes before the next depth is begun (see Example 9-22).

Keep in mind that it is quite possible that in exploring Twitter friendship graphs, you may encounter supernodes—nodes with very high degrees of outgoing edges—which can very easily consume computing resources and API requests that count toward your rate limit. It is advisable that you provide a meaningful cap on the maximum number of followers you’d like to fetch for each user in the graph, at least during preliminary analysis, so that you know what you’re up against and can determine whether the supernodes are worth the time and trouble for solving your particular problem. Exploring an unknown graph is a complex (and exciting) problem to work on, and various other tools, such as sampling techniques, could be intelligently incorporated to further enhance the efficacy of the search.

```python
def crawl_followers(twitter_api, screen_name, limit=1000000, depth=2):

    # Resolve the ID for screen_name and start working with IDs for consistency
    # in storage

    seed_id = str(twitter_api.users.show(screen_name=screen_name)['id'])

    _, next_queue = get_friends_followers_ids(twitter_api, user_id=seed_id,
                                              friends_limit=0, followers_limit=limit)

    # Store a seed_id => _follower_ids mapping in MongoDB

    save_to_mongo({'followers': [_id for _id in next_queue]}, 'followers_crawl',
                  '{0}-follower_ids'.format(seed_id))

    d = 1
    while d < depth:
        d += 1
        (queue, next_queue) = (next_queue, [])
        for fid in queue:
            # get_friends_followers_ids returns a (friends, followers) tuple
            _, follower_ids = get_friends_followers_ids(twitter_api, user_id=fid,
                                                        friends_limit=0,
                                                        followers_limit=limit)

            # Store a fid => follower_ids mapping in MongoDB
            save_to_mongo({'followers': [_id for _id in follower_ids]},
                          'followers_crawl', '{0}-follower_ids'.format(fid))

            next_queue += follower_ids

# Sample usage

screen_name = "timoreilly"
twitter_api = oauth_login()

crawl_followers(twitter_api, screen_name, depth=1, limit=10)
```
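The breadth-first strategy in Example 9-22 can also be written generically against any function that returns a node’s followers, which makes it easy to try out on a small in-memory graph before spending API requests. This is a sketch under stated assumptions: bfs_followers and the sample graph are hypothetical, per_node_limit plays the role of the cap on supernodes suggested above, and unlike crawl_followers it keeps a seen set so that no account is fetched twice. As in crawl_followers, depth=1 fetches only the seed’s followers.

```python
from collections import deque

def bfs_followers(get_followers, seed, depth=2, per_node_limit=None):
    """Breadth-first crawl returning {node: followers} for nodes within depth hops.

    get_followers(node) is an assumed callable returning a list of follower IDs.
    per_node_limit caps how many followers are kept per node (None = no cap).
    """
    graph = {}
    seen = {seed}
    queue = deque([(seed, 0)])
    while queue:
        node, d = queue.popleft()
        if d >= depth:
            continue  # Deep enough: don't spend a request on this node
        followers = list(get_followers(node))[:per_node_limit]
        graph[node] = followers
        for f in followers:
            if f not in seen:  # Never enqueue (and later fetch) a node twice
                seen.add(f)
                queue.append((f, d + 1))
    return graph


# Hypothetical follower graph: sample_graph[x] lists the accounts following x
sample_graph = {"a": ["b", "c"], "b": ["c", "d"], "c": ["a"], "d": ["e"]}
print(bfs_followers(lambda n: sample_graph.get(n, []), "a", depth=2))
# {'a': ['b', 'c'], 'b': ['c', 'd'], 'c': ['a']}
```

In practice get_followers would wrap get_friends_followers_ids with friends_limit=0, and each graph entry could be saved as it is produced rather than returned at the end.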

Analyzing Tweet Content

Problem

Given a collection of tweets, you’d like to do some cursory analysis of the 140 characters of content in each to get a better idea of the nature of discussion and ideas being conveyed in the tweets themselves.

Solution

Use simple statistics, such as lexical diversity and average number of words per tweet, to gain elementary insight into what is being talked about as a first step in sizing up the nature of the language being used.

Discussion

In addition to analyzing the content for tweet entities and conducting simple frequency analysis of commonly occurring words, you can also examine the lexical diversity of the tweets and calculate other simple statistics, such as the average number of words per tweet, to better size up the data (see Example 9-23). Lexical diversity is a simple statistic that is defined as the number of unique words divided by the number of total words in a corpus; by definition, a lexical diversity of 1.0 would mean that all words in a corpus were unique, while a lexical diversity that approaches 0.0 implies more duplicate words.
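To make the definition concrete, here is a small worked sketch of both statistics on two hypothetical tweet texts. The helper names mirror the nested helpers in Example 9-23, but these standalone versions are illustrative and return 0.0 for empty input instead of raising `ZeroDivisionError`.

```python
def lexical_diversity(tokens):
    """Unique tokens divided by total tokens; 0.0 for an empty list."""
    if not tokens:
        return 0.0
    return len(set(tokens)) / len(tokens)

def average_words(texts):
    """Mean number of whitespace-delimited words per text."""
    if not texts:
        return 0.0
    return sum(len(t.split()) for t in texts) / len(texts)

# Hypothetical tweet texts
tweets = ["the cat sat on the mat", "rt rt rt rt"]
words = [w for t in tweets for w in t.split()]

# 6 unique words ('the', 'cat', 'sat', 'on', 'mat', 'rt') out of 10 total
print(lexical_diversity(words))
# (6 + 4) / 2 words per tweet
print(average_words(tweets))
```

The first tweet alone has 5 unique words out of 6, while the repetitive second tweet has 1 unique word out of 4, so repetition pulls the combined score toward 0.0.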

Depending on the context, lexical diversity can be interpreted slightly differently. For example, in contexts such as literature, comparing the lexical diversity of two authors might be used to measure the richness or expressiveness of their language relative to each other. Although not usually the end goal in and of itself, examining lexical diversity often provides valuable preliminary insight (usually in conjunction with frequency analysis) that can be used to better inform possible follow-up steps.

In the Twittersphere, lexical diversity might be interpreted in a similar fashion if comparing two Twitter users, but it might also suggest a lot about the relative diversity of overall content being discussed, as might be the case with someone who talks only about technology versus someone who talks about a much wider range of topics. In a context such as a collection of tweets by multiple authors about the same topic (as would be the case in examining a collection of tweets returned by the Search API or the Streaming API), a much lower than expected lexical diversity might also imply that there is a lot of “group think” going on. Another possibility is a lot of retweeting, in which the same information is more or less being regurgitated. As with any other analysis, no statistic should be interpreted devoid of supporting context.
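One way to check whether retweeting explains a low score is to compute lexical diversity with and without retweets. This sketch assumes that retweets carry a `retweeted_status` field, as native retweets do in the v1.1 tweet format; the sample statuses and the function name are hypothetical.

```python
def lexical_diversity(tokens):
    """Unique tokens divided by total tokens; 0.0 for an empty list."""
    if not tokens:
        return 0.0
    return len(set(tokens)) / len(tokens)

def diversity_with_and_without_retweets(statuses):
    """Return (diversity of all tweets, diversity of non-retweets only)."""
    all_words = [w for s in statuses for w in s['text'].split()]
    originals = [s for s in statuses if 'retweeted_status' not in s]
    original_words = [w for s in originals for w in s['text'].split()]
    return lexical_diversity(all_words), lexical_diversity(original_words)

# Hypothetical search results: one original tweet and two retweets of it
original = {'text': 'new paper on graph mining'}
retweet = {'text': 'RT @a: new paper on graph mining',
           'retweeted_status': original}
statuses = [original, retweet, retweet]

print(diversity_with_and_without_retweets(statuses))
```

Here the three tweets contain 19 words but only 7 distinct ones; dropping the retweets leaves 5 words, all distinct, so the gap between the two numbers is a quick signal that regurgitated content is depressing the overall score.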

```python
def analyze_tweet_content(statuses):

    if len(statuses) == 0:
        print("No statuses to analyze")
        return

    # A nested helper function for computing lexical diversity
    def lexical_diversity(tokens):
        if len(tokens) == 0:
            return 0.0  # Avoid dividing by zero, e.g., when no hashtags appear
        return 1.0 * len(set(tokens)) / len(tokens)

    # A nested helper function for computing the average number of words per tweet
    def average_words(statuses):
        total_words = sum([len(s.split()) for s in statuses])
        return 1.0 * total_words / len(statuses)

    status_texts = [status['text'] for status in statuses]
    screen_names, hashtags, urls, media, _ = extract_tweet_entities(statuses)

    # Compute a collection of all words from all tweets
    words = [w
             for t in status_texts
             for w in t.split()]

    print("Lexical diversity (words):", lexical_diversity(words))
    print("Lexical diversity (screen names):", lexical_diversity(screen_names))
    print("Lexical diversity (hashtags):", lexical_diversity(hashtags))
    print("Average words per tweet:", average_words(status_texts))

# Sample usage
q = 'CrossFit'
twitter_api = oauth_login()
search_results = twitter_search(twitter_api, q)

analyze_tweet_content(search_results)
```

Summarizing Link Targets

Problem

You’d like to have a cursory understanding of what is being talked about in a link target, such as a URL that is extracted as a tweet entity, to gain insight into the nature of a tweet or the interests of a Twitter user.

Solution

Summarize the content in the URL to just a few sentences that can easily be skimmed (or more tersely analyzed in some other way) as opposed to reading the entire web page.

Discussion

Your imagination is the only limitation when it comes to trying to understand the human language data in web pages. Example 9-24 is an attempt to provide a template for processing and distilling that content into a terse form that could be quickly skimmed or analyzed by alternative techniques. In short, it demonstrates how to fetch a web page, isolate the meaningful content in the web page (as opposed to the prolific amounts of boilerplate text in the headers, footers, sidebars, etc.), remove the HTML markup that may be remaining in that content, and use a simple summarization technique to isolate the most important sentences in the content.
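Example 9-24 relies on boilerpipe to isolate the main content. If some markup survives, or you want a dependency-free fallback for the "remove the HTML markup" step, Python's standard `html.parser` module can strip tags. This is a minimal sketch under that assumption: `TextExtractor` and `strip_html` are hypothetical names, and the code does no boilerplate detection of its own; it only drops tags and the contents of `<script>` and `<style>` elements.

```python
from html.parser import HTMLParser

class TextExtractor(HTMLParser):
    """Collect text nodes, ignoring the contents of <script> and <style>."""

    def __init__(self):
        super().__init__()
        self.chunks = []
        self._skip_depth = 0  # > 0 while inside <script> or <style>

    def handle_starttag(self, tag, attrs):
        if tag in ('script', 'style'):
            self._skip_depth += 1

    def handle_endtag(self, tag):
        if tag in ('script', 'style') and self._skip_depth > 0:
            self._skip_depth -= 1

    def handle_data(self, data):
        if self._skip_depth == 0 and data.strip():
            self.chunks.append(data.strip())

def strip_html(html):
    """Return the visible text of `html`, joining fragments with single spaces."""
    parser = TextExtractor()
    parser.feed(html)
    parser.close()
    return ' '.join(parser.chunks)

# Sample usage
page = ('<html><head><style>p {color: red}</style></head>'
        '<body><p>Hello <b>world</b></p><script>var x = 1;</script></body></html>')
print(strip_html(page))
```

Joining fragments with spaces keeps words from running together across tags, at the cost of occasionally inserting a space before punctuation that followed a closing tag.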

The summarization technique rests on two premises: that the most important sentences, presented in the order in which they appear in the original text, make a good summary of the content, and that you can discover the most important sentences by identifying frequently occurring words that appear in close proximity to one another. Although a bit crude, this form of summarization works surprisingly well on reasonably well-written Web content.
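The "close proximity" idea can be isolated in a few lines. In this sketch (the `score_sentence` name is hypothetical), positions of important words are grouped into clusters whenever consecutive positions are less than `cluster_threshold` apart, each cluster scores (significant words in the cluster)² / (cluster span in words), and the sentence takes its best cluster score. Unlike the nested `score_sentences` helper in Example 9-24, which records only the first position of each important word via `s.index(w)`, this version considers every occurrence.

```python
def score_sentence(tokens, important_words, cluster_threshold=5):
    """Luhn-style score: the best cluster score among the sentence's clusters."""
    positions = [i for i, tok in enumerate(tokens) if tok in important_words]
    if not positions:
        return 0.0

    # Group positions into clusters of nearby important words
    clusters, current = [], [positions[0]]
    for prev, pos in zip(positions, positions[1:]):
        if pos - prev < cluster_threshold:
            current.append(pos)
        else:
            clusters.append(current)
            current = [pos]
    clusters.append(current)

    # Score each cluster as significant_words**2 / span; keep the maximum
    best = 0.0
    for c in clusters:
        span = c[-1] - c[0] + 1
        best = max(best, len(c) ** 2 / span)
    return best

# Sample usage
tokens = "graph mining is fun and graph mining scales".split()
print(score_sentence(tokens, {'graph', 'mining'}))                       # one cluster
print(score_sentence(tokens, {'graph', 'mining'}, cluster_threshold=3))  # two clusters
```

With the default threshold, all four important words form one cluster spanning 7 words (score 16/7); with a threshold of 3, the gap of 4 between positions 1 and 5 splits them into two clusters of 2 words each, each scoring 4/2.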

```python
import nltk
import numpy
from boilerpipe.extract import Extractor

def summarize(url, n=100, cluster_threshold=5, top_sentences=5):

    # Adapted from "The Automatic Creation of Literature Abstracts" by H.P. Luhn
    #
    # Parameters:
    # * n - Number of words to consider
    # * cluster_threshold - Distance between words to consider
    # * top_sentences - Number of sentences to return for a "top n" summary

    # Begin - nested helper function
    def score_sentences(sentences, important_words):
        scores = []
        sentence_idx = -1

        for s in [nltk.tokenize.word_tokenize(s) for s in sentences]:

            sentence_idx += 1
            word_idx = []

            # For each word in the word list...
            for w in important_words:
                try:
                    # Compute an index for important words in each sentence
                    word_idx.append(s.index(w))
                except ValueError:  # w not in this particular sentence
                    pass

            word_idx.sort()

            # It is possible that some sentences may not contain any important words
            if len(word_idx) == 0:
                continue

            # Using the word index, compute clusters with a max distance threshold
            # for any two consecutive words
            clusters = []
            cluster = [word_idx[0]]
            i = 1
            while i < len(word_idx):
                if word_idx[i] - word_idx[i - 1] < cluster_threshold:
                    cluster.append(word_idx[i])
                else:
                    clusters.append(cluster[:])
                    cluster = [word_idx[i]]
                i += 1
            clusters.append(cluster)

            # Score each cluster. The max score for any given cluster is the score
            # for the sentence.
            max_cluster_score = 0
            for c in clusters:
                significant_words_in_cluster = len(c)
                total_words_in_cluster = c[-1] - c[0] + 1
                score = 1.0 * significant_words_in_cluster \
                    * significant_words_in_cluster / total_words_in_cluster

                if score > max_cluster_score:
                    max_cluster_score = score

            scores.append((sentence_idx, max_cluster_score))

        return scores
    # End - nested helper function

    extractor = Extractor(extractor='ArticleExtractor', url=url)

    # It's entirely possible that this "clean page" will be a big mess. YMMV.
    # The good news is that the summarize algorithm inherently accounts for handling
    # a lot of this noise.
    txt = extractor.getText()

    sentences = [s for s in nltk.tokenize.sent_tokenize(txt)]
    normalized_sentences = [s.lower() for s in sentences]

    words = [w.lower() for sentence in normalized_sentences for w in
             nltk.tokenize.word_tokenize(sentence)]

    fdist = nltk.FreqDist(words)

    # most_common() yields (word, count) pairs in decreasing order of frequency;
    # load the stopword list once into a set for fast membership tests
    stopwords = set(nltk.corpus.stopwords.words('english'))
    top_n_words = [w[0] for w in fdist.most_common()
                   if w[0] not in stopwords][:n]

    scored_sentences = score_sentences(normalized_sentences, top_n_words)

    # Summarization Approach 1:
    # Filter out nonsignificant sentences by using the average score plus a
    # fraction of the std dev as a filter
    avg = numpy.mean([s[1] for s in scored_sentences])
    std = numpy.std([s[1] for s in scored_sentences])
    mean_scored = [(sent_idx, score) for (sent_idx, score) in scored_sentences
                   if score > avg + 0.5 * std]

    # Summarization Approach 2:
    # Another approach would be to return only the top N ranked sentences
    top_n_scored = sorted(scored_sentences, key=lambda s: s[1])[-top_sentences:]
    top_n_scored = sorted(top_n_scored, key=lambda s: s[0])

    # Decorate the post object with summaries
    return dict(top_n_summary=[sentences[idx] for (idx, score) in top_n_scored],
                mean_scored_summary=[sentences[idx] for (idx, score) in mean_scored])

# Sample usage
sample_url = 'http://radar.oreilly.com/2013/06/phishing-in-facebooks-pond.html'
summary = summarize(sample_url)

print("-------------------------------------------------")
print("                'Top N Summary'")
print("-------------------------------------------------")
print(" ".join(summary['top_n_summary']))
print()
print("-------------------------------------------------")
print("             'Mean Scored' Summary")
print("-------------------------------------------------")
print(" ".join(summary['mean_scored_summary']))
```

Analyzing a User’s Favorite Tweets

Problem

You’d like to learn more about what a person cares about by examining the tweets that a person has marked as favorites.

Solution

Use the GET favorites/list API endpoint to fetch a user’s favorite tweets and then apply techniques to detect, extract, and count tweet entities to characterize the content.

Discussion

Not all Twitter users take advantage of the bookmarking feature to identify favorites, so you can’t consider it a completely dependable technique for zeroing in on content and topics of interest; however, if you are fortunate enough to encounter a Twitter user who tends to bookmark favorites as a habit, you’ll often find a treasure trove of curated content. Although Example 9-25 shows an analysis that builds upon previous recipes to construct a table of tweet entities, you could apply more advanced techniques to the tweets themselves. A couple of ideas might include separating the content into different topics, analyzing how a person’s favorites have changed or evolved over time, or plotting out the regularity of when and how often a person marks tweets as favorites.
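As a starting point for looking at favorites over time, the sketch below buckets tweets by month from their `created_at` strings (format like `Wed Aug 27 13:08:45 +0000 2008`). Note the assumption: a status's `created_at` is when the tweet was posted, not when it was favorited, so this is only a proxy for favoriting activity. The function name and sample data are hypothetical.

```python
from collections import Counter
from datetime import datetime

# Format of the created_at field on v1.1 status objects
TWITTER_TIME_FORMAT = '%a %b %d %H:%M:%S %z %Y'

def tweets_by_month(statuses):
    """Return sorted (YYYY-MM, count) pairs based on each tweet's created_at."""
    counts = Counter()
    for status in statuses:
        created = datetime.strptime(status['created_at'], TWITTER_TIME_FORMAT)
        counts[created.strftime('%Y-%m')] += 1
    return sorted(counts.items())

# Sample usage with made-up favorites
favs = [{'created_at': 'Wed Aug 27 13:08:45 +0000 2008'},
        {'created_at': 'Thu Aug 28 10:00:00 +0000 2008'},
        {'created_at': 'Mon Sep 01 09:00:00 +0000 2008'}]
print(tweets_by_month(favs))
```

The resulting pairs can be fed directly into a histogram or line plot; swapping `'%Y-%m'` for `'%A'` or `'%H'` would bucket by weekday or hour instead.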

Keep in mind that in addition to favorites, any tweets that a user has retweeted are also promising candidates for analysis, and even analyzing patterns of behavior such as whether or not a user tends to retweet (and how often), bookmark (and how often), or both is an enlightening survey in its own right.
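A simple way to start surveying retweet behavior is to measure what fraction of a user's timeline consists of retweets and whom they retweet most. This sketch assumes the statuses come from a timeline fetched with retweets included (for example, via the `include_rts` parameter of GET statuses/user_timeline) and that native retweets carry a `retweeted_status` object with the original author under `user`. The function name and sample data are hypothetical.

```python
from collections import Counter

def retweet_profile(statuses):
    """Return (fraction of statuses that are retweets, Counter of retweeted authors)."""
    if not statuses:
        return 0.0, Counter()
    retweets = [s for s in statuses if 'retweeted_status' in s]
    authors = Counter(s['retweeted_status']['user']['screen_name']
                      for s in retweets)
    return len(retweets) / len(statuses), authors

# Sample usage with a made-up timeline
timeline = [{'text': 'hello'},
            {'retweeted_status': {'user': {'screen_name': 'alice'}}},
            {'retweeted_status': {'user': {'screen_name': 'alice'}}},
            {'retweeted_status': {'user': {'screen_name': 'bob'}}}]
ratio, authors = retweet_profile(timeline)
print(ratio, authors.most_common(2))
```

Comparing this ratio with the number of favorites from Example 9-25 gives a rough picture of whether a user tends to retweet, bookmark, or both.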

```python
from prettytable import PrettyTable

def analyze_favorites(twitter_api, screen_name, entity_threshold=2):

    # Could fetch more than 200 by walking the cursor as shown in other
    # recipes, but 200 is a good sample to work with.
    favs = twitter_api.favorites.list(screen_name=screen_name, count=200)
    print("Number of favorites:", len(favs))

    # Figure out what some of the common entities are, if any, in the content
    common_entities = get_common_tweet_entities(favs,
                                                entity_threshold=entity_threshold)

    # Use PrettyTable to create a nice tabular display
    pt = PrettyTable(field_names=['Entity', 'Count'])
    for kv in common_entities:
        pt.add_row(kv)
    pt.align['Entity'], pt.align['Count'] = 'l', 'r'  # Set column alignment

    print()
    print("Common entities in favorites...")
    print(pt)

    # Print out some other stats
    print()
    print("Some statistics about the content of the favorites...")
    analyze_tweet_content(favs)

    # Could also start analyzing link content or summarized link content, and more.

# Sample usage
twitter_api = oauth_login()
analyze_favorites(twitter_api, "ptwobrussell")
```

Note

Check out http://favstar.fm for an example of a popular website that aims to help you find “the best tweets” by tracking and analyzing what is being favorited and retweeted on Twitter.

Closing Remarks

Although this cookbook is really just a modest collection when compared to the hundreds or even thousands of possible recipes for manipulating and mining Twitter data, hopefully it has provided you with a good springboard and a sampling of ideas that you’ll be able to draw upon and adapt in many profitable ways. The possibilities for what you can do with Twitter data (and most other social data) are broad, powerful, and (perhaps most importantly) fun!

Note

Pull requests for additional recipes (as well as enhancements to these recipes) are welcome and highly encouraged, and will be liberally accepted. Please fork this book’s source code from its GitHub repository, commit a recipe to this chapter’s IPython Notebook, and submit a pull request! The hope is that this collection of recipes will grow in scope, provide a valuable starting point for social data hackers, and accumulate a vibrant community of contributors around it.

Recommended Exercises

- Review the Twitter Platform API in depth. Are there APIs that you are surprised to find (or not find) there?

- Analyze all of the tweets that you have ever retweeted. Are you at all surprised about what you have retweeted or how your interests have evolved over time?

- Juxtapose the tweets that you author versus the ones that you retweet. Are they generally about the same topics?

- Write a recipe that loads friendship graph data from MongoDB into a true graphical representation with NetworkX and employ one of NetworkX’s built-in algorithms, such as centrality measurement or clique analysis, to mine the graph. Chapter 7 provides an overview of NetworkX that you may find helpful to review before completing this exercise.

- Write a recipe that adapts visualizations from previous chapters for the purpose of visualizing Twitter data. For example, repurpose a graph visualization to display a friendship graph, adapt a plot or histogram in IPython Notebook to visualize tweeting patterns or trends for a particular user, or populate a tag cloud (such as Word Cloud Layout) with content from tweets.