Every programmer needs to know how to debug their programs. With plain Perl this is easy: just run the program with the -d flag to invoke the debugger. Under mod_perl, however, you have to jump through a few hoops.

In this chapter we explain how to correctly handle server, program, and user errors and how to keep your user loyal to your service by displaying good error messages.

We also demonstrate how you can peek at what is going on in a mod_perl-enabled server while it is running: for example, monitoring the value of a global variable, seeing what database connections are open, tracing what modules were loaded and their paths, checking the value of @INC, and much more.

It’s been said that there’s always one more bug in any given program. Bugs that show symptoms during the development cycle are usually easily found. As their number diminishes, the bugs become harder to find. Subtle interactions between software components can create bugs that aren’t easily reproduced. In such cases, tools and techniques that can help track down the offending code come in handy.

The Perl interpreter distinguishes between warnings and errors. Warnings are messages that the Perl interpreter prints to STDERR (or to Apache’s error log under mod_perl). These messages indicate that Perl thinks there is a problem with your code, but they do not prevent the code from running. Errors are output in the same way as warnings, but the program terminates after an error. For example, errors occur if your code uses invalid syntax. If a die( ) occurs outside of any exception-handling eval, it behaves just like an error, with a message being output and program execution terminating.

For someone new to Perl programming, the warning and error messages output by Perl can be confusing and worrisome. In this section we will show you how to interpret Perl’s messages, and how to track down and solve the problems that cause them.

Just like errors, Perl’s optional warnings, if they are enabled, go to the error_log file. You have enabled them in your development server, haven’t you? We discussed the various techniques to enable warnings in Chapter 4 and Chapter 6, but we will repeat them in this section.

The code you write lives a dual life. In the first life it is written, tested, debugged, improved, tested, debugged, rewritten, retested, and debugged again. In the second life it’s just used.

A significant part of the script’s first life is spent on the developer’s machine. The second life is spent on the production server, where the code is supposed to be perfect.

When you develop the code you want all the help you can get to spot possible problems. By enabling warnings you will ensure that Perl gives you all the help it can to identify actual or potential problems in your code. Whenever you see an error or warning in the error_log, you must try to get rid of it.

But why bother, if the program runs and seems to work?

The Perl interpreter issues warnings because it thinks that something’s wrong with your code. The Perl interpreter is rarely wrong; if you ignore the warnings it provides, you may well encounter problems later, perhaps when the code is used on the production server.

If each invocation of a script generates any superfluous warnings, it will be very hard to catch real problems. The warnings that seem important will be lost amongst the mass of “unimportant” warnings that you didn’t bother to fix. All warnings are important, and all warnings can be dealt with.

On the other hand, on a production server, you really want to turn warnings off. And there are good reasons for this:

- There is no added value in having the same warning showing up, again and again, triggered by thousands of script invocations. If your code isn’t very clean and generates even a single warning per script invocation, on a heavily loaded server you will end up with a huge error_log file in a short time.

- The warning-elimination phase is supposed to be a part of the development process and should be done before the code goes live.

- In any Perl script, not just under mod_perl, enabling runtime warnings has a performance impact.

mod_perl provides a very simple solution to handling warnings, so you should avoid enabling warnings in the scripts themselves unless you really have to. Let mod_perl control this mode globally. All you need to do is put the directive:

PerlWarn On

in httpd.conf on your development machine and the directive:

PerlWarn Off

on the live machine.

If there is a piece of code that generates warnings and you want to disable them only in that code, you can do that too. The Perl special variable $^W allows you to dynamically turn warnings mode on and off.

{
    local $^W = 0;
    # some code that generates innocuous warnings
}

Don’t forget to localize the setting inside a block. By localizing the variable you switch warnings off only within the scope of the block and ensure that the original value of $^W is restored upon exit from the block. Without localization, the setting of $^W will affect all the requests handled by the Apache child process that changed this variable, for all the scripts it executes, not just the one that changed $^W!
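The scoping behavior described above can be checked outside mod_perl with a short script. This is only a sketch: the helper warnings_flag( ) is a hypothetical name introduced for illustration, and setting $^W to 1 at the top merely stands in for a server running with PerlWarn On.

```perl
#!/usr/bin/perl
use strict;

$^W = 1;    # assumption: stands in for a server started with PerlWarn On

# hypothetical helper: reports the current state of the global warnings flag
sub warnings_flag { return $^W ? "on" : "off" }

my @seen;
push @seen, warnings_flag();       # before the block
{
    local $^W = 0;                 # switched off for this block only
    push @seen, warnings_flag();   # local is dynamic, so called code sees it too
}
push @seen, warnings_flag();       # original value restored on exit

print join(", ", @seen), "\n";     # prints: on, off, on
```

Because local( ) is dynamically scoped, any subroutine called from inside the block also runs with warnings off, which is usually what you want when silencing a noisy third-party function.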

Starting from Perl 5.6.0 you can use the warnings pragma:

{
    no warnings;
    # some code that generates innocuous warnings
}

The diagnostics pragma can shed more light on errors and warnings, as we will see in the following sections.

This pragma extends the terse diagnostics normally emitted during the compilation and runtime phases and augments them with the more verbose and endearing descriptions found in the perldiag manpage.

Like any other pragma, diagnostics is invoked with use, by placing:

use diagnostics;

in your program. This also turns warnings mode on for the scope of the program.

This pragma is especially useful when you are new to Perl and want a better explanation of the errors and warnings. It’s also helpful when you encounter some warning you’ve never seen before—e.g., when a new warning has been introduced in an upgraded version of Perl.

You may not want to leave diagnostics mode on for your production server. For each warning, diagnostics mode generates about ten times more output than warnings mode. If your code generates warnings that go into the error_log file, with the diagnostics pragma you will use disk space much faster.

Diagnostics mode adds a large performance overhead in comparison with just having the warnings mode on. You can see the benchmark results in Chapter 9.

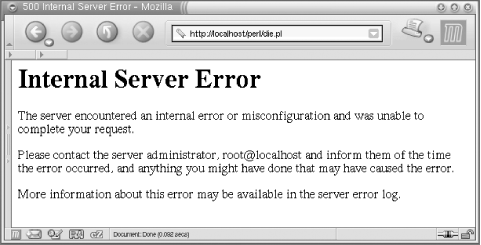

Say you’ve just installed a new script, and when you try it out you see the grey screen of death saying “Internal Server Error” (Figure 21-1). Or even worse, you’ve had a script running on a production server for a long time without problems, when the same grey screen starts to show up occasionally for no apparent reason.

How can you find out what the problem is, before you actually attempt to solve it?

The first problem is determining the location of the error message.

You have been coding in Perl for years, and whenever an error occurred in the past it was displayed in the same terminal window from which you started the script. But when you work with a web server, the errors do not show up in a terminal. In many cases, the server has no terminal to which to send the error messages.

Actually, the error messages don’t disappear; they end up in the error_log file. Its location is specified by the ErrorLog directive in httpd.conf. The default setting is:

ErrorLog logs/error_log

where logs/error_log is appended to the value of the ServerRoot directive. If you’ve followed the convention we’ve used in this book and your ServerRoot is:

ServerRoot /home/httpd/httpd_perl

the full path to the file will be /home/httpd/httpd_perl/logs/error_log.

Whenever you see “Internal Server Error” in a browser it’s time to look at this file.

There are cases when errors don’t go to the error_log file. This can happen when the server is starting and hasn’t gotten as far as opening the error_log file for writing before it needs to write an error message. In that case, Apache writes the messages to STDERR. If you have entered a nonexistent directory path in your ErrorLog directive in httpd.conf, the error message will be printed to STDERR. If the error happens when the server executes a PerlRequire, PerlModule, or other startup-time directive you might also see output sent to STDERR. If you haven’t redirected Apache’s STDERR, then the messages are printed to the console (tty, terminal) from which you started the server.

Note that when you’re running the server in single-process mode (httpd -X), the usual startup message:

Apache/1.3.24 (Unix) mod_perl/1.26 configured

won’t appear in the error_log file. Also, any startup warnings will be printed to the console, since in this mode the server redirects its STDERR stream to the error_log file only at a later stage.

The first problem is solved: we know where the error messages are.

The second problem is, how useful is the error message?

The usefulness of the error message depends to some extent on the programmer’s coding style. An uninformative message might not help you spot and fix the error.

For example, let’s take a function that opens a file passed to it as a parameter for reading. It does nothing else with the file. Here’s the first version of the code:

my $r = shift;
$r->send_http_header('text/plain');

sub open_file {
    my $filename = shift;
    die "No filename passed" unless defined $filename;
    open FILE, $filename or die;
}

open_file("/tmp/test.txt");

Let’s assume that /tmp/test.txt doesn’t exist, so the open( ) call will fail to open the file. When we call this script from our browser, the browser returns an “Internal Server Error” message and we see the following error appended to error_log:

Died at /home/httpd/perl/test.pl line 9.

We can use the hint Perl kindly gave to us to find where in the code die( ) was called. However, we still won’t necessarily know what filename was passed to this subroutine to cause the program termination.

If we have only one function call, as in the example above, the task of finding the problematic filename is trivial. Now let’s add one more open_file( ) function call and assume that of the two, only the file /tmp/test.txt exists:

open_file("/tmp/test.txt");
open_file("/tmp/test2.txt");

When you execute the above code, you will see:

Died at /home/httpd/perl/test.pl line 9.

Based on this error message, can you tell what file your program failed to open? Probably not. Let’s improve it by showing the name of the file that failed:

sub open_file {
    my $filename = shift;
    die "No filename passed" unless defined $filename;
    open FILE, $filename or die "failed to open $filename";
}

open_file("/tmp/test2.txt");

When we execute the above code, we see:

failed to open /tmp/test2.txt at /home/httpd/perl/test.pl line 9.

which obviously makes a big difference, since now we know what file we failed to open.

By the way, if you append a newline to the end of the message you pass to die( ), Perl won’t report the line number at which the error has happened. If you write:

open FILE, $filename or die "failed to open $filename\n";

the error message will be:

failed to open /tmp/test2.txt

which gives you very little to go on. It’s very hard to debug with such uninformative error messages.
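The effect of the trailing newline is easy to check in a standalone script. The following is a minimal sketch rather than part of the example above: the helper message_from( ) is a hypothetical name introduced here, and it uses eval only to capture the text that die( ) would otherwise have sent to the error_log.

```perl
use strict;
use warnings;

# hypothetical helper: run a code block and return the message die() produced
sub message_from {
    my $code = shift;
    eval { $code->(); 1 } or return $@;
    return '';
}

my $file = "/tmp/test2.txt";   # same filename as in the text; never opened here

my $with_location    = message_from(sub { die "failed to open $file"   });
my $without_location = message_from(sub { die "failed to open $file\n" });

print "no newline:   $with_location";      # Perl appended " at ... line N."
print "with newline: $without_location";   # message passed through unchanged
```

The first message ends with the script name and line number; the second is exactly the string that was passed to die( ).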

The warn( ) function outputs an error message in the same way as die( ), but whereas die( ) causes program termination, execution continues normally after a warn( ). Just like with die( ), if you add a newline to the end of the message, the filename and the line number from which warn( ) was called won’t be logged.

You might want to use warn( ) instead of die( ) if the failure isn’t critical. Consider the following code:

if (open FILE, $filename) {
    # do something with the file
    close FILE;
}
else {
    warn "failed to open $filename";
}
# more code here...

However, unless you have a really good reason to do otherwise, you should generally die( ) when your code encounters any problem whatsoever. It can be very hard to catch a problem that manifests itself only several hundred lines after the problem was caused.

A different approach for producing useful warnings and error messages is to print the function call stack backtrace. The Carp module comes to our aid with its cluck( ) function. Consider the script in Example 21-1.

Example 21-1. warnings.pl

#!/usr/bin/perl -w

use strict;
use Carp ( );
local $SIG{__WARN__} = \&Carp::cluck;

correct( );
incorrect( );

sub correct   { print_value("Perl"); }
sub incorrect { print_value( );      }

sub print_value {
    my $var = shift;
    print "My value is $var\n";
}

Carp::cluck( ) is assigned as a warnings signal handler. Whenever a warning is triggered, this function will be called. When we execute the script, we see:

My value is Perl
Use of uninitialized value at ./warnings.pl line 15.
    main::print_value( ) called at ./warnings.pl line 11
    main::incorrect( ) called at ./warnings.pl line 8
My value is

Take a moment to understand the stack trace in the warning. The deepest calls are printed first. So the second line tells us that the warning was triggered in print_value( ) and the third line tells us that print_value( ) was called by the subroutine incorrect( ):

script -> incorrect( ) -> print_value( )

When we look at the source code for the function incorrect( ), we see that we forgot to pass the variable to the print_value( ) function. Of course, when you write a subroutine like print_value( ), it’s a good idea to check the passed arguments before starting execution. We omitted that step to contrive an easily debuggable example.

You can also call Carp::cluck( ) directly in your code, and it will produce the call-stack backtrace for you. This is usually very useful during the code development phase.

Carp::confess( ) is like Carp::cluck( ), but it acts as a die( ) function (i.e., terminates the program) and prints the call-stack backtrace. The functions Carp::carp( ) and Carp::croak( ) are two other equivalents of warn( ) and die( ), respectively, but they report the location of the caller of the function in which they are used, rather than the location inside the function itself.
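To make the difference between die( ) and Carp::croak( ) concrete, here is a small sketch. The package My::Checker and its two subroutines are hypothetical names used only for illustration; a separate package is used because Carp looks for the first caller outside the package that called croak( ).

```perl
use strict;
use warnings;
use Carp ();

package My::Checker;    # hypothetical module, for illustration only

sub with_die   { die "bad argument" }            # reports this line
sub with_croak { Carp::croak("bad argument") }   # reports the caller's line

package main;

my ($die_msg, $croak_msg, $call_line);

eval { My::Checker::with_die() };
$die_msg = $@;

# remember the line of the call so it can be compared with the message
eval { $call_line = __LINE__; My::Checker::with_croak() };
$croak_msg = $@;

print "die:   $die_msg";
print "croak: $croak_msg";
print "croak( ) was called from line $call_line\n";
```

The message produced by die( ) points into My::Checker, which is of little help to the person who passed the bad argument, while the message produced by croak( ) points at the calling line in the main script.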

In some cases the built-in caller( ) function can be useful as well, but it can be a bit cumbersome to use when you need to peek several levels up the call stack.

When using the warn( ) and die( ) functions, be aware of the following pitfall. Here the message passed to die( ) is printed with no problems, assuming the file /does_not_exist actually doesn’t exist:

panic% perl -e 'open F, "/does_not_exist" or die "cannot open the file"'

But now try the same code using the seemingly equivalent || operator:

panic% perl -e 'open F, "/does_not_exist" || die "cannot open the file"'

Nothing happens! The pitfall lies in the precedence of the || operator. The above call is equal to:

panic% perl -e 'open F, ("/does_not_exist" || die "cannot open the file")'

where the left part returns true, and makes this call equivalent to:

panic% perl -e 'open F, "/does_not_exist"'

So the die( ) part has effectively disappeared. Make sure you always use the low-precedence logical OR operator or in this situation. Alternatively, you can use parentheses, but this is less visually appealing:

panic% perl -e 'open(F, "/does_not_exist") || die("cannot open the file")'

Only the first pair of parentheses is really needed here, but to be consistent we use them through the whole statement.
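The three spellings can be compared side by side in one script. This is a sketch under two assumptions: the path /does_not_exist really is absent on your system, and we use a lexical filehandle with the three-argument form of open( ) instead of the bareword handle shown above, which does not change the precedence issue.

```perl
use strict;
use warnings;

my $missing = "/does_not_exist";   # assumed not to exist, as in the text

my ($died_with_pipes, $died_with_or, $died_with_parens) = (0, 0, 0);

# "||" binds tighter than the commas of the argument list, so this parses as
# open(my $fh, "<", ($missing || die ...)) and die() is never reached
eval { open my $fh, "<", $missing || die "cannot open the file\n"; 1 }
    or $died_with_pipes = 1;

# "or" has the lowest precedence, so it tests the result of open()
eval { open my $fh, "<", $missing or die "cannot open the file\n"; 1 }
    or $died_with_or = 1;

# explicit parentheses around the arguments make "||" safe as well
eval { open(my $fh, "<", $missing) || die "cannot open the file\n"; 1 }
    or $died_with_parens = 1;

printf "%-20s %s\n", "||",               $died_with_pipes  ? "died" : "failed silently";
printf "%-20s %s\n", "or",               $died_with_or     ? "died" : "failed silently";
printf "%-20s %s\n", "|| with parens",   $died_with_parens ? "died" : "failed silently";
```

Only the first variant fails silently; the other two report the error as intended.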

Now let’s return to improving the warning and error messages. The failing code reports the names of the problematic files, but we still don’t know the real reason for the failure. Let’s try to improve the warn( ) example. The -r operator tests whether the file is readable:

if (-r $filename) {
    open FILE, $filename;
    # do something with file
}
else {
    warn "Couldn't open $filename - doesn't exist or is not readable";
}

Now if we cannot read the file we do not even try to open it. But we still see a warning in error_log:

Couldn't open /tmp/test.txt - doesn't exist or is not readable at /home/httpd/perl/test.pl line 9.

The warning tells us the reason for the failure, so we don’t have to go to the code and check what it was trying to do with the file.

It could be quite a coding overhead to explain all the possible failure reasons that way, but why reinvent the wheel? We already have the reason for the failure stored in the $! variable. Let’s go back to the open_file( ) function:

sub open_file {
    my $filename = shift;
    die "No filename passed" unless defined $filename;
    open FILE, $filename or die "failed to open $filename: $!";
}

open_file("/tmp/test.txt");

This time, if open( ) fails we see:

failed to open /tmp/test.txt: No such file or directory at /home/httpd/perl/test.pl line 9.

Now we have all the information we need to debug these problems: we know what line of code triggered die( ), we know what file we were trying to open, and we also know the reason, provided by Perl’s $! variable.
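For readers who want to try this outside the server, here is one possible variant of open_file( ) as a self-contained script. It is a sketch, not the code used above: the lexical filehandle, the three-argument open( ), and the returned handle are our own substitutions, and /does_not_exist is assumed to be absent. The exact wording of $! depends on the operating system and locale.

```perl
use strict;
use warnings;

# variant of open_file( ) that returns the handle to the caller
sub open_file {
    my $filename = shift;
    die "No filename passed" unless defined $filename;
    open my $fh, "<", $filename
        or die "failed to open $filename: $!";
    return $fh;
}

# trap the failure so we can look at the message instead of terminating
my $fh  = eval { open_file("/does_not_exist") };
my $err = $@;

print "error: $err" unless $fh;
```

The trapped message contains the filename, the system’s reason for the failure, and the location of the die( ) call, which is everything needed to start debugging.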

Note that there’s a big difference between the following two commonly seen bits of Perl code:

open FILE, $filename or die "Can't open $filename: $!";
open FILE, $filename or die "Can't open $filename!";

The first bit is helpful; the second is just rude. Please do your part to ease human suffering, and use the first version, not the second.

To show our useful error messages in action, let’s cause an error. We’ll create the file /tmp/test.txt as a different user and make sure that it isn’t readable by Apache processes:

panic% touch /tmp/test.txt
panic% chmod 0600 /tmp/test.txt # -rw-------

Now when we execute the latest version of the code, we see:

failed to open /tmp/test.txt: Permission denied at /home/httpd/perl/test.pl line 9.

Here we see a different reason: we created a file that doesn’t belong to the user the server runs as (usually nobody). It does not have permission to read the file.

Now you can see that it’s much easier to debug your code if you validate the return values of the system calls and properly code arguments to die( ) and warn( ) calls. The open( ) function is just one of the many system calls Perl provides.

Second problem solved: we now have useful error messages.

So now you can code and see error messages from mod_perl scripts and modules as easily as if they were plain Perl scripts that you execute from a shell.

It’s a good idea to keep the error_log open all the time in a dedicated terminal using tail -f:

panic% tail -f /home/httpd/httpd_perl/logs/error_log

or less -S:

panic% less -S /home/httpd/httpd_perl/logs/error_log

You can use whichever one you prefer (the latter allows you to navigate around the file, search, etc.). This will ensure that you see all the errors and warnings as they happen.

Another tip is to create a shell alias, to make it easier to execute the above commands. In a C-style shell, use:

panic% alias err "tail -f /home/httpd/httpd_perl/logs/error_log"

In a Bourne-style shell, use:

panic% alias err='tail -f /home/httpd/httpd_perl/logs/error_log'

From now on, in the shell you set the alias in, executing:

panic% err

will execute tail -f /home/httpd/httpd_perl/logs/error_log. If you are using a C-style shell, put the alias into your ~/.cshrc file or its equivalent. For setting this alias globally to all users, put it into /etc/csh.cshrc or similar. If you are using a Bourne-style shell, the corresponding files are usually ~/.bashrc and /etc/profile.

If you spend a lot of time browsing the Internet, you will see many error messages, ranging from generic but useless messages like “An error has happened” to the cryptic ones that no one understands. If you are developing a user-friendly system, it’s important to understand that the errors are divided into at least two major groups: user related and server related. When an error happens, you want to notify either a user or a server administrator, according to the category of the error. In some cases you may want to notify both.

If you set a file-upload limit to 1 MB and a user tries to upload a file bigger than the limit, it is a user error. You should report this error to the user, explain why the error has happened, and tell the user what to do to resolve the problem. Since we are talking about the Web, the error should be sent to the user’s browser. A system administrator usually doesn’t care about this kind of error, and therefore probably shouldn’t be notified, but it may be an indication of an attempt to compromise the server, so that may be a reason to notify the administrator.

If the user has successfully uploaded a file, but the server has failed to save this file for some reason (e.g., it ran out of free disk space), the error should be logged in error_log if possible and the system administrator should be notified by email, pager, or similar means. Since the user couldn’t accomplish what she was trying to do, you must tell her that the operation failed. The user probably doesn’t care why the operation has failed, but she would want to know how to resolve it (e.g., in the worst case, tell her to try again later). The actual reason for the error probably shouldn’t be displayed—if you do, it will probably only confuse the user. Instead, you should nicely explain that something went wrong and that the system administrator has been notified and will take care of the problem as soon as possible. If the service is very mission-critical, you probably need to provide the user with some problem tracking number and a way to contact a human, so she will be able to figure out when the problem has been resolved. Alternatively, you may want to ask for the user’s email address and use this to follow up on the problem.

Some applications use:

use CGI::Carp qw(fatalsToBrowser);

which sends all the errors to the browser. This module might be useful in development if you cannot access your server through an interactive session to look at the contents of the error_log file. But please don’t leave this line in the production version of your code. Instead, trap the errors and decide what to do about each error separately. To trap errors, you can use the eval( ) exception-handling mechanism:[1]

eval {
    # do something
};
if ($@) {
    # decide what to do about the error stored in $@
}

which is equivalent to the C++/Java/other languages concept of:

try {
    # do something
}
catch {
    # do something about errors
}

There are also CPAN modules, such as Error and Exception::Class, that use the same approach but provide a special interface for doing exception handling (and also provide additional functionality).
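As an illustration of trapping errors and treating user and server errors differently, consider the following sketch. Everything in it is hypothetical: the subroutines save_upload( ) and handle_request( ), the error hash with its type and msg keys, and the wording of the replies are assumptions made for the example, and a real handler would notify the administrator rather than merely call warn( ).

```perl
use strict;
use warnings;

my $MAX_UPLOAD = 1024 * 1024;   # 1 MB limit, as in the upload example above

# hypothetical worker: dies with a hash reference describing the error
sub save_upload {
    my ($size, $disk_free) = @_;
    die { type => 'user',   msg => "the file is larger than $MAX_UPLOAD bytes" }
        if $size > $MAX_UPLOAD;
    die { type => 'server', msg => "not enough free disk space" }
        if $size > $disk_free;
    return 1;
}

# hypothetical request handler: traps the error and decides what to report
sub handle_request {
    my ($size, $disk_free) = @_;
    my $ok = eval { save_upload($size, $disk_free) };
    return "upload saved" if $ok;

    my $err = $@;
    if (ref $err eq 'HASH' and $err->{type} eq 'user') {
        # user error: explain the problem and how to resolve it
        return "Upload rejected: $err->{msg}. Please choose a smaller file.";
    }

    # server error: log the details, give the user a generic message
    warn "upload failed: ", (ref $err eq 'HASH' ? $err->{msg} : $err), "\n";
    return "Something went wrong on our side. Please try again later.";
}

print handle_request(10,        1_000),      "\n";
print handle_request(2_000_000, 10_000_000), "\n";
print handle_request(500,       100),        "\n";
```

The user sees the cause only when it is something she can act on; the details of a server-side failure go to STDERR, which under mod_perl usually ends up in error_log.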

Another technique is to assign a signal handler:

$SIG{__DIE__} = sub {
    print STDERR "error: ", join("\n", @_), "\n";
    exit;
};

When die( ) is called, this anonymous function will be invoked and the argument list to die( ) will be forwarded to it. So if later in the code you write:

die "good bye, cruel world";

the code will print to STDERR (which under mod_perl usually ends up in error_log):

error: good bye, cruel world

and the normal program flow will be aborted, since the handler has called exit( ).

If you don’t localize this setting as:

local $SIG{__DIE__} = sub {...};

it affects the whole process. It also interferes with Perl’s normal exception mechanism, shown earlier; in fact, it breaks Perl’s exception handling, because a signal handler will be called before you get the chance to examine $@ after calling the eval block.

You can attempt to work around this problem by checking the value of $^S, which is true when the code is running in the eval block. If you are using Apache::Registry or a similar module, the code is always executed within an eval block, so this is not a good solution.

Since the signal handler setting is global, it’s possible that some other module might try to assign its own signal handler for __DIE__, and therefore there will be a mess. The two signal handlers will conflict with each other, leading to unexpected behavior. You should avoid using this technique, and use Perl’s standard eval exception-handling mechanism instead. For more information about exception handling, see http://perl.apache.org/docs/general/perl_reference/perl_reference.html#Exception_Handling_for_mod_perl.
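The interaction between a __DIE__ handler, eval, and $^S described above can be observed with a short standalone script. This is a sketch for experimentation only: unlike the handler shown earlier, the one below just records what it sees and does not call exit( ), so that the eval block can still complete. The @handler_calls array is a name introduced for the example.

```perl
use strict;
use warnings;

my @handler_calls;

# a __DIE__ handler that only records its argument and the value of $^S
local $SIG{__DIE__} = sub {
    my $msg = shift;
    push @handler_calls, { msg => $msg, in_eval => ($^S ? 1 : 0) };
};

# the error is trapped by eval, yet the handler is still invoked first
eval { die "trapped error\n" };
my $trapped = $@;

for my $call (@handler_calls) {
    print "handler saw: $call->{msg}";
    print "inside eval: ", ($call->{in_eval} ? "yes" : "no"), "\n";
}
print "eval caught: $trapped";
```

Had the handler called exit( ), the script would have terminated inside it and the code after the eval block would never have had the chance to examine $@.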

Normally, Apache runs one parent process and several children. The parent starts new child processes as required, logs errors, kills off child processes that have served MaxRequestsPerChild, etc. But it is the child processes that serve the actual requests from web browsers. Because the multiprocess model can get in your way when you’re trying to find a bug, sometimes running the server in single-process mode (with -X) is very important for testing during the development phase.

You may want to test that your application correctly handles global variables, if you have any. It is best to have as few globals as possible—ideally none—but sometimes you just can’t do without them. It’s hard to test globals with multiple servers executing your code, since each child has a different set of values for its global variables.

Imagine that you have a random( ) subroutine that returns a random number, and you have the following script:

use vars qw($num);
$num ||= random( );
print ++$num;

This script initializes the variable $num with a random value, then increments it on each request and prints it out. Running this script in a multiple-server environment will result in something like 1, 9, 4, 19 (a different number each time you hit the browser’s reload button), since each time your script will be served by a different child. But if you run in httpd -X single-server mode, you will get 6, 7, 8, 9... assuming that random( ) returned 5 on the first call (the value is incremented before it is printed).

But do not get too obsessive with this mode—working in single-server mode sometimes hides problems that show up when you switch to normal (multiple-server) mode.

Consider an application that allows you to change the configuration at runtime. Let’s say the script produces a form to change the background color of the page. This isn’t good design, but for the sake of demonstrating the potential problem we will assume that our script doesn’t write the changed background color to the disk—it simply stores it in memory, like this:

use CGI;
my $q = CGI->new( );
use vars qw($bgcolor);
$bgcolor ||= "white";
$bgcolor = $q->param('bgcolor') if $q->param('bgcolor');

where $bgcolor is set to a default "white" if it’s not yet set (otherwise, the value from the previous setting is used). Now if a user request updates the color, the script updates the global variable.

So you have typed in "yellow" for the new background color, and in response, your script prints back the HTML with the background color yellow. You think that’s it! If only it was so simple.

If you keep running in single-server mode you will never notice that you have a problem. However, if you run the same code in normal server mode, after you submit the color change you will get the result as expected, but when you call the same URL again (not via reload!) the chances are that you will get back the original default color (white, in this case). Only the child that processed the color-change request has its $bgcolor variable set to "yellow"; the rest still have "white". This shows that the design is incorrect: the information is stored in only one process, whereas many may be running.

Remember that children can’t share information directly, except for data that they inherited from their parent when they were created and that hasn’t subsequently been modified.

There are many solutions to this example problem: you could use a hidden HTML form variable for the color to be remembered, or store it in some more permanent place on the server side (a file or database), or you could use shared memory, and so on.
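The per-process nature of global variables can be reproduced without Apache by forking a couple of processes by hand. The following is a sketch under the assumption of a Unix-like system where fork( ) is available; run_child( ) is a hypothetical stand-in for an Apache child serving one request, and it reports the value it sees back to the parent through a pipe.

```perl
use strict;
use warnings;

$| = 1;                      # flush STDOUT so children inherit no buffered output

our $bgcolor = "white";      # global inherited by every child at fork time

# hypothetical stand-in for an Apache child: optionally change the global,
# then send the value this process sees back to the parent
sub run_child {
    my $new_color = shift;
    pipe(my $reader, my $writer) or die "pipe failed: $!";
    my $pid = fork();
    die "fork failed: $!" unless defined $pid;

    if ($pid == 0) {                         # child process
        close $reader;
        $bgcolor = $new_color if defined $new_color;
        print {$writer} $bgcolor;
        close $writer;
        exit 0;
    }

    close $writer;                           # parent process
    my $seen = <$reader>;
    close $reader;
    waitpid($pid, 0);
    return $seen;
}

my $first  = run_child("yellow");   # this child changes its own copy only
my $second = run_child();           # a sibling still sees the default

print "first child: $first, second child: $second, parent: $bgcolor\n";
# prints: first child: yellow, second child: white, parent: white
```

The change made by the first child is invisible to its sibling and to the parent, which is exactly what happens to $bgcolor when different Apache children serve consecutive requests.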

Note that when the server is running in single-process mode, and the response includes HTML with <img> tags, the loading of the images will take a long time for browsers that try to take advantage of the KeepAlive feature (e.g., Netscape). These browsers try to open multiple connections and keep them open. Because there is only one server process listening, each connection has to finish before the next can start. Turn off KeepAlive in httpd.conf to avoid this effect. Alternatively (assuming that the image-size parameters are included, so that a browser will be able to render the rest of the page) you can press Stop after a few seconds.

In addition, you should be aware that when running with -X you will not see the status messages that the parent server normally writes to the error_log file (“Server started”, “Server stopped”, etc.). Since httpd -X causes the server to handle all requests itself, without forking any children, there is no controlling parent to write the status messages.

Most Unix-style operating systems offer a “tracing utility” that intercepts and records the system calls that are called by a process and the signals that are received by a process. In this respect it is similar to gdb. The name of each system call, its arguments, and its return value are printed to STDERR or to the specified file.

The tracing utility is a useful diagnostic, instructional, and debugging tool. You can learn a lot about the underlying system while examining traces of the running programs. In the case of mod_perl, tracing can help improve performance by enabling us to spot and eliminate redundant system calls. It is also useful when debugging problems, for example when some process hangs.

Depending on your operating system, you should have available one of the utilities strace, truss, tusc, ktrace, or similar. In this book we will use the Linux strace utility.

There are two ways to get a trace of the process with strace. One way is to tell strace to start the process and do the tracing on it:

panic% strace perl -le 'print "mod_perl rules"'

Another way is to tell strace to attach to a process that’s already running:

panic% strace -p PID

Replace PID with the process number you want to check on.

Many other useful arguments are accepted by strace. For example, you can tell it to trace only specific system calls:

panic% strace -e trace=open,write,close,nanosleep \
    perl -le 'print "mod_perl rules"'

In this example we have asked strace to show us only the calls to open( ), write( ), close( ), and nanosleep( ), which reduces the output generated by strace, making it simpler to understand, providing you know what you are looking for.

The generated traces are too long (unless filtered with trace=tag) to be presented here completely. For example, if we ask for only the write( ) system calls, we get the following output:

panic% strace -e trace=write perl -le 'print "mod_perl rules"'
write(1, "mod_perl rules\n", 15mod_perl rules
) = 15

The output of the Perl one-liner gets mixed with the trace, so the actual trace is:

write(1, "mod_perl rules\n", 15) = 15

Note that the newline was automatically appended because of the -l option on the Perl command line.

Each line in the trace contains the system call name, followed by its arguments in parentheses and its return value. In the last example, a string of 15 characters was written to STDOUT, whose file descriptor is 1. And we can see that they were all successfully written, since the write( ) system call has returned a value of 15, the number of characters written.

The strace manpage provides a comprehensive explanation of how to interpret all parts of the traces; you may want to refer to this manpage to learn more about it.

When we are interested in mod_perl-level events, it’s quite hard to use system-level tracing, both because of the system trace’s verbosity and because it’s hard to find the boundary between events. Therefore, we need to do mod_perl-level tracing.

To enable mod_perl debug tracing, configure mod_perl with the PERL_TRACE option:

panic% perl Makefile.PL PERL_TRACE=1 ...

The trace levels can then be enabled via the MOD_PERL_TRACE environment variable, which can contain any combination of the following options:

- c

Trace directive handling during Apache (non-mod_perl) configuration-directive handling

- d

Trace directive handling during mod_perl directive processing during configuration read

- s

Trace processing of <Perl> sections

- h

Trace Perl handler callbacks during the processing of incoming requests and during startup (PerlChildInitHandler)

- g

Trace global variable handling, interpreter construction, END blocks, etc.

Alternatively, setting the environment variable to all will include all the options listed above.

One way of setting this variable is by adding this directive to httpd.conf:

PerlSetEnv MOD_PERL_TRACE all

For example, if you want to see a trace of the PerlRequire and PerlModule directives as they are executed, use:

PerlSetEnv MOD_PERL_TRACE d

You can also use the command-line environment, setting:

panic% setenv MOD_PERL_TRACE all
panic% ./httpd -X

If running under a Bourne-style shell, you can set the environment variable for only the duration of a single command:

panic% MOD_PERL_TRACE=all ./httpd -X

If using a different shell, you should try using the env utility, which has a similar effect:

panic% env MOD_PERL_TRACE=all ./httpd -X

For example, if you want to trace the processing of the Apache::Reload setting during startup and you want to see what happens when the following directives are processed:

PerlModule Apache::Reload
PerlInitHandler Apache::Reload
PerlSetVar ReloadAll Off
PerlSetVar ReloadModules "Apache::* Book::*"

do:

panic% setenv MOD_PERL_TRACE d
panic% ./httpd -X

PerlModule: arg='Apache::Reload'
loading perl module 'Apache::Reload'...ok
loading perl module 'Apache'...ok
loading perl module 'Tie::IxHash'...not ok

init `PerlInitHandler' stack
perl_cmd_push_handlers: @PerlInitHandler, 'Apache::Reload'
pushing `Apache::Reload' into `PerlInitHandler' handlers

perl_cmd_var: 'ReloadAll' = 'Off'

perl_cmd_var: 'ReloadModules' = 'Apache::* Book::*'

We have removed the rest of the trace and separated the output trace into four groups, each corresponding to the appropriate setting from our configuration example. So we can see that:

PerlModule Apache::Reload

loads the Apache::Reload and Apache modules but fails to load Tie::IxHash, since we don’t have it installed (which is not a fatal error in the case of Apache::Reload).

The following initializes the PerlInitHandler stack, as it wasn’t yet used, and pushes Apache::Reload there:

PerlInitHandler Apache::Reload

The last two directives call perl_cmd_var( ) to set the Perl variables that can be retrieved in the code with dir_config( ), as explained in Chapter 4:

PerlSetVar ReloadAll Off
PerlSetVar ReloadModules "Apache::* Book::*"

Now let’s look at the trace of the handlers called during the execution of this code:

use strict;

my $r = shift;

$r->send_http_header("text/plain");

$r->print("Hello");

We set MOD_PERL_TRACE to trace handler calls with h:

panic% setenv MOD_PERL_TRACE h

panic% ./httpd -X &

panic% tail -f /home/httpd/httpd_perl/logs/error_log

running 1 server configured stacked handlers for /perl/test.pl...

calling &{PerlInitHandler->[0]} (1 total)

&{PerlInitHandler->[0]} returned status=0

`PerlInitHandler' push_handlers( ) stack is empty

PerlInitHandler handlers returned 0

running 1 server configured stacked handlers for /perl/test.pl...

calling &{PerlPostReadRequestHandler->[0]} (1 total)

&{PerlPostReadRequestHandler->[0]} returned status=0

`PerlPostReadRequestHandler' push_handlers( ) stack is empty

PerlPostReadRequestHandler handlers returned 0

`PerlTransHandler' push_handlers( ) stack is empty

PerlTransHandler handlers returned -1

`PerlInitHandler' push_handlers( ) stack is empty

PerlInitHandler handlers returned -1

`PerlHeaderParserHandler' push_handlers( ) stack is empty

`PerlAccessHandler' push_handlers( ) stack is empty

PerlAccessHandler handlers returned -1

`PerlTypeHandler' push_handlers( ) stack is empty

PerlTypeHandler handlers returned -1

running 1 server configured stacked handlers for /perl/test.pl...

calling &{PerlFixupHandler->[0]} (1 total)

registering PerlCleanupHandler

&{PerlFixupHandler->[0]} returned status=-1

`PerlFixupHandler' push_handlers( ) stack is empty

PerlFixupHandler handlers returned -1

running 1 server configured stacked handlers for /perl/test.pl...

calling &{PerlHandler->[0]} (1 total)

&{PerlHandler->[0]} returned status=0

`PerlHandler' push_handlers( ) stack is empty

PerlHandler handlers returned 0

`PerlLogHandler' push_handlers( ) stack is empty

PerlLogHandler handlers returned -1

running registered cleanup handlers...

perl_call: handler is a cached CV

`PerlCleanupHandler' push_handlers( ) stack is empty

PerlCleanupHandler handlers returned -1

You can see what handlers were registered to be executed during the processing of this simple script. In our configuration we had these relevant directives:

PerlInitHandler Apache::Reload
PerlPostReadRequestHandler Book::ProxyRemoteAddr
PerlFixupHandler Apache::GTopLimit

And you can see that they were all called:

calling &{PerlInitHandler->[0]} (1 total)

&{PerlInitHandler->[0]} returned status=0

calling &{PerlPostReadRequestHandler->[0]} (1 total)

&{PerlPostReadRequestHandler->[0]} returned status=0

calling &{PerlFixupHandler->[0]} (1 total)

registering PerlCleanupHandler

&{PerlFixupHandler->[0]} returned status=-1

In addition, when Apache::GTopLimit was running, it registered a PerlCleanupHandler, which was executed at the end:

running registered cleanup handlers...
perl_call: handler is a cached CV

Since we were executing an Apache::Registry script, the PerlHandler was executed as well:

running 1 server configured stacked handlers for /perl/test.pl...

calling &{PerlHandler->[0]} (1 total)

&{PerlHandler->[0]} returned status=0

`PerlHandler' push_handlers( ) stack is empty

PerlHandler handlers returned 0

So if you debug your handlers, you can see what handlers were called, whether they have registered some new handlers on the fly, and what the return status from the executed handler was.

It’s a known fact that programmers spend a lot of time debugging their code. Sometimes we spend more time debugging code than writing it. The lion’s share of the time spent on debugging is spent on finding the cause of the bug and trying to reproduce the bug at will. Usually it takes little time to fix the problem once it’s understood.

A typical Perl program relies on many other modules written by other developers. Hence, no matter how good your code is, often you have to deal with bugs in the code written by someone else. No matter how hard you try to avoid learning to debug, you will have to do it at some point. And the earlier you acquire the skills, the better.

There are several levels of debugging complexity. The basic level is when Perl terminates the program during the compilation phase, before it tries to run the resulting byte code. This usually happens because there are syntax errors in the code, or perhaps because a used module is missing. Sometimes it takes quite an effort to solve these problems, since code that uses Apache core modules generally won’t compile when executed from the shell. Later we will learn how to solve syntax problems in mod_perl code quite easily.

Once the program compiles and starts to run, various runtime errors may happen, usually when Perl tries to interact with external resources (e.g., trying to open a file or to open a connection to a database). If the code validates whether such external resource calls succeed and aborts the program with die( ) if they do not (including a useful error message, as we explained at the beginning of the chapter), there is nothing to debug here, because the error message gives us all the needed information. These are not bugs in our code, and it’s expected that they may happen. However, if the error message is incomplete (e.g., if you didn’t include $! in the error message when attempting to open a file), or the program continues to run, ignoring the failed call, then you have to figure out where the badly written code is and correct it to abort on the failure, properly reporting the problem.
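The advice about including $! can be illustrated with a minimal sketch. The helper name first_line and the file path are hypothetical and were chosen only for this illustration:

```perl
use strict;
use warnings;

# Hypothetical helper: return the first line of a file, or die with a
# message that names the file and includes the system error from $!.
sub first_line {
    my $path = shift;
    open my $fh, '<', $path
        or die "Cannot open '$path' for reading: $!\n";
    my $line = <$fh>;
    close $fh;
    return $line;
}

# Trap the exception so that the message can be inspected.
my $line = eval { first_line('/no/such/dir/no-such-file.txt') };
print "Caught: $@" if $@;
```

Because the message names both the file and the reason reported by the operating system, there is usually nothing left to debug when it shows up in the error_log file.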

Of course, there are cases where a failure to do something is not fatal. For example, consider a program that tries to open a connection to a database, and it’s known that the database is being stopped every so often for maintenance. Here, the program may choose to try again and again until the database becomes available and aborts itself only after a certain timeout period. In such cases we hope that the logic is properly implemented, so it won’t lead to mysterious, hard-to-detect bugs.
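A retry loop of this kind might be sketched as follows. The helper retry_until and the stand-in "connection" code are assumptions made for the example; a real program would call its database driver inside the callback:

```perl
use strict;
use warnings;

# Hypothetical helper: call $attempt until it returns a true value or
# $timeout seconds have passed, sleeping $delay seconds between tries.
sub retry_until {
    my ($attempt, $timeout, $delay) = @_;
    my $deadline = time + $timeout;
    while (1) {
        my $result = $attempt->();
        return $result if $result;
        die "Gave up after $timeout seconds\n" if time >= $deadline;
        sleep $delay;
    }
}

# Stand-in for a database connection that succeeds on the third try.
my $tries = 0;
my $dbh = retry_until(sub { ++$tries >= 3 ? "connected" : undef }, 10, 1);
print "$dbh after $tries tries\n";
```

Keeping the timeout and the delay as explicit arguments makes the give-up condition easy to see, which helps avoid the hard-to-detect bugs mentioned above.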

If the running program is properly handling external resource calls, it may still be prone to internal logical errors, i.e., when the program doesn’t do what you thought you had programmed it to do. These are somewhat harder to solve than simple syntax errors, especially when there is a lot of code to be inspected and reviewed, but it’s just a matter of time. Perl can help a lot; typos can often be found simply by enabling warnings. For example, if you wanted to compare two numbers, but you omitted the second = character so that you had something like if ($yes = 1) instead of if ($yes == 1), with warnings enabled, Perl will warn you that you may have meant ==.
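To see this warning without hunting through a log, the following sketch compiles the suspicious code at runtime and collects whatever Perl reports. The variable @caught and the use of a string eval are choices made for this illustration only:

```perl
use strict;
use warnings;

my @caught;
{
    # Collect warnings instead of letting them go to STDERR.
    local $SIG{__WARN__} = sub { push @caught, @_ };

    # Compile the suspicious code at runtime so that the compile-time
    # warning is raised while our handler is installed.
    eval q{
        my $yes = 0;
        if ($yes = 1) { $yes++ }
        1;
    } or die $@;
}
print "Perl said: $_" for @caught;
```

The collected message begins with "Found = in conditional, should be ==", followed by the location of the offending line.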

The next level is when the program does what it’s expected to do most of the time, but occasionally misbehaves. Often you’ll find that print( ) statements or the Perl debugger can help, but inspection of the code generally doesn’t. Sometimes it’s easy to debug with print( ), dumping your data structures to a log file at some point, but typing the debug messages can become very tedious. That’s where the Perl debugger comes into its own.

While print( ) statements always work, running the Perl debugger for CGI-style scripts might be quite a challenge. But with the right knowledge and tools handy, the debugging process becomes much easier. Unfortunately, there is no one easy way to debug your programs, as the debugging depends entirely on your code. It can be a nightmare to debug really complex and obscure code, but as your style matures you can learn ways to write simpler code that is easier to debug. You will probably find that when you write simpler, clearer code it does not need so much debugging in the first place.

One of the most difficult cases to debug is when the process just terminates in the middle of processing a request and aborts with a “Segmentation fault” error (possibly dumping core, by creating a file called core in the current directory of the process that was running). Often this happens when the program tries to access a memory area that doesn’t belong to it. This is something that you rarely see with plain Perl scripts, but it can easily happen if you use modules whose guts are written in C or C++ and something goes wrong with them. Occasionally you will come across a bug in mod_perl itself (mod_perl is written in C and makes extensive use of XS macros).

In the following sections we will cover a selection of problems in detail, thoroughly discussing them and presenting a few techniques to solve them.

While developing code, we sometimes make syntax errors, such as forgetting to put a comma in a list or a semicolon at the end of a statement.

One approach to locating syntactically incorrect code is to execute the script from the shell with the -c flag:

panic% perl -c test.pl

This tells Perl to check the syntax but not to run the code (actually, it will execute BEGIN and CHECK blocks and use statements, because these are considered as occurring outside the execution of your program, and they can affect whether your program compiles correctly or not; current Perl versions skip INIT and END blocks).[2]

When checking syntax in this way it’s also a good idea to add the -w switch to enable warnings:

panic% perl -cw test.pl

If there are errors in the code, Perl will report the errors and tell you at which line numbers in your script the errors were found. For example, if we create a file test.pl with the contents:

@list = ('foo' 'bar');

and do syntax validation from the command line:

panic% perl -cw test.pl
String found where operator expected at test.pl line 1, near "'foo' 'bar'"
        (Missing operator before 'bar'?)
syntax error at test.pl line 1, near "'foo' 'bar'"
test.pl had compilation errors.

we can learn from the error message that we are missing an operator before the 'bar' string, which is of course a comma in this case. If we place the missing comma between the two strings:

@list = ('foo', 'bar');

and run the test again:

panic% perl -cw test.pl
Name "main::list" used only once: possible typo at test.pl line 1.
test.pl syntax OK

we can see that the syntax is correct now. But Perl still warns us that we have some variable that is defined but not used. Is this a bug? Yes and no—it’s what we really meant in this example, but our example doesn’t actually do anything, so Perl is probably right to complain.

The next step is to execute the script, since in addition to syntax errors there may be runtime errors. These are usually the errors that cause the “Internal Server Error” response when a page is requested by a client’s browser. With plain CGI scripts (running under mod_cgi) it’s the same as running plain Perl scripts—just execute them and see if they work.

The whole thing is quite different with scripts that use Apache::* modules. These can be used only from within the mod_perl server environment. Such scripts rely on other code, and an environment that isn’t available if you attempt to execute the script from the shell. There is no Apache request object available to the code when it is executed from the shell.

If you have a problem when using Apache::* modules, you can make a request to the script from a browser and watch the errors and warnings as they are logged to the error_log file. Alternatively, you can use the Apache::FakeRequest module, which tries to emulate a request and makes it possible to debug some scripts outside the mod_perl environment, as we will see in the next section.

Apache::FakeRequest is used to set up an empty Apache request object that can be used for debugging. The Apache::FakeRequest methods just set internal variables with the same names as the methods and return the values of the internal variables. Initial values for methods can be specified when the object is created. The print( ) method prints to STDOUT.

Subroutines for Apache constants are also defined so that you can use Apache::Constants while debugging, although the values of the constants are hardcoded rather than extracted from the Apache source code.
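The idea of methods that merely store and return values of the same name can be sketched in a few lines of plain Perl. The class My::FakeRequest below is a hypothetical stand-in written for this illustration; it is not the Apache::FakeRequest source and makes no claim about how that module is implemented:

```perl
use strict;
use warnings;

# Hypothetical stand-in class, written only to illustrate the idea.
package My::FakeRequest;

our $AUTOLOAD;

sub new {
    my ($class, %init) = @_;
    return bless { %init }, $class;
}

# Any unknown method acts as an accessor for a field of the same name:
# called with an argument it stores the value, and it always returns
# the current value.
sub AUTOLOAD {
    my $self = shift;
    (my $name = $AUTOLOAD) =~ s/.*:://;
    return if $name eq 'DESTROY';
    $self->{$name} = shift if @_;
    return $self->{$name};
}

# print( ) simply writes its arguments to STDOUT.
sub print {
    my $self = shift;
    print STDOUT @_;
}

package main;

my $r = My::FakeRequest->new(get_remote_host => 'www.example.com');
$r->content_type('text/plain');
$r->print("You are OK ", $r->get_remote_host, "\n");
```

Code that only reads and writes simple request properties can be exercised against such an object from the shell, which is the same trade-off the real module makes: convenient, but only as faithful as the values you preload.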

Example 21-2 is a very simple module that prints a brief message to the client’s browser.

Example 21-2. Book/Example.pm

package Book::Example;
use Apache::Constants qw(OK);

sub handler {
    my $r = shift;
    $r->send_http_header('text/plain');
    print "You are OK ", $r->get_remote_host, "\n";
    return OK;
}
1;

You cannot debug this module unless you configure the server to run it, by calling its handler from somewhere. So, for example, you could put in httpd.conf:

<Location /ex>
    SetHandler perl-script
    PerlHandler Book::Example
</Location>

Then, after restarting the server, you could start a browser, request the location http://localhost/ex, and examine the output. Tedious, no?

With the help of Apache::FakeRequest, you can write a little script that will emulate a request and return the output (see Example 21-3).

Example 21-3. fake.pl

#!/usr/bin/perl

use Apache::FakeRequest ( );
use Book::Example ( );

my $r = Apache::FakeRequest->new('get_remote_host'=>'www.example.com');
Book::Example::handler($r);

When you execute the script from the command line, you will see the following output as the body of the response:

You are OK www.example.com

As you can see, when Apache::FakeRequest was initialized, we hardcoded the Apache method get_remote_host( ) with a static value.

At the time of this writing, Apache::FakeRequest is far from being complete, but you may still find it useful.

If while developing your code you have to switch back and forth between the normal and fake modes, you may want to start your code in this way:

use constant MOD_PERL => $ENV{MOD_PERL};

my $r;
if (MOD_PERL) {
    $r = Apache->request;
} else {
    require Apache::FakeRequest;
    $r = Apache::FakeRequest->new;
}

When you run from the command line, the fake request will be used; otherwise, the usual method will be used.

The universal debugging tool across nearly all platforms and programming languages is printf( ) (or equivalent output functions). This function can send data to the console, a file, an application window, and so on. In Perl we generally use the print( ) function. With an idea of where and when the bug is triggered, a developer can insert print( ) statements into the source code to examine the value of data at certain stages of execution.

However, it is rather difficult to anticipate all the possible directions a program might take and what data might cause trouble. In addition, inline debugging code tends to add bloat and degrade the performance of an application and can also make the code harder to read and maintain. Furthermore, you have to comment out or remove the debugging print( ) calls when you think that you have solved the problem, and if later you discover that you need to debug the same code again, you need at best to uncomment the debugging code lines or, at worst, to write them again from scratch.

The constant pragma helps here. You can leave some debug printings in production code, without adding extra processing overhead, by using constants. For example, while developing the code, you can define a constant DEBUG whose value is 1:

package Foo;
use constant DEBUG => 1;
...
warn "entering foo" if DEBUG;
...

The warning will be printed, since DEBUG returns true. In production you just have to turn off the constant:

use constant DEBUG => 0;

When the code is compiled with a false DEBUG value, all those statements that are to be executed if DEBUG has a true value will be removed on the fly at compile time, as if they never existed. This allows you to keep some of the important debug statements in the code without any adverse impact on performance.
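One way to convince yourself that the statement really disappears is to decompile the subroutine with the core B::Deparse module and look at what is left. This is only a sketch; the subroutine foo and the message are taken from the example above, and the exact text that B::Deparse prints varies between Perl versions:

```perl
use strict;
use warnings;
use B::Deparse;

use constant DEBUG => 0;

sub foo {
    warn "entering foo" if DEBUG;
    return 42;
}

# Decompile the compiled subroutine back to Perl source text.
my $source = B::Deparse->new->coderef2text(\&foo);
print $source, "\n";
```

The decompiled text no longer contains the warn( ) call or its message, which shows that the statement was dropped when the code was compiled rather than skipped at runtime.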

But what if you have many different debug categories and you want to be able to turn them on and off as you need them? In this case, you need to define a constant for each category. For example:

use constant DEBUG_TEMPLATE => 1;
use constant DEBUG_SESSION  => 0;
use constant DEBUG_REQUEST  => 0;

Now if in your code you have these three debug statements:

warn "template" if DEBUG_TEMPLATE;
warn "session" if DEBUG_SESSION;
warn "request" if DEBUG_REQUEST;

only the first one will be executed, as it’s the only one that has a condition that evaluates to true.

Let’s look at a few examples where we use print( ) to debug some problem.

In one of our applications, we wrote a function that returns a date from one week ago. This function (including the code that calls it) is shown in Example 21-4.

Example 21-4. date_week_ago.pl

print "Content-type: text/plain\n\n";
print "A week ago the date was ",date_a_week_ago( ),"\n";

# return a date one week ago as a string in format: MM/DD/YYYY
sub date_a_week_ago {

    my @month_len = (31, 28, 31, 30, 31, 30, 31, 31, 30, 31, 30, 31);
    my($day, $month, $year) = (localtime)[3..5];

    for (my $j = 0; $j < 7; $j++) {

        $day--;
        if ($day == 0) {

            $month--;
            if ($month == 0) {
                $year--;
                $month = 12;
            }

            # there are 29 days in February in a leap year
            $month_len[1] =
                ($year % 400 == 0 or ($year % 4 == 0 and $year % 100))
                    ? 29 : 28;

            # set $day to be the last day of the previous month
            $day = $month_len[$month - 1];
        }
    }

    return sprintf "%02d/%02d/%04d", $month, $day, $year+1900;
}

This code is pretty straightforward. We get today’s date and subtract 1 from the value of the day we get, updating the month and the year on the way if boundaries are being crossed (end of month, end of year). If we do it seven times in a loop, at the end we should get a date from a week ago.

Note that since localtime( ) returns the year as a value of current_year-1900 (which means that we don’t have a century boundary to worry about), if we are in the middle of the first week of the year 2000, the value of $year returned by localtime( ) will be 100 and not 0, as one might mistakenly assume. So when the code does $year-- it becomes 99, not -1. At the end, we add 1900 to get back the correct four-digit year format. (If you plan to work with years before 1900, add 1900 to $year before the for loop.)

Also note that we have to account for leap years, where there are 29 days in February. For the other months, we have prepared an array containing the month lengths. A specific year is a leap year if it is either evenly divisible by 400 or evenly divisible by 4 and not evenly divisible by 100. For example, the year 1900 was not a leap year, but the year 2000 was a leap year. Logically written:

print( ($year % 400 == 0 or ($year % 4 == 0 and $year % 100))
       ? 'Leap' : 'Not Leap' );

Now when we run the script and check the result, we see that something is wrong. For example, if today is 10/23/1999, we expect the above code to print 10/16/1999. In fact, it prints 09/16/1999, which means that we have lost a month. The above code is buggy!
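As an aside on the leap-year rule described above, here is a minimal sketch of it as a standalone function. The name is_leap_year is hypothetical, and the function assumes that it is given a full four-digit year:

```perl
use strict;
use warnings;

# Hypothetical helper: true if the given four-digit year is a leap year,
# i.e., divisible by 400, or divisible by 4 but not by 100.
sub is_leap_year {
    my $year = shift;
    return ($year % 400 == 0 or ($year % 4 == 0 and $year % 100 != 0))
        ? 1 : 0;
}

for my $year (1900, 1999, 2000, 2024) {
    printf "%d: %s\n", $year, is_leap_year($year) ? 'Leap' : 'Not Leap';
}
```

One thing to watch is the value that the rule is applied to. The $year returned by localtime( ) counts from 1900, so for the year 2000 it holds 100, and 100 % 400 is not zero; adding 1900 before testing avoids misclassifying years that are divisible by 400.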

Let’s put a few debug print( ) statements in the code, near the $month variable:

sub date_a_week_ago {

    my @month_len = (31, 28, 31, 30, 31, 30, 31, 31, 30, 31, 30, 31);
    my($day, $month, $year) = (localtime)[3..5];
    print "[set] month : $month\n"; # DEBUG

    for (my $j = 0; $j < 7; $j++) {

        $day--;
        if ($day == 0) {

            $month--;
            if ($month == 0) {
                $year--;
                $month = 12;
            }
            print "[loop $j] month : $month\n"; # DEBUG

            # there are 29 days in February in a leap year
            $month_len[1] =
                ($year % 400 == 0 or ($year % 4 == 0 and $year % 100))
                    ? 29 : 28;

            # set $day to be the last day of the previous month
            $day = $month_len[$month - 1];
        }
    }

    return sprintf "%02d/%02d/%04d", $month, $day, $year+1900;
}

When we run it we see:

[set] month : 9

This is supposed to be the number of the current month (10). We have spotted a bug, since the only code that sets the $month variable consists of a call to localtime( ). So did we find a bug in Perl? Let’s look at the manpage of the localtime( ) function:

panic% perldoc -f localtime

Converts a time as returned by the time function to a 9-element array with the time

analyzed for the local time zone. Typically used as follows:

# 0 1 2 3 4 5 6 7 8

($sec,$min,$hour,$mday,$mon,$year,$wday,$yday,$isdst) = localtime(time);

All array elements are numeric, and come straight out of a struct tm. In particular

this means that $mon has the range 0..11 and $wday has the range 0..6 with Sunday as

day 0. Also, $year is the number of years since 1900, that is, $year is 123 in year

2023, and not simply the last two digits of the year. If you assume it is, then you

create non-Y2K-compliant programs--and you wouldn't want to do that, would you?

[more info snipped]

This reveals that if we want to count months from 1 to 12 and not 0 to 11 we are supposed to increment the value of $month. Among other interesting facts about localtime( ), we also see an explanation of $year, which, as we’ve mentioned before, is set to the number of years since 1900.

We have found the bug in our code and learned new things about localtime( ). To correct the above code, we just increment the month after we call localtime( ):

my($day, $month, $year) = (localtime)[3..5];
$month++;

Other places where programmers often make mistakes are conditionals and loop statements. For example, will the block in this loop:

my $c = 0;
for (my $i=0; $i <= 3; $i++) {
    $c += $i;
}

be executed three or four times?

If we plant the print( ) debug statement:

my $c = 0;
for (my $i=0; $i <= 3; $i++) {
    $c += $i;
    print $i+1,"\n";
}

and execute it:

1
2
3
4

we see that it gets executed four times. We could have figured this out by inspecting the code, but what happens if instead of 3, there is a variable whose value is known only at runtime? Using debugging print( ) statements helps to determine whether to use < or <= to get the boundary condition right.

Using idiomatic Perl makes things much easier:

panic% perl -le 'my $c=0; $c += $_, print $_+1 for 0..3;'

Here you can plainly see that the loop is executed four times.

The same goes for conditional statements. For example, assuming that $a and $b are integers, what is the value of this statement?

$c = $a > $b and $a < $b ? 1 : 0;

One might think that $c is always set to zero, since:

$a > $b and $a < $b

is a false statement no matter what the values of $a and $b are. But $c is not set to zero: it’s set to 1 (a true value) if $a > $b; otherwise, it’s set to an empty string (a false value). The reason for this behavior lies in operator precedence. The operator and (AND) has lower precedence than the operator = (ASSIGN); therefore, Perl sees the statement like this:

($c = ($a > $b) ) and ( $a < $b ? 1 : 0 );

which is the same as:

if ($c = $a > $b) {
    $a < $b ? 1 : 0;
}

So the value assigned to $c is the result of the logical expression:

$a > $b

Adding some debug printing will reveal this problem. The solutions are, of course, either to use parentheses to explicitly express what we want:

$c = ($a > $b and $a < $b) ? 1 : 0;

or to use a higher-precedence AND operator:

$c = $a > $b && $a < $b ? 1 : 0;

Now $c is always set to 0 (as presumably we intended).[3]
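The difference between the two AND operators can be checked with a small runnable sketch. The subroutine names with_and and with_double_amp are hypothetical, and $x and $y are used instead of $a and $b only to keep strict mode happy:

```perl
use strict;
use warnings;

# Low-precedence "and": parsed as ($c = $x > $y) and (...).
sub with_and {
    my ($x, $y) = @_;
    my $c;
    {
        no warnings 'void';    # the right-hand side is deliberately unused
        $c = $x > $y and $x < $y ? 1 : 0;
    }
    return $c;
}

# High-precedence "&&": the whole condition feeds the ternary operator.
sub with_double_amp {
    my ($x, $y) = @_;
    my $c = $x > $y && $x < $y ? 1 : 0;
    return $c;
}

for my $pair ([2, 1], [1, 2]) {
    my ($x, $y) = @$pair;
    printf "x=%d y=%d  and: '%s'  &&: '%s'\n",
        $x, $y, with_and($x, $y), with_double_amp($x, $y);
}
```

With the low-precedence operator the result simply mirrors the comparison $x > $y, while the && version yields 0 for both pairs.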

Sometimes we need to peek into complex data structures, and trying to print them out can be tricky. That’s where Data::Dumper comes to the rescue. For example, if we create this complex data structure:

$data = {
    array => [qw(apple banana clementine damson)],
    hash  => {
        food  => "vegetables",
        drink => "juice",
    },
};

how do we print it out? Very easily:

use Data::Dumper;
print Dumper $data;

What we get is a pretty-printed $data:

$VAR1 = {
          'hash' => {
                      'food' => 'vegetables',
                      'drink' => 'juice'
                    },
          'array' => [
                       'apple',
                       'banana',
                       'clementine',
                       'damson'
                     ]
        };

Suppose while writing this example we made a mistake and wrote:

array => qw(apple banana clementine damson),

instead of:

array => [qw(apple banana clementine damson)],

When we pretty-printed the contents of $data we would immediately see our mistake:

$VAR1 = {
          'banana' => 'clementine',
          'damson' => 'hash',
          'HASH(0x80cd79c)' => undef,
          'array' => 'apple'
        };

That’s not what we want. We have spotted the bug and can easily correct it.

You can use:

print STDERR Dumper $data;

or:

warn Dumper $data;

instead of printing to STDOUT, to have all the debug messages in the error_log file. This makes it even easier to debug your code, since the real output (which should normally go to the browser) is not mixed up with the debug output when the code is executed under mod_perl.
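When dumps end up in a log, it can help to make them stable from one run to the next. The sketch below uses two of Data::Dumper’s documented configuration variables, $Data::Dumper::Sortkeys and $Data::Dumper::Indent; the helper name stable_dump is hypothetical:

```perl
use strict;
use warnings;
use Data::Dumper;

# Hypothetical helper: return a dump with hash keys sorted and a compact
# indentation style, so that two dumps can be compared line by line.
sub stable_dump {
    my $data = shift;
    local $Data::Dumper::Sortkeys = 1;
    local $Data::Dumper::Indent   = 1;
    return Dumper($data);
}

my $data = {
    array => [qw(apple banana clementine damson)],
    hash  => {
        food  => "vegetables",
        drink => "juice",
    },
};

# warn( ) sends the dump to STDERR (the error_log file under mod_perl).
warn stable_dump($data);
```

Because the settings are localized inside the helper, other code that uses Data::Dumper is not affected.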

Don’t strive for elegant, clever code. Try to develop a good coding style by writing code that is concise, yet easy to understand. It’s much easier to find bugs in concise, simple code, and such code tends to have fewer bugs.

The “one week ago” example from the previous section is not concise. There is a lot of redundancy in it, and as a result it is harder to debug than it needs to be. Here is a condensed version of the main loop:

for (0..6) {
    next if --$day;
    $year--, $month=12 unless --$month;
    $day = $month != 2
        ? $month_len[$month-1]
        : ($year % 400 == 0 or ($year % 4 == 0 and $year % 100))
            ? 29
            : 28;
}

This version may seem quite difficult to understand and even harder to maintain, but for those who are used to reading idiomatic Perl, part of this code is easier to understand.

Larry Wall, the author of Perl, is a linguist. He tried to define the syntax of Perl in a way that makes working in Perl much like working in English. So it’s a good idea to learn Perl’s coding idioms—some of them might seem odd at first, but once you get used to them, you will find it difficult to understand how you could have lived without them. We’ll present just a few of the more common Perl coding idioms here.

You should try to write code that is readable and avoids redundancy. For example, it’s better to write:

unless ($i) {...}

than:

if ($i == 0) {...}

if you want to just test for truth.

Use a concise, Perlish style:

for my $j (0..6) {...}

instead of the syntax used in some other languages:

for (my $j=0; $j<=6; $j++) {...}

It’s much simpler to write and comprehend code like this:

print "something" if $debug;

than this:

if ($debug) {
    print "something";
}

A good style that improves understanding and readability and reduces the chances of having a bug is shown below, in the form of yet another rewrite of our “one week ago” code:

for (0..6) {
    $day--;
    next if $day;

    $month--;
    unless ($month){
        $year--;
        $month=12
    }

    if($month == 2){ # February
        $day = ($year % 400 == 0 or ($year % 4 == 0 and $year % 100))
             ? 29 : 28;
    } else {
        $day = $month_len[$month-1];
    }
}

This is a happy medium between the excessively verbose style of the first version and the very obscure second version.

After debugging this obscure code for a while, we came up with a much simpler two-liner, which is much faster and easier to understand:

sub date_a_week_ago {
    my($day, $month, $year) = (localtime(time-7*24*60*60))[3..5];
    return sprintf "%02d/%02d/%04d", $month+1, $day, $year+1900;
}

Just take the current date in seconds since the epoch, as time( ) returns it, subtract a week in seconds (7 × 24 × 60 × 60),[4] and feed the result to localtime( ). Voilà: we have the date of one week ago!

Why is the last version important, when the first one works just fine? Not because of performance issues (although this last one is twice as fast as the first), but because there are more chances to have a bug in the first version than there are in the last one.
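A variation on the same idea, sketched here with the core POSIX and Time::Local modules, lets the function accept a reference time so that it can be checked against a known date. The name date_a_week_before and the optional argument are assumptions made for this example:

```perl
use strict;
use warnings;
use POSIX qw(strftime);
use Time::Local qw(timelocal);

# Hypothetical variant: return the date one week before the given epoch
# time (default: now) as MM/DD/YYYY.
sub date_a_week_before {
    my $now = @_ ? shift : time;
    return strftime("%m/%d/%Y", localtime($now - 7*24*60*60));
}

# Noon on 23 October 1999; the month is zero-based, as in localtime( ).
my $fixed = timelocal(0, 0, 12, 23, 9, 1999);
print date_a_week_before($fixed), "\n";   # prints 10/16/1999
print date_a_week_before(), "\n";         # a week before today
```

Letting strftime( ) do the formatting removes the manual adjustments for the month and year offsets, which is where the original bug came from.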

Of course, instead of inventing the date_a_week_ago( ) function and spending all this time debugging it, we could have just used a standard module from CPAN to provide the same functionality (with zero debugging time). In this case, Date::Calc comes to the rescue,[5] and we will write the code as:

use Date::Calc;
sub date_a_week_ago {
    my($year,$month,$day) =
        Date::Calc::Add_Delta_Days(Date::Calc::Today, -7);
    return sprintf "%02d/%02d/%04d", $month, $day, $year;
}

We simply use Date::Calc::Today( ), which returns a list of three values (year, month, and day), which are immediately fed into the function Date::Calc::Add_Delta_Days( ). This allows us to get the date N days from now in either direction. We use -7 to ask for a date from one week ago. Since we are relying on this standard CPAN module, there is not much to debug here; the function has no complicated logic where one can expect bugs. In contrast, our original implementation was really difficult to understand, and it was very easy to make mistakes.

We will use this example once again to stress that it’s better to use standard modules than to reinvent them.

As we saw earlier, it’s almost always possible to debug code with the help of print( ). However, it is impossible to anticipate all the possible paths of execution through a program, and difficult to know what code to suspect when trouble occurs. In addition, inline debugging code tends to add bloat and degrade the performance of an application, although most applications offer inline debugging as a compile-time option to avoid these performance hits. In any case, this information tends to be useful only to the programmer who added the print( ) statements in the first place.

Sometimes you must debug tens of thousands of lines of Perl in an application, and while you may be a very experienced Perl programmer who can understand Perl code quite well just by looking at it, no mere mortal can even begin to understand what will actually happen in such a large application until the code is running. So to begin with you just don’t know where to add your trusty print( ) statements to see what is happening inside.

The most effective way to track down a bug is often to run the program inside an interactive debugger. Most programming languages have such tools available, allowing programmers to see what is happening inside an application while it is running. The basic features of any interactive debugger allow you to:

Stop at a certain point in the code, based on a routine name or source file and line number (this point is called a break point).

Stop at a certain point in the code, based on conditions such as the value of a given variable (this is called a conditional break point).

Perform an action without stopping, based on the criteria above.

View and modify the values of variables at any time.

Provide context information such as stack traces and source views.

It takes practice to learn the most effective ways of using an interactive debugger, but the time and effort will be paid back many times in the long run.

Perl comes with an interactive debugger called perldb. Giving control of your Perl program to the interactive debugger is simply a matter of specifying the -d command-line switch. When this switch is used, Perl inserts debugging hooks into the program syntax tree, but it leaves the job of debugging to a Perl module separate from the Perl binary itself.

We will start by reviewing a few of the basic concepts and commands provided by Perl’s interactive debugger. These examples are all run from the command line, independent of mod_perl, but they will still be relevant when we work within Apache.

It might be useful to keep the perldebug manpage handy for reference while reading this section, and for future debugging sessions on your own.

The interactive debugger will attach to the current terminal and present you with a prompt just before the first program statement is executed. For example:

panic% perl -d -le 'print "mod_perl rules the world"'

Loading DB routines from perl5db.pl version 1.0402
Emacs support available.

Enter h or `h h' for help.

main::(-e:1):   print "mod_perl rules the world"
  DB<1>

The source line shown is the line that Perl is about to execute. To single step—i.e., execute one line at a time—use the next command (or just n). Each time you enter something in the debugger, you must finish by pressing the Return key. This will cause the line to be executed, after which execution will stop and the next line to be executed (if any) will be displayed:

main::(-e:1):   print "mod_perl rules the world"
  DB<1> n
mod_perl rules the world
Debugged program terminated.  Use q to quit or R to restart,
use O inhibit_exit to avoid stopping after program termination,
h q, h R or h O to get additional info.
  DB<1>

In this case, our example code is only one line long, so we have finished interacting after the first line of code is executed. Let’s try again with a slightly longer example:

my $word = 'mod_perl';
my @array = qw(rules the world);

print "$word @array\n";

Save the script in a file called domination.pl and run it with the -d switch:

panic% perl -d domination.pl

main::(domination.pl:1):      my $word = 'mod_perl';
  DB<1> n
main::(domination.pl:2):      my @array = qw(rules the world);
  DB<1>

At this point, the first line of code has been executed and the variable $word has been assigned the value mod_perl. We can check this by using the p (print) command:

main::(domination.pl:2):      my @array = qw(rules the world);
  DB<1> p $word
mod_perl

The print command is similar to Perl’s built-in print( ) function, but it adds a trailing newline and outputs to the $DB::OUT file handle, which is normally opened on the terminal from which Perl was launched. Let’s continue:

  DB<2> n
main::(domination.pl:4):      print "$word @array\n";
  DB<2> p @array
rulestheworld
  DB<3> n
mod_perl rules the world
Debugged program terminated.  Use q to quit or R to restart,
use O inhibit_exit to avoid stopping after program termination,
h q, h R or h O to get additional info.

Unfortunately, p @array printed rulestheworld and not rules the world, as we would prefer, but that’s absolutely correct. If you print an array without expanding it first into a string, it will be printed without adding the content of the $" variable (otherwise known as $LIST_SEPARATOR, if the English pragma is being used) between the elements of the array.

If you type:

print "@array";

the output will be rules the world, since the default value of the $" variable is a single space.
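The difference is easy to see outside the debugger as well. The following sketch captures what print( ) emits for a bare array and compares it with the interpolated forms. It assumes Perl 5.8 or later, because it writes to an in-memory file handle; the variable names are illustrative only.

```perl
use strict;
use warnings;

my @array = qw(rules the world);

# Capture what print() emits for a bare list. The elements are joined
# with $, (the output field separator), which is undefined by default.
my $bare = '';
{
    open my $fh, '>', \$bare or die "cannot open in-memory handle: $!";
    print {$fh} @array;
    close $fh;
}

# Interpolation joins the elements with $" (a single space by default).
my $interpolated = "@array";

# Changing $" locally changes only the interpolated form.
my $custom;
{
    local $" = ', ';
    $custom = "@array";
}

print "bare:         $bare\n";           # rulestheworld
print "interpolated: $interpolated\n";   # rules the world
print "custom:       $custom\n";         # rules, the world
```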

You should have noticed by now that there is some valuable information to the left of each executable statement:

main::(domination.pl:4):      print "$word @array\n";
  DB<2>

First is the current package name (in this case, main::). Next is the current filename and statement line number (domination.pl and 4, in this example). The number presented at the prompt is the command number, which can be used to recall commands from the session history, using the ! command followed by this number. For example, !1 would repeat the first command:

panic% perl -d -e0

main::(-e:1):   0
  DB<1> p $]
5.006001
  DB<2> !1
p $]5.006001
  DB<3>

where $] is Perl’s version number. As you can see, !1 prints the value of $], prepended by the command that was executed.

Notice that the code given to Perl to debug (with -e) was 0, i.e., a statement that does nothing. To use Perl as a calculator, and to experiment with Perl expressions, it is common to enter perl -de0, and then type in expressions and p (print) their results.

Things start to get more interesting as the code gets more interesting. In the script in Example 21-5, we’ve increased the number of source files and packages by including the standard Symbol module, along with an invocation of its gensym( ) function.
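The listing for Example 21-5 does not appear at this point in the text. Judging only from the debugger sessions that follow (a use statement on line 1, the gensym( ) call on line 3, and print( ) on line 5), test_sym.pl would look roughly like this. Treat it as a reconstruction, not the original listing:

```perl
use Symbol ();

my $sym = Symbol::gensym();

print "$sym\n";
```

Running it prints something like GLOB(0x80c7a44); the address varies from run to run.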

Now let’s debug it:

panic% perl -d test_sym.pl

main::(test_sym.pl:3):      my $sym = Symbol::gensym( );
  DB<1> n
main::(test_sym.pl:5):      print "$sym\n";
  DB<1> n
GLOB(0x80c7a44)

Note that the debugger did not stop at the first line of the file. This is because use ... is a compile-time statement, not a runtime statement. Also notice there was more work going on than the debugger revealed. That’s because the next command does not enter subroutine calls; it steps over them. To step into subroutine code, use the step command (or its abbreviated form, s):

panic% perl -d test_sym.pl

main::(test_sym.pl:3):      my $sym = Symbol::gensym( );
  DB<1> s
Symbol::gensym(/usr/lib/perl5/5.6.1/Symbol.pm:86):
86:         my $name = "GEN" . $genseq++;
  DB<1>

Notice the source line information has changed to the Symbol::gensym subroutine and the Symbol.pm file. We can carry on by hitting the Return key at each prompt, which causes the debugger to repeat the last step or next command. It won’t repeat a print command, though. The debugger will eventually return from the subroutine back to our main program:

  DB<1>
Symbol::gensym(/usr/lib/perl5/5.6.1/Symbol.pm:87):
87:         my $ref = *{$genpkg . $name};
  DB<1>
Symbol::gensym(/usr/lib/perl5/5.6.1/Symbol.pm:88):
88:         delete $$genpkg{$name};
  DB<1>
Symbol::gensym(/usr/lib/perl5/5.6.1/Symbol.pm:89):
89:         $ref;
  DB<1>
main::(test_sym.pl:5):      print "$sym\n";
  DB<1>
GLOB(0x80c7a44)

Our line-by-line debugging approach has served us well for this small program, but imagine the time it would take to step through a large application at the same pace. There are several ways to speed up a debugging session, one of which is known as setting a breakpoint.

The breakpoint command (b) is used to tell the debugger to stop at a named subroutine or at any line of any file. In this example session, at the first debugger prompt we will set a breakpoint at the Symbol::gensym subroutine, telling the debugger to stop at the first line of this routine when it is called. Rather than moving along with next or step, we give the continue command (c), which tells the debugger to execute the script without stopping until it reaches a breakpoint:

panic% perl -d test_sym.pl

main::(test_sym.pl:3):      my $sym = Symbol::gensym( );
  DB<1> b Symbol::gensym
  DB<2> c
Symbol::gensym(/usr/lib/perl5/5.6.1/Symbol.pm:86):
86:         my $name = "GEN" . $genseq++;
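The feature list earlier also mentioned conditional breakpoints and actions that run without stopping. In Perl's debugger, b accepts an optional line number and condition, and a attaches an action to a line (see the perldebug manpage). The script below is a hypothetical example to experiment with; the file name loop.pl and the line number in the comments assume the script is saved exactly as shown.

```perl
# loop.pl (hypothetical file name)
use strict;
use warnings;

my $total = 0;
for my $i (1 .. 5) {
    $total += $i * $i;     # this is line 7 of the file
}
print "total: $total\n";   # prints "total: 55"

# Commands to try after starting with: perl -d loop.pl
#   b 7 $i == 4            stop at line 7 only when $i is 4
#   a 7 print "i=$i\n"     run an action at line 7 without stopping
#   c                      continue until the next breakpoint
#   p $total               inspect a variable once stopped
```

With only the conditional breakpoint set, c runs the first three iterations without interruption and stops on the fourth.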

Now let’s imagine we are debugging a large application where Symbol::gensym might be called from any one of several places. When the subroutine breakpoint is reached, by default the debugger does not reveal where it was called from. One way to find out this information is with the stack Trace command (T):

  DB<2> T
$ = Symbol::gensym( ) called from file `test_sym.pl' line 3

In this example, the call stack is only one level deep, so only that call is printed. We’ll look at an example with a deeper stack later. The leftmost character reveals the context in which the subroutine was called. $ represents scalar context; in other examples you may see @, which represents list context, or ., which represents void context. In our case we called:

my $sym = Symbol::gensym( );

which calls Symbol::gensym( ) in scalar context.
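The same information is available to ordinary Perl code through the built-in caller( ) function, whose sixth return value reports the calling context of each frame. The sketch below prints a trace in a format loosely modeled on the T command. The subroutine names stack_trace, make_symbol, and do_work are hypothetical, and the output format is an imitation, not the debugger's own code.

```perl
use strict;
use warnings;

# Hypothetical helper: describe each caller frame using the same context
# markers as the debugger's T command ($ scalar, @ list, . void).
sub stack_trace {
    my @frames;
    my $i = 1;    # skip frame 0, the call to stack_trace() itself
    while (my @c = caller($i++)) {
        my ($file, $line, $sub, $wantarray) = @c[1, 2, 3, 5];
        my $marker = $wantarray         ? '@'
                   : defined $wantarray ? '$'
                   :                      '.';
        push @frames, sprintf "%s = %s called from file '%s' line %d",
            $marker, $sub, $file, $line;
    }
    return @frames;
}

# make_symbol() is called in list context, do_work() in scalar context.
sub make_symbol { my @trace = stack_trace(); return \@trace }
sub do_work     { my ($trace) = make_symbol(); return $trace }

my $trace = do_work();
print "$_\n" for @$trace;
```

The first line of output starts with @ (the list-context call to make_symbol) and the second with $ (the scalar-context call to do_work).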

Now let’s make our test_sym.pl example a little more complex. First, we add a Book::World1 package declaration at the top of the script, so we are no longer working in the main:: package. Next, we add a subroutine named do_work( ), which invokes the familiar Symbol::gensym, along with another function called