Chapter 4. Cultivate Human–Machine Harmony

MAYBE YOU’VE WATCHED THE viral video “I’m Poppy” on YouTube. Take a trip down that rabbit hole, and what you’ll see is as mesmerizing as it is unsettling. Whether the slight delay between Poppy’s lips and the audio, the hint of autotune in her voice, or the silvery sheen of her skin and hair, something seems off. She tells us that she’s “from the internet.” She has stilted conversations with a mannequin named Charlotte. Charlotte speaks to Poppy in a computerized voice, and Poppy answers with her own kind of “artificial intelligence.” Part of the reason, perhaps, that Poppy has piqued collective curiosity is that people aren’t quite sure what to make of her; she brings attention to the boundary shift between human and machine.

The line between humans and technology seems like it’s becoming blurrier. Social media profiles prompt us to consider how we present ourselves in new contexts. We shuffle through identities more rapidly than ever, moving from anonymous to avatar in a blink. We have real conversations with fake people, or maybe a mashup of chatbot and human—we’re no longer sure. Robots that look like people challenge our idea of personhood, relationships, and citizenship.

Technology is urgently insisting that we reconsider what it means to be human. It turns out to be much more complicated than “let humans do human things and machines do machine things.” Human and machine things are jumbled together. Although some embrace the potential for new ways of being, the low buzz of anxiety is all around us. We get a glimpse of it in news stories about the future of work and in academic studies about the uncanny valley. Warnings from Elon Musk or Stephen Hawking about the coming singularity give us pause. We see entrepreneurs scramble to cash in on easy-win human things to disrupt, like scheduling meetings or customer service conversations. Designers cast a nervous glance at AI, not sure if it’s a collaborator or replacement.

In a world without hard-and-fast rules about what is human and what isn’t, how do we design technology? Machines shape our identity; AI makes this truth more acute. And emotional well-being is nothing if not a quest toward our best selves. Technology will almost certainly influence how we feel about ourselves.

Machines will shape our relationships with other people, whether as a screen in between or something more. When it comes to emotional well-being, relationships are nearly everything. Every model of happiness, from Martin Seligman’s PERMA to the Ryff Scale of Psychological Well-Being to the OECD Better Life Index, centers on relationships. Harvard University’s 80-year Grant and Glueck Study revealed that it’s not money, career, or status that determines a good life; it’s love. Good relationships are associated with better health, greater happiness, and a longer life. Study after study shows that friends are crucial to happiness. Yes, human friends, but very likely artificial friends, too.

So, in this chapter, we look at how to cultivate harmony between humans and machines in a world that no longer has strict boundaries between them. We draw from an evolving philosophical account of the relationship between humans and technology. We also look to the psychology of personality and relationships for ways to humanize technology without dehumanizing humans.

Understanding the New Humans

As designers, researchers, and developers, we are keenly concerned with designing for people. Empathy is the essential underpinning of our practice. So, we cultivate it. We observe people using technology. We challenge ourselves to experience the world in different ways through website prompts (Figure 4-1), workshop exercises, simulations, and maybe even virtual reality (VR). Hopefully, we are hiring diverse teams and building an inclusive process. More and more, we try to divine some understanding from data. Maybe we’ve even trained AI on social media data using IBM Watson’s Personality Insights.

Figure 4-1. Empathy prompts nudge us toward human understanding (source: empathyprompts.net)

Then, we create stories from our research called personas, characters that we can call to mind and that guide our work. We conjure a kind of design muse to remind us of the real people out there somewhere. It’s a challenge to understand people in aggregate or as individuals. Who exactly are we designing for?

USERS VERSUS HUMANS

Well, we design for users, of course. A quaint term from the days when people sat down in front of a machine for a set amount of time. An old-fashioned term for when there was a divide between going online and real life. A limited term circumscribing people by their use of technology. There’s life, and then there’s this moment when someone is interacting with our thing. And then, for that flicker of an instant, a person becomes a user.

A user is just one small version of ourselves at a moment in time. How do we understand users? We do our best to understand our fellow humans in these moments, usually through a few different lenses. Let’s examine these a bit closer:

- Broad characteristics

- Whether or not we like to admit it, the starting point is often broad strokes. The business team notes that even though we know our core users are men aged 50 years and older, we are aiming to bring on millennial men and women. The marketing team tells us that customers love Instagram; they are very visual and super tech-savvy. The millennials on the tech team might then be asked to step into the role of instant expert, speaking for an entire generation. A developer or designer chimes in that teens don’t care about privacy. Packed into demographics or other technographic or even behavioral characteristics are assumptions upon assumptions that are at best reductive and at worst harmful.

- Real people in aggregate

- Another way to understand people is to put data from many people together into a kind of puree. We might find that 50% go down a certain path or that 35% of people who purchase one product will purchase another. Or, we might find that our most profitable customers rank highly for conscientiousness on the OCEAN model of personality. That might help us make design decisions. Even though extrapolating individual truth from broad trends in analytics data is dangerously reductionist, we do it all the time. We fall back on averages even though they flatten out experience.

- One person

- Sometimes, the experience is framed around one person to focus the design discussions. It might be based on a real person we have in our head—maybe a friend or a person we encountered during research. It might be someone we imagine, an ideal customer. It might be someone sketched out in a persona, a blend of imagination and data points. I can easily imagine design teams referencing a character from a VR experience in the not-so-distant future. The user springs to life in a new way, limited by the people the team can conjure up.

- Data-double

- When we track data for an individual, usually to personalize the experience in some way, our view is trained on a data-double—a mix of past actions, reported demographics, and stated preferences that comes together as a portrait of a person. Sometimes, we get a glimpse of this imperfect replica in the ads served up or social media feeds, but mostly it’s hidden. Often, it’s how potential partners, employers, benefits officers, and many others get to know us first. Even though we might be loath to admit it, this data-double haunts our design process.

- Social performance

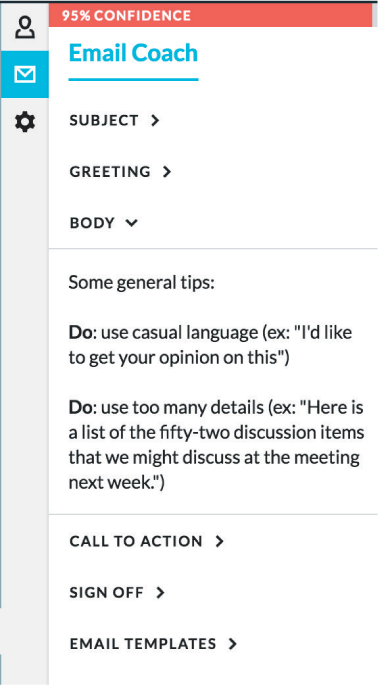

- We are constantly role-swapping across all channels. Whether we are sampling a slice of life in a qualitative interview or capturing tone of voice in email over time, much of what we gather is a performance of some kind. Maybe we know this in the back of our minds. People are guarded; they aren’t always genuine. Sometimes, we are confronted with it through data. If you look at your contacts using Crystal Knows, a virtual email coach, you’ll see that almost everyone is an “optimist” or a “motivator” if personality is based only on the tone in their emails (Figure 4-2).

Figure 4-2. If we are all so positive on email, why aren’t we happier? (source: Crystal Knows)

Lately, I’ve been trying out different research methods to learn more about this complexity. Usually, it involves exercises designed for self-reflection—personality quizzes, writing prompts, and paired activities. Then, we attempt to unearth who we are in all kinds of online contexts. The idea is to understand the difference and potential points of disconnect between identities. Here are just a few activities to try:

- Unboxing yourself

- Every day, we get clues about how big companies and tech platforms frame who we are, but we rarely tune in. In this activity, each person looks at three different memory systems. First, note down predictive text suggestions on your smartphone. Second, list out 5 to 10 ads or types of ads that you see across more than one website. Third, look at reminiscence databases, like Facebook Memories or Timehop. Based on what you find, select only five items (we have a big selection of miniatures and LEGOs) that represent this version of you and place them in a box. Now try designing personalization based on knowing just those items.

- Browser history day in the life

- This activity can get a little too personal, so I begin with some example browser histories. Often people will volunteer to contribute. Everyone gets a browser history as a prompt to construct a day in the life for that individual, illuminated with a detailed description. If we are bravely using our own browser histories, it’s pretty easy to reconstruct a day. You’ll see where you’ve been researching a thorny issue for a project, where you were goofing off, cringe-worthy moments during which you clicked something you wish you hadn’t. Use this as an input for personas.

Glimmers of who we are according to the platforms we use are already evident in our daily life. Some of it we craft for ourselves, by adopting different identities in different contexts. So long as we are aware of exactly what we reference when we talk about users, all of these views might have some value as we design. It would be short-sighted not to acknowledge the limitations, though. Identity is shaped by technology in ways that go beyond a consumer in the moment of interacting or an ad target.

HUMANS, ON A SPECTRUM

Humans are forever in a state of becoming. This truth is at the core of the research on well-being. Why else would we try to feel more and do better? Psychologist Dan Gilbert notes that “Human beings are works in progress that mistakenly think they are finished.” Sometimes, when we design technology, we forget this, though. The goal is to define and narrow, which leaves us at a loss as to how to account for something as fluid as identity.

Humans are more than archetypes and data points. We do have some ways to account for human complexity, however. Ethnographic interviews and empathy exercises strive to render that fullness, but that work can get lost in translation. A glimpse of how humans and technology are coevolving ends up reduced to touchpoints.

Another way to humanize the humans is to acknowledge that we relate to technology in a range of different ways that shape how we see ourselves and interpret the world. Marshall McLuhan argued that all technology extends our body or mind in some way. For instance, cars extend our physical range so that we can travel farther. Cars also extend our emotional range, by exposing us to more of the world. Good to remember, difficult to model.

For those of us tasked with sorting out these relationships, it helps to get specific about the variations. The work of philosopher Don Ihde, who builds on Martin Heidegger’s notion of readiness-to-hand, is a good starting point. In Technology and the Lifeworld (Indiana University Press, 1990), Ihde models four ways that technology mediates between people and the world:

- Embodiment

- When technology merges to some degree with the human, but withdraws as soon as it’s removed. A hammer, for example, becomes a part of our hand but only while we use it.

- Hermeneutic

- When technology represents an aspect of the world, like a thermometer that allows us to read the temperature.

- Background

- When technology shapes an experience that we are not consciously aware of, like central heating or a refrigerator.

- Alterity

- When technology is a separate presence, which includes anything from an ATM to a Furby to a RIBA healthcare robot.

More recently, philosopher Peter-Paul Verbeek, in his work What Things Do: Philosophical Reflections on Technology, Agency, and Design (Penn State University Press, 2005), identified two more potential ways that technology mediates experience:

- Cyborg

- When technologies merge with a person to form a single, cohesive hybrid. Think biohacks or implantables.

- Composite

- When technology not only represents reality but constructs it as well. From radio telescopes to sentiment-based data visualizations, this kind of meaning-making relationship depicts a new view of reality.

We already look at people through different lenses in research, through demographics or data points or stories. If we use the work of Ihde and Verbeek as a model, those profiles might include the following aspects of selfhood:

- Hybrid

- With technologies like cochlear implants that enable deaf people to hear again or neuro-implants for deep-brain stimulation, body and technology can be intimately connected. Human and technology approach the world as a combined force. In a hybrid relationship, you might not be aware of the relationship most of the time.

- Augmented

- Technology that adds a second layer to the world, anything from Google Glass (Figure 4-3) to Twilio, or even GPS, is augmentation. We are aware of that layer, but it can feel like an extension of our mind or body. The technology augments an ability we already have or fills in gaps. When we talk about technology giving us superpowers, we are often addressing an augmented self.

Figure 4-3. The place between hybrid and augmentation is often awkward (source: Google Glass)

- Extended

- The extended self exists between augmentation and imagination. Rather than just a layer that offers a new view or new capabilities, here our conception of self becomes bigger. Our identity expands. Russell Belk coined the term to reflect the relationship people have with their possessions.1 Sometimes, this happens through ownership, as in, “I’m an Apple person.” Sometimes, an extended self comes to life through use. You might come to think of yourself as a blogger, or even more narrowly as an Instagrammer.

- Imagined

- From an avatar that we consciously call into existence for a game to a curated identity for social media, this is an identity that we consciously craft. The imagined self can be something that is fashioned intentionally or that develops over time. The focus can be oriented toward other people or purely for yourself. You might think of the imagined self as a subset of the extended self. The optimized self exists in this space, too. Part aspirational, part motivational, this version shifts depending on what’s being tracked. It’s an idealized conception of selfhood, based on quantification.

- Immersed

- Smart environments perceive a version of our selves. When technology is fused with the world outside of us, it still needs to make sense of us. In the immersion relation, we are represented by the data doppelgänger technology detects. Whether a smartwatch reading our stress levels or a website remembering what we shopped for last summer, the world bends toward this version of our selves.

Humans and technology are irrevocably mixed together. Let’s think of this relationship on a spectrum. At one end of the spectrum, technology merges with our self; tech and human are a hybrid. Brain interfaces, but also implants and wearable medical devices, might qualify. At the opposite end of the spectrum is an immersed relationship in which technology merges with the world. From sensors in streetlamps to facial recognition built into security systems, we experience it as clearly separate from ourselves.

The middle is murky. Technology can extend capabilities in all kinds of ways, sometimes fading into the background and sometimes not. Often, we flicker between states. You might feel like your phone is part of yourself, a representation of others as voices in your head, or a best friend who is there when you need it.

Technology frames our identity in ways that persist long after we click, tap, or talk. We’ve internalized it. So much so that it’s difficult to say where technology ends and humans begin. At the same time, we cultivate relationships with technology that are externalized, too.

NEW HUMAN–TECH RELATIONSHIPS

When we think about our relationship with technology, we don’t think of ourselves on a spectrum where we are part human, part tech. Most of us prefer to think that humans are separate. Paradoxically, this might explain the appeal of humanized robots. It seems like we are happiest when the boundaries are clearly drawn. When a robot looks too much like a human, it’s creepy. When it’s not clearly separated from us, it’s confusing.

As uncomfortable as this boundary shifting might be, we should begin to embrace the mess. I suspect it’s the path to better human-tech relationships going forward. Here are a few of the ways it can take shape.

- Technology as stand-in

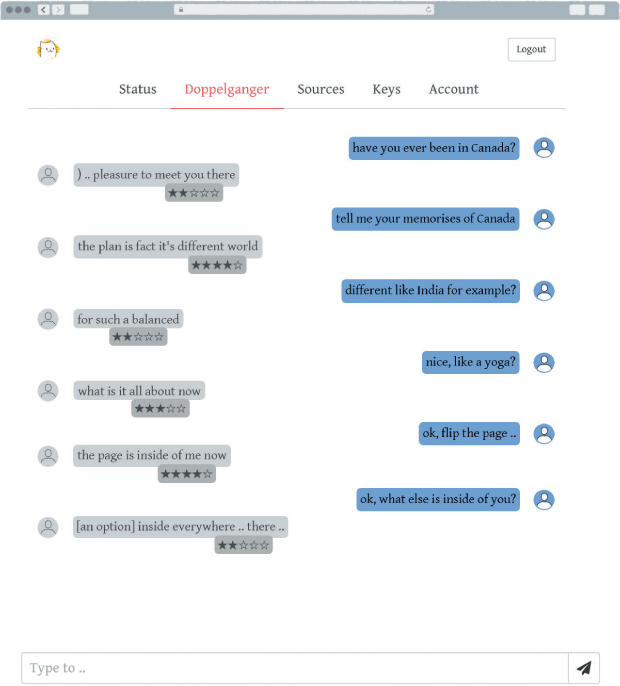

- Telepresence robots and gaming avatars might take your place from time to time. It’s about to get more sophisticated, though. When Eugenia Kuyda lost her friend Roman Mazurenko to a tragic accident, she created a memorial bot. Fashioned from text messages, the AI was enough of a likeness to help friends and family have a last conversation.2 The result was an app, Replika, that you can use to create a double of yourself, or a very customized friend. UploadMe goes further, creating a second self from your social media and interactions (Figure 4-4). Technology that functions as another you, whether placeholder or fictional character or realistic replica, is a distinct possibility.

Figure 4-4. Your double isn’t quite ready to stand in (source: uploadme.ai)

- Technology as assistant

- Technology that functions as a personal assistant or doubles as an employee fills this subordinate role. You are in charge, and the bot has agency in a narrow frame. X.ai’s chatbots schedule meetings, Pana arranges business travel, Mezi shops for you. Google Assistant is billed as “your own personal Google, always ready to help.” On a sliding scale of personality, many of these bots keep personality to a minimum, mostly because they exist to smooth over tedious tasks or difficult situations. The tech assistant is part you and part not-you, its role limited by particular tasks.

- Technology as collaborator

- In this relationship, human and machine work together. Jazz musician Dan Tepfer made headlines recently by playing alongside algorithms at a Yamaha Disklavier. “I play something on the piano, it goes into the computer, it immediately sends back information to the piano to play.... Now the piano is actually creating the composition with me.”3 The same trend is happening in the visual arts and poetry. Whether the human is training the AI or engaging in a more flexible give and take, human and tech are co-creators. It’s not a stretch to imagine this kind of collaborative relationship in many fields, from medicine to design. The tech collaborator is more colleague than personal assistant, but it’s a role bound by a set of tasks or a specialized field.

- Technology as friend (or foe)

- No shortage of robot companions awaits us, from the child-sized, Pixar-inspired Kuri, to the intimidating Spot Mini from Boston Dynamics, to Softbank’s family of robots (Figure 4-5). Whether a soft robot seal, like PARO, or a childlike presence, like the robot assistant Jibo, machine-friends seem to sense our thoughts or emotions. Their capabilities are less tightly constrained to a small or specific set of tasks. The machine-friend feels less like you or an extension of you, and more like a friend or family member. As with friends or family members, these relationships can go wrong. If our machine-friends don’t get us, it might feel like a betrayal. At the same time, we might be a little more forgiving of weaknesses, just as we are with human friends.

Figure 4-5. Friends can be a little strange; usually the best ones are (source: Softbank)

- Technology as lover

- In the movie Her, a lonely guy very plausibly falls in love with his operating system. Plausible because it’s already happened. In 2006, psychologist Robert Epstein had a months-long email affair with Svetlana, who turned out to be a bot.4 Research backs up the notion that we could feel a sense of intimacy with a bot. In The Media Equation: How People Treat Computers, Television, and New Media Like Real People and Places (Cambridge University Press, 1996), Byron Reeves and Clifford Nass describe a wide range of studies that show how people react to computers as social actors. As soon as bots begin to recognize emotional cues and respond with empathy, it’s inevitable that we’ll engage with them differently and maybe even begin to fall for them.

Robots might not love us but with so many different kinds of love—from romantic to obsessive, from friendship to transcendent—perhaps it will just become another variation on a theme. No matter your comfort level with this type of human–machine relationship, it certainly is worthy of our consideration as we design technology that will fill in these roles.

All of these types of relationships can support well-being. There won’t be one type of “right” relationship with machines, just like there’s not one right relationship with fellow humans. Relationships might be narrowly defined to a task or richly intimate, or something in between. And some will go wrong, and we’ll need to know how to spot the warning signs of a relationship gone off the rails.

THE TROUBLE WITH ARTIFICIAL FRIENDS

As we conflate human and machine, confusion arises. One pitfall is the emotional uncanny valley: bots that overstep boundaries. Since the 1970s, we’ve used robotics professor Masahiro Mori’s concept of the uncanny valley to explain why robots that look too realistic make humans uncomfortable. I believe we’ll begin to see an emotional uncanny valley, a new kind of unease as bot personalities begin to feel too human. In an experiment by Jan-Philipp Stein and Peter Ohler, people were exposed to virtual reality characters making small talk. When people thought that the characters were not voiced by humans, they became deeply uncomfortable.5 When bots speak or act too much like a human, it creates the same unease we feel when bots look too human.

And then there are stereotypes. As a society, we might have been conditioned to expect cheerful female voices in helpful roles. Most assistant apps, including Alexa, Cortana, and Siri (and likely your car’s GPS), are female by default. Not only are we perpetuating stereotypes, but we also may be conditioning the next generation to be rude or demanding toward women. Sara Wachter-Boettcher, in her book Technically Wrong: Sexist Apps, Biased Algorithms, and Other Threats of Toxic Tech (W. W. Norton & Company, 2017), has chronicled the bias baked into the design of chatbot personalities, tracing some of the problem back to the composition of design teams. Some of this bias might be due to the pressure on teams to make successful products. Marketing teams might insist on “female” bots because people seem more apt to interact. Inadvertent or not, perpetuating and profiting from stereotypes will not cultivate harmony among humans and machines.

Another complication with creating technology to be a friend is transparency. How do we know when we are speaking to a human? Most companies aim for chatbots to seem as human as possible. For instance, Amy and Andrew Ingram, the meeting scheduling bots of X.ai, are scripted for empathy. Xiaoice, Microsoft’s natural-language chatbot for the Weibo community, seems so real that people fool themselves into thinking it just might be.

Other bots are actually humans masked by the bot. Facebook’s M assistant was only partly bot, with humans filling in the gaps. Pana, the travel assistant, connects with a human after just a few interactions. Invisible Girlfriend, at first, obscured the human behind the chatbot interface (now the company provides conversations “powered by creative writers” but still encourages people to “build your girlfriend”). A faked bot impersonating a faked girlfriend (or boyfriend)—no wonder we have trouble sorting it out.

Perhaps at some point blurring the lines between human and machine might become irrelevant, but we aren’t at that point yet. So, we spend our days wondering who to trust. As poet and programmer Brian Christian writes, “We go through digital life, in the twenty-first century, with our guards up. All communication is a Turing test. All communication is suspect.”6 Given that we conflate human and machine in a variety of contexts, from online characters to chatbots, how do we strike a meaningful balance?

Let Machines Be Machines

From a vaguely feel-good affirmation of values, to making tech warm and fuzzy, to giving it a fully formed personality or a life-like body, humanizing technology is a concept that’s open to interpretation. Usually, it means designing tech with human characteristics.

Whether physical appearance or personality, an element of humanity builds trust. Humanized robots might partly be a vanity project, too. We’re creating them, after all, so why not in our likeness? Even if it doesn’t make much sense based on what the machine will do, our impulse is to humanize.

All the same, we like it when bots showing the barest hint of humanity fail. As much as we might enjoy the Roomba vacuuming up after our pets, there’s a certain charm when it gets stuck. Tutorials on how to make Siri curse like a sailor abound, even though you probably don’t need them. The little thrill we get when a chatbot is clueless or a robot slips on a banana peel (Figure 4-6) might be related to the pratfall effect, or the tendency to feel more affection toward a person who makes a mistake. But it’s a bit different; there’s a bit of schadenfreude in the mix. And maybe a bit of fear, too. We are drawn to humanized machines, but we don’t want them to be too human.

Figure 4-6. When robots fail, we might feel sympathy, but it’s tinged with glee (source: Boston Dynamics)

So, here we are torn between wanting our robots to be a bit more human and fearing them when they do seem more human. When do we choose to add a human touch? And how much is the right amount?

WHEN LESS HUMAN IS MORE HUMANE

Less is more when it comes to the far ends of the human–machine spectrum. Technology embedded in our world or merged with our body doesn’t need a personality. It might be funny to think of your cochlear implant whispering in your ear, but the day-to-day reality would be nightmarish. Embedded sidewalk sensors don’t need to be brilliant conversationalists, or any kind of conversationalist at all. Not only would that become annoying, but it would likely be a dangerous distraction as well. Whether technology merges with us or merges with the environment, it doesn’t require much of a human touch. Here, machines can be machines.

A chip implant is clearly merged with us. A city streetlight is clearly merged with the world. It’s not always so neatly differentiated. Might household objects, like your refrigerator or your thermostat, benefit from a little personality? Do wearables such as smartwatches and sensor-laden clothing always need to be so discreet? When a product is newly introduced, the lines are often unclear.

WHEN YOUR ROBOT BECOMES A DISHWASHER

Think for a minute about dishwashers. People dream of robots that will do household chores—I know I do—and there’s no shortage of takes on the dishwasher. Robot arms that can load the dishwasher, chatbots that can activate an extra rinse cycle, even a sex robot that also turns on (so to speak) your dishwasher are all possibilities.

We invent robot vacuum cleaners, robot lawnmowers, robot cat litter boxes, but then at some point these robots stop being robots. When these machines become mundane, we no longer add the word “robot.” As roboticist Leila Takayama points out in her 2018 TED talk, “What’s It Like To Be a Robot?”, we simply call it a dishwasher. And if it loses the “robot,” that’s a clue that it doesn’t need to have its own carefully crafted personality.

Although we can’t always predict when, or if, a robot dishwasher will become a dishwasher, there are some signals to observe. If you can answer yes to the following, your robot might be on its way to becoming a regular household object.

Does the technology automate a background task? A dishwasher runs while you do other things. Maybe when you get a new dishwasher, you are temporarily obsessed with the new machine, but the enthusiasm wanes. Soon enough, the dishwasher recedes into the background.

Does it replace a repetitious task? Washing dishes is a task that needs to be repeated every day. For most people, it’s not a source of joy, at least not most of the time. Unless it’s a holiday gathering, washing dishes is not a special occasion. However, repetition can veer into meaningful ritual, with its own set of special practices.

Does it replace something that is low affect? This one is trickier. Even though there might be people who have strong feelings about washing and drying dishes, for many people, emotions around washing dishes are low-intensity or low-complexity, at least most of the time. And if you create a loveable dishwashing robot, those emotions might take hold.

As simple as this model seems, it doesn’t always apply. Your mundane task might be my joy. You might long for a leaf-raking robot, while I’d miss the crisp fall air, the colorful leaves, the meditative quality, the interruptions to dive into a pile of leaves once in a while, and the sense of accomplishment. Leaf raking is not an everyday task, so it’s less likely to become burdensome. If I had a leaf-raking robot, maybe I’d prefer it to work with me side by side. But leaf raking also leads us to other questions that we can consider when we think about humanizing tech.

When might it be unlikely that your robot will become a household or other type of everyday object? Obviously, we can start with the opposite of the three previous questions. If it’s not automating a background or repetitive task, it might not become a mundane tech product running in the background. If emotions are intense, complex, and conflicted, it might never become a dishwasher. A few other telltale signs can guide us:

Is it a rich experience? An experience that is not only emotionally complex, but sensorially rich as well, might mean that it will never cross over into mundane territory for some people. My experience of fall leaves is rich; for other people it’s not.

Is it tied to a human sense of identity? Perhaps you fancy yourself a leaf lover? Your love of fall is an important part of who you are? Maybe it’s guided your decision to live in a place where you can experience it?

Does it mediate social connection? Joining a group of leaf peepers or spending time with the neighborhood kids leaf jumping might mean that rakes, leaf blowers, tarps, and all the trappings might not merge into the background for some small and maybe strange subset of people.

Of course, for these kinds of experiences you might not be interested in adding a tech layer at all. Or you might sometimes want the full emotional experience and sometimes not. Sometimes, you just need to get the leaves raked in time for the first snowfall, after all.

When we think about how far to go with humanizing a machine, there probably will never be a clear answer. But we can think about the humble dishwasher. Your robot might already be a dishwasher, or it might become a dishwasher. When that happens, personality takes a backseat.

Plotting the relationship on the human–machine spectrum, we can avoid the temptation to give everything—from your internet-connected coffee pot to your medical tattoo—a brand-lovable personality. If you are designing a travel avatar or a chatbot therapist or a robot companion for the elderly, perhaps you still feel you must develop a personality. Or, perhaps you are getting pressure to make your brand friendly so that people will spend more time or money with your organization. Then go with a light touch.

Humanize Machines, Just a Little Bit

Our relationships with people gradually emerge over time. A good relationship with technology uses the same approach. We don’t share every detail of our lives with every person in our lives. In fact, a little mystery is often better. Yet, in our tech relationships it can feel like each actor comes at us fully formed. Whatever the technology, it’s a safe bet that it knows things about us that even our partners don’t. Engaging with us as a full-fledged personality takes it even further.

So, a light touch means sketching in as few details as possible. This way it lets the humans fill in some of the details. They will anyway, after all. I think of Lev Tolstoy’s manner of using one detail, like Anna Karenina’s plump hands, to characterize without being too heavy-handed. Or Anton Chekhov’s sisters in The Cherry Orchard who emerge gradually through dialogue. You don’t need to have studied Russian literature to understand gradually getting to know someone, but perhaps it is something of a lost art with all of us in such a rush to connect.

A light touch, besides supporting humans in being human and living good lives, will end up being better for business, too. People tend to ally themselves with brands that speak to their values and resonate emotionally. And they tend to choose brands that they trust. More and more, this will mean gradually growing a relationship that recognizes who they are, in a way they are comfortable with, over time.

HOW OBJECTS BECOME HUMANLY

Humans readily attribute personality to all kinds of living and nonliving things. Maybe it means you interpret a meow as an accusation, or you speak to your plants, or you even narrate the thoughts you attribute to your car. The drive to anthropomorphize is strong, even if we don’t build a personality. What prompts people toward mind attribution? Let’s explore that:

- A face, or even eyes alone

- Humans are hardwired to see faces everywhere. Our ability to read faces is crucial in helping us distinguish between friend or foe. This ability sometimes extends to nonhumans. The mere presence of eyes cues us to believe that we are being watched, much as a poster with eyes can make us feel watched. If an object has eyes, we attribute a mind to it.

- An unpredictable action or movement

- We assign a mind to nonhumans when we need to explain behavior that we don’t understand. If your computer freezes up or your light switch goes wonky, you might be tempted to describe it as devious or capricious. Clocky, an alarm clock with a hint of a face that rolls around the room if you press snooze, is an example of a product that seems designed to be anthropomorphized (Figure 4-7). Nicholas Epley describes a study in which some participants were told that Clocky would predictably run away, while others were told that it was unpredictable.7 Those who believed it was unpredictable were more apt to attribute some kind of mind to the device. Humans are unpredictable, and if an object is unpredictable it seems human, too.

Figure 4-7. So unpredictable that it seems human (source: Clocky)

- A voice

- People are voice-activated in a way. When we encounter technologies with a voice, we respond as we would to another person. J. Walter Thompson Innovation Group London and Mindshare Futures published a study in which 100 consumers were given Amazon Echos for the first time. The research found that people develop an emotional attachment. As time goes on, the attachment becomes more acute: “Over a third of regular voice technology users say they love their voice assistant so much they wish it were a real person.”8 As we hear machines talk, we respond in a social way, even mirroring the emotional tenor. Voice is not just a shorthand for human; it creates an emotional connection.

- A mammal

- Species that seem closer to humans receive more governmental protections. People are more likely to donate to causes that benefit animals rather than plants, vertebrates rather than invertebrates. The closer the species is to human, the more readily we attribute a mind. When machines look like seals, or puppies, or humans, we are more likely to assign them thoughts, feelings, and agency.

- Things we love

- We are much more likely to anthropomorphize things we love. The more we like our car, the more likely we are to assign it a personality. This same impulse can lead musicians to consider their instrument a friend. It’s why we narrate our pets. And the lonelier we are, the more likely we are to anthropomorphize the objects in our lives. Given that we are facing a loneliness epidemic for certain populations, it seems natural that many people will anthropomorphize technology no matter what.

- A backstory

- A story fills in the gaps and makes us believe in personhood. Robot Ethics 2.0 describes an experiment by Kate Darling, Palash Nandy, and Cynthia Breazeal in which they created backstories for some Hexbugs, a tiny bug-like robot, and not others. Then, they asked people to strike them with a mallet. The bugs with a backstory prompted hesitation, even when people knew the stories to be fiction.9

It might sound extreme, but we experience this every day. Compare how you react to a comment from someone you don’t know with how you react to one from someone you know very well. Even when your friend’s comment is ripped out of context, you know the backstory and are likely to be more empathetic.

Humans do the work of characterization with little prompting, but how to proceed when tasked with creating a bot? In the next few sections, let’s go step by step.

CHOOSING A MEANINGFUL METAPHOR

In college, I was a server at a little Italian restaurant. It’s all kind of a blur really, except for a few shifts. One of the most memorable was when my favorite high school literature teacher walked in and sat in my section. I freaked out and hid until someone else could cover the table for me. He was out of context, and so was I. He was a cool teacher and I was (in my mind, at least) a star student, not casual diner and clumsy server. Maybe you had this same feeling when you first started using Facebook. Close friends, an acquaintance from a recent party, coworkers, exes, family members all jumbled up together. It just didn’t seem right.

If we must design a personality, we face the first conundrum. Do we choose one role? Or do we let the personality evolve? I think we can find our answer if we look to how much context shifts. Is your technology going to be relevant primarily in one context? Or will it drift to others? The more likely it is to stay put, the better a metaphor might work as a starting point.

Beginning with a metaphor sets expectations for the relationship. Metaphors can be broad: mother, best friend, coworker, pet. It might be better to get more specific, though. Teacher, therapist, accountant, security guard each give people a framework that’s familiar. A relationship helps designers to define behavior, create a communication style, even prioritize features. Better still, it can also help evolve the product personality going forward.

A metaphor helps us to consider the following:

What kind of role does it play in the short and long term?

What is the communication style?

What will it know?

How will it behave?

What details make it cohesive?

Where is there room to grow?

How might it change over time?

The metaphor can guide the relationship, similar to how we might use a persona. The metaphor can be a way to explore what could go wrong, too. A poorly behaved dog, an arrogant boss, an overbearing friend tease out touchy subjects and implicit bias. Even so, a relationship metaphor is still not very specific. Is it enough? If we rely on only a metaphor, it may just veer into caricature.

How might we develop strong bots, loosely characterized? The next step would be to look at its personality.

ADDING JUST A FEW PERSONALITY TRAITS

Personality is a kind of complex, adaptive system itself. Characteristic patterns of thinking, feeling, and believing come together as a whole to shape our personality. The latest thinking on personality recognizes that some traits persist, but many more evolve. When designing a personality is appropriate, then the next step is to assign a few characteristic traits with an eye toward evolving.

The core framework psychologists rely on today is the OCEAN model. Developed by several groups of academic researchers, independent of one another, and refined over time, it helps psychologists understand personality. Also called the Five Factors, this model is thought to have relevance across cultures. Each dimension exists on a spectrum, lending more nuance.

Here are the Five Factors:

- Openness

- On one end of openness are curiosity, imagination, and inventiveness, and on the opposite end are consistency and cautiousness. Score high on openness, and you might be unpredictable or unfocused; score low, and you might seem dogmatic.

- Conscientiousness

- Very conscientious means you are efficient and organized. If you are easy-going and flexible, you are probably less so. Too much conscientiousness can veer into stubborn behavior; too little, and you might seem unreliable.

- Extraversion

- This is sociable and passionate on the one end of the spectrum, and reserved on the other end. Attention-seeking and dominant are the pitfalls on one extreme; aloof and disconnected on the other.

- Agreeableness

- Agreeableness is the tendency to be helpful, supportive, and compassionate. A little too agreeable, and you can seem naïve or submissive; less agreeable means that you might be argumentative or too competitive.

- Neuroticism

- Are you more sensitive or secure? High neuroticism is characterized as the tendency to experience negative emotions like anger and anxiety too easily. Low neuroticism is seen as more emotionally even-keeled.

OCEAN is already in use in bot character guides, I’m sure. Facebook reactions roughly map to OCEAN. Cambridge Analytica (in)famously used it in the 2016 elections. But there’s no shortage of additional frameworks.

We all know, and maybe fervently declare or decry, a Myers-Briggs personality type. Although Myers-Briggs is based on thoughts and emotions, the Keirsey Temperament Sorter assesses behavior types. The DISC assessment is another dimensional model widely used in organizations, marking dominance, inducement, submission, and compliance. 16PF is used in education and career counseling. The Insights Discovery Wheel, based on Jungian archetypes, is favored in marketing and branding circles.

No personality model explains everything about human personality. The ubiquity of workplace assessments and social media quizzes no doubt undermines their credibility. The power of personality frameworks to predict behavior is vastly overstated. The result often veers toward pigeonholing people. As a shorthand for human complexity, personality frameworks are unquestionably unsatisfactory.

But even if personality frameworks aren’t perfect, our infatuation with them shows that they fill a gap. They prompt insights into our own personalities and our relationships with others. They show that we view the world differently from others. A personality framework is better as a starting point for self-discovery than a finish line. Likewise for bots.

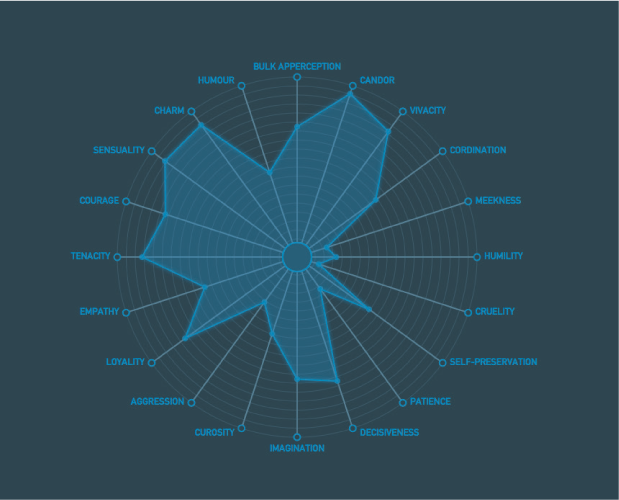

It’s tempting to obsessively map out every aspect of a chatbot personality Westworld-style (Figure 4-8). But it might just be better for our relationships if we scale back a bit. Rather than being too prescriptive, a few characteristics might be more evocative. Because we know that people will ascribe personalities to objects in the world, it leaves that space open. People want to be able to grow and change, so the same goes for our robots.

Figure 4-8. Exponential personality dimensions, Westworld-style (source: silentrob @GitHub)

Perhaps we should let people select personalities? Personality is probably how people will choose whether they want Jibo, Kuri, or Pepper anyway. The differences in the underlying technology, and even how each one looks, are minimal. Then, how far do we take it? In the game The Sims, players get 25 personality points to spend in five categories. Maybe giving people some agency is appropriate in certain circumstances, especially when the relationship is more imaginary than concrete.
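For readers who prototype, the points budget described above can be sketched in a few lines of Python. This is only an illustration under stated assumptions: the trait names borrow the Five Factors as hypothetical labels (the game uses its own categories), the per-trait cap of 10 is assumed, and `allocate` and `remaining` are invented names. Allowing people to leave points unspent is one way to keep the touch light and leave room to grow.

```python
# Hypothetical labels: the Five Factors stand in for the game's own categories.
TRAITS = (
    "openness",
    "conscientiousness",
    "extraversion",
    "agreeableness",
    "neuroticism",
)
BUDGET = 25          # total points a person may spend, as described in the text
MAX_PER_TRAIT = 10   # assumption: a cap so that no single trait dominates


def allocate(points: dict[str, int]) -> dict[str, int]:
    """Validate a chosen allocation; traits left unspecified default to 0."""
    unknown = set(points) - set(TRAITS)
    if unknown:
        raise ValueError(f"unknown traits: {sorted(unknown)}")
    profile = {trait: points.get(trait, 0) for trait in TRAITS}
    for trait, value in profile.items():
        if not 0 <= value <= MAX_PER_TRAIT:
            raise ValueError(f"{trait} must be between 0 and {MAX_PER_TRAIT}")
    spent = sum(profile.values())
    if spent > BUDGET:
        raise ValueError(f"spent {spent} points, but the budget is {BUDGET}")
    return profile


def remaining(profile: dict[str, int]) -> int:
    """Points left unspent: the space left open for the person to fill in."""
    return BUDGET - sum(profile.values())


# Sketch in only two traits and leave the rest blank.
light_touch = allocate({"agreeableness": 7, "openness": 5})
print(light_touch, remaining(light_touch))
```

Rejecting an overspent or out-of-range allocation, rather than silently clipping it, keeps the choice in the person's hands.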

EVOLVING OVER TIME

Even though I admit to scurrying away at seeing a teacher out of context, I don’t want to be seen one-dimensionally either. We assign ourselves personality characteristics—compassionate, good at math, introverted—but we also resist those categories. It’s more than just pushing back on a stereotype, too. We grow and change over time. When it comes to designing technology, it helps to think of how that role might evolve.

A singular model sticks to one role and circumscribed personality traits. Imagine a health bot that is like a personal trainer. It always plays a motivational role. It might have a personality, but it remains fairly consistent and predictable. Even as it gets to know more about you, it still fits into that same place in your life within the same range of behaviors. As with a real-life health coach or personal trainer, your relationship is bounded by certain context and conventions.

An adaptive model shifts based on context. Rather than playing one role, a health bot might have a core personality that’s like a coach but the tone might be more nurturing or tougher depending on the circumstances. As with a human coach, you may appreciate other aspects of its wit and wisdom. Perhaps it helps you set up or keep health appointments, more like an assistant or family member at times. Over time, maybe the coach becomes a friend.

Let’s think about your car, for example. The car is in that murky middle where it is partly an extension of yourself and partly a separate entity, so a personality could work. The relationship evolves over time, as does the personality of the car. It recognizes each family member and gets to know each. If we were to truly create a more human model, the car would learn gradually, though, rather than receiving a massive collection of personal data points all at once. There would be gaps, some that would be sketched in gradually and some that would remain a little blurry. The personality of the car isn’t the same later in life as at the start, but core elements remain. This aligns with the latest thinking on human personality, too.

As we shift from commands to conversation, our experience of tech will shift as well. Just as we should design to accommodate our own positive growth, we should attempt to design bots that are flexible and adaptable. An empathetic experience is more than a cheerful personality; it’s an evolving identity. And that means our relationships will evolve, too.

Build Relationships, Not Dependency

As the tech in our lives learns how to read facial expressions, creatively express ideas, and answer complex, even philosophical, questions, we’ll begin to feel as though it finally understands us. We are likely to develop a relationship that resembles the way we relate to friends, coworkers, or pets. We’ll develop a meaningful connection.

Developing a good relationship takes time. We rely on our parents’ financial advice because our relationship runs deep. We pay special attention to our best friend’s taste in music because we have gotten to know them over the years. We trust our doctor based on her years of experience treating us. By studying relationships, we can see that trust builds.

Cultivating a relationship doesn’t follow a series of linear steps. A fulfilling relationship is more like progressive transformation for all parties. If just one person (or machine) has more power, if only one grows and learns, if only one adapts, the relationship is bound to fail. Good relationships are about mutual metamorphosis.

Some relationship models are simple. According to anthropology professor Helen Fisher (Why We Love: The Nature and Chemistry of Romantic Love [Henry Holt and Co., 2004]), there are three stages to falling in love: lust, attraction, and attachment. Friendships, too, pass through stages of interest, attunement, and intimacy. Or in the internet translation, meet someone who is just as crazy as you are, introduce all the ways you are crazy to each other, then build more craziness together, or something like that.

Communication professor Mark Knapp’s relational development model is the most detailed, with 10 phases for coming together and coming apart:

1. Initiating: Making an impression

2. Experimenting: Getting to know each other

3. Intensifying: Strengthening the relationship

4. Integrating: Identifying as a social unit, a “we”

5. Bonding: Committing to each other, often publicly

6. Differentiating: Reestablishing separate identities

7. Circumscribing: Setting boundaries

8. Stagnating: Remaining in a holding pattern

9. Avoiding: Starting to detach

10. Terminating: Coming apart

Phases 1 through 5 build the relationship, and 6 through 10 describe the decline, but it’s not quite as rigid as all that. Integrating, bonding, differentiating, and circumscribing are all part of it. A good relationship is not just a crescendo to blissful union. Coming together and coming apart are the ebb and flow of a good relationship.

MODELING THE RELATIONSHIP

The tendency in tech is toward compression. The relationship arc is often: (1) Hello; (2) Tell Me Everything About Yourself; (3) Here’s What You Need. We go from first date to marriage in just a few minutes. We are in a rush to build trust to drive desirable behaviors like a quick purchase or more time spent with the app. What if, instead, a longer relationship arc became the norm? Just as in our human relationships, we’d pass through different stages in our relationship with technology, if given the chance. Let’s synthesize and simplify to see what a successful tech relationship might look like.

1. (Ambient) Awareness

Sometimes, we get to know other people before we even meet, whether this is by seeing them around the neighborhood or getting a glimpse on a dating app or a story shared by a friend. Through observation, hearsay, or indirect interaction, we start by knowing something about a person. The same goes for our relationship with machines. Starting with a bit of background data is fine; too much becomes creepy.

This includes first impressions, during which we display our best selves while simultaneously trying to detect clues about the other person or machine. Person to person, this can be as straightforward as what you wear or what you say. Or it can be as nuanced as picking up on facial expressions or body language. In the tech world, this might manifest in the care we take with a landing page or the first step of onboarding.

3. (Mutual) Attunement

After a few months of shared experience, we might begin to get in sync. In the current model, the sharing is markedly one-sided, though. So much so that we wouldn’t tolerate it in a human relationship. Our devices gather data about our behaviors and emotions, silently judge us, and make predictions that are often way off base or embarrassingly accurate. Sure, they might have such deep knowledge about us that the predictions become better, maybe so much so that it feels caring. It still might not be the best model.

A person who is attuned to another anticipates emotional needs, understands the context, and responds appropriately. Not only do people draw from a range of mirroring mechanisms at a physiological level, we invest enormous effort in trying to resonate. In some relationships, like parenting a toddler, attunement can be more one-sided. In most adult relationships, attunement is gradual, respectful, hard-won, and two-sided.

So, how do we move out of the parent–toddler phase, in which we are the toddler and our machines the parent? Design for attunement. Just as we reveal information about ourselves to the various devices in our lives, our tech needs to reveal to us what it knows and how it works. Ongoing transparency with room for human agency is the only ethical way to become attuned.

Tech mediates human-to-human attunement already. Strava lets us share our routes, the Hug Shirt transmits an embrace using sensors and a mobile app, the Internet Tea Pot conveys an elderly person’s routines to a family member. To develop human–tech attunement, we need to develop that same level of progressive trust.

4. (Healthy) Attachment

Shared experience builds relationships over time. Developing rituals around the relationship can contribute, too. At some point in the not-so-far-off future, some bots and devices will develop convincing empathy by detecting, interpreting, and adapting to human emotions. If the relationship evolves over time, like a healthy human relationship, we can get to positive attachment. Not over-reliance, in which there’s a power imbalance, but instead an emotionally durable coexistence.

5. (Sustainable) Relationship

After we attach, that’s not the end of things. Relationships change in all kinds of ways over time. Think of the rare 50-year marriage or the unusual friendship that started at school and somehow survived to a 20-year college reunion. Then, think of all the relationships that didn’t last. When long-term relationships fail, it’s often because a change becomes untenable to one or both (or more) people. On the other hand, successful long-term relationships adapt to accommodate change. Right now, machines are not nimble enough to adapt to us over time while helping us to grow. But, on a good day, I think we’ll get to this place.

These phases of a relationship are big picture. There are frustrations and triumphs, challenges and reconciliations, small gestures for maintenance and grand efforts at revival. Some friendships are constrained to a certain context or role; others expand beyond that. Friendships can be intense and then wane and then flare up again or disappear entirely. You might be attracted to people (or machines) like you, or you might seek out opposite but complementary traits. These phases are only a starting point for framing human–machine relationships.

For now, most human–machine relationships barely make it to the second phase. The reasons are complicated. One key reason is that we simply aren’t thinking about a long-term relationship, so let’s train our human vision on relationship milestones first.

UNDERSTAND MILESTONES AND MOMENTS

Recently, I led a workshop designing cars that care, or autonomous cars that know a bit about our emotions. Cars make an interesting study with which to understand human–machine relationships. Maybe it’s because I grew up in the Motor City with lots of family working in the auto industry. Maybe it’s just because I like epic road trips despite bouts of motion sickness. But I suspect it’s because cars are liminal spaces, between home and work, day and night, personal and public.

Cars, at least in American culture, mark big milestones, too. When you get your license, you get your first old beater. A cross-country move might mean saying goodbye to a car. A new job with a commute often means a new car as well. Having children? Children leaving home? Those occasions are marked with cars, too. Even as we move away from single ownership, this may hold true.

If we are thinking about relationships, these big milestones define the relationship. Introductions, a cross-country move, a new driver, and saying goodbye are the big moments. It’s small moments, too, like a bad day or a stressful commute or when the car messes up. It’s routines like a daily commute. To give these milestones the attention they richly deserve, I’ve taken advantage of a few techniques, with a twist.

- Relationship timeline

A journey might not be the right framework for a long-term relationship. Plus, it’s much easier to see the shape of a journey in retrospect. Looking back on a period in your life, it’s easier to make sense of it. It ends up as a story with beginning, middle, and end. The implications seem clear in hindsight. The goals seem obvious. Relationships, when viewed in retrospect, shape us, but while we’re living them it’s quite a different story. So, a timeline should be flexible.

In workshops, I introduce the adaptation of Knapp’s relational model as a starting point. You can simply go with what you know from interviews or other research, too. Discuss how you’ll handle key moments in the relationship and how these might evolve over time. Here are a few questions to get started:

What will we know about each other before we meet?

How will each actor get to know the other?

How will each show that it knows the other?

When will you introduce each other to new people?

What will change over time? What should stay the same?

How is trust lost and regained?

How much should interests grow together—or remain separate?

After you’ve covered some basics, next move on to the milestones. These are the common relationship builders or busters associated with life events.

- Eight milestones

The crazy-eight technique, a workshop staple, is easy to adapt for sketching relationship moments. Dividing sheets of paper into eight sections, describe eight relationship milestones. Staying with our car example, maybe it’s the first time you picked up a friend in the car or the first time you had a fender bender.

If you have each member of a group creating their own crazy-eight sketches in a workshop setting, have the group come together to discuss the most common milestones and the most unusual. At first, focus on only a few of what will invariably be many possibilities. The impulse will be to find common threads, and this isn’t necessarily negative. But I’d encourage looking for extremes and edges, too. Don’t stop at just those few; come back later. This relationship is a living thing, which you’ll revisit often as circumstances change and new paradigms arise.

After you agree on a few milestones, the group can begin to develop each in more detail. Consider the following:

Who is a part of this milestone?

What objects are associated with the milestone?

What emotions characterize it?

Are rituals associated with the milestone?

What forms of expression are acceptable?

What range of behaviors is associated with the milestone?

When you have a few milestones identified, you’ll notice that these aren’t just events that happen. They have the potential to change the relationship. That’s why you should look at crucible moments next.

- Crucible moments

A crucible moment is a defining moment that shapes our identity or worldview or relationship. Often prompted by challenging events like a demanding job, a prolonged illness, a military deployment, or the death of a loved one, these moments are more than that. They are moments of transformation. The crucible moment is a clarifying one, a sudden epiphany or a gradual realization that transforms.

Often crucible moments are associated with milestones. For instance, you might think about the time when you got your first ticket. That’s a milestone. But then there’s the time when you received two speeding tickets in one day (just theoretically speaking, of course), which led to a change of heart. You (by which I mean me) decided to consciously adopt a more accepting attitude toward being late. That realization, whether made in a puddle of tears at the side of the Garden State Parkway or lying awake in the middle of the night, is a crucible moment.

Now maybe your product doesn’t actively participate in that crucible moment. Or maybe it suggests a coping strategy or enhances a small triumph or facilitates awareness. If we are truly designing for emotion, the point is that we care about understanding these moments. Crucible moments, because they are in the mind and in the heart, won’t be observable in the data. Empathetic listening is the only way.

- Boundary check

Milestones help us build trust and cement bonds. Crucible moments are those moments when product and person come together in a meaningful way. Boundaries can be just as important. Boundaries are the limits we set for ourselves and, in this case, our tech. They protect our sense of personal identity and help guard against being overwhelmed. A few questions to consider as we plot out this relationship would be the following:

How will you disagree?

What will you call each other?

What kinds of interactions are okay and not okay?

How will you ask for consent?

How will you know if you continue to have consent?

What will you share and not share with each other?

How often will you communicate?

As in every other aspect of our relationship, boundaries are likely to shift over time. Absolutes often fail, as do boundaries that are too vague. Setting boundaries means being specific and direct. Clearly communicating what data is used and how it’s used is a start. Giving people agency to set boundaries and reevaluate from time to time is a given for a healthy relationship, with other humans or with tech.
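To make "specific and direct" concrete, here is a minimal Python sketch of a boundary as something a person sets and the product must periodically reconfirm. Everything in it is an assumption for illustration: the `Boundary` class, its field names, the example topic, and the 90-day review interval are hypothetical, not drawn from any real product.

```python
from dataclasses import dataclass
from datetime import date, timedelta


@dataclass
class Boundary:
    """One explicit, person-controlled limit on what the product may do."""

    topic: str                   # what the boundary covers (hypothetical example below)
    allowed: bool                # the person's most recent answer
    agreed_on: date              # when the person last gave that answer
    review_after_days: int = 90  # assumption: consent is re-asked after this long

    def needs_review(self, today: date) -> bool:
        """True once the last answer is old enough that we should ask again."""
        return today >= self.agreed_on + timedelta(days=self.review_after_days)

    def permits(self, today: date) -> bool:
        """Stale consent counts as no consent until the person confirms it."""
        return self.allowed and not self.needs_review(today)


location = Boundary("location history", allowed=True, agreed_on=date(2024, 1, 1))
print(location.permits(date(2024, 2, 1)))   # recent consent still holds
print(location.permits(date(2024, 6, 1)))   # too old: ask again before using it
```

The design choice worth noting is the default: when consent has gone stale, the sketch falls back to "not permitted" rather than assuming the old answer still stands.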

BUILD CIVIC CONNECTION

You’ll likely notice that many of the relationship models are focused on making only one person comfortable. Personal assistants, virtual butlers, and bot companions focus on just one person as the center of the universe. The danger is that we create narcissistic products and services, where individuals are insulated from the world with their needs always central and their beliefs always validated. Filter bubbles are just the first manifestation we’re seeing.

What if we challenged ourselves to think about the consequences of this approach? Rather than creating one-way relationships in which the machine is a servant anticipating one person’s needs, solving that individual’s problems, and making them feel good, we need to design for less isolation and narcissism. Here are a couple of techniques to try.

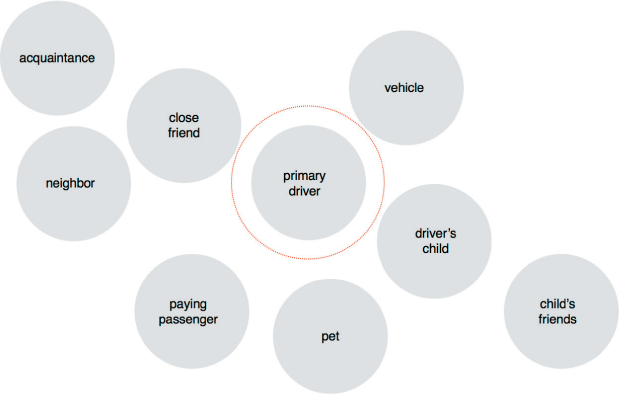

- Shifting centers

One way to do this is by shifting the primary focus. First, put your individual in the center. Add the bot as a node. Then, begin to fill in the other aspects of the network (Figure 4-9). If we are talking about an autonomous car, perhaps we have the driver or primary owner/renter at the center. The car is a node. Next, we consider passengers: adults and kids, close friends and awkward dates, relatives and relative strangers. Following that, we add other people who share the vehicle, if it’s not owned by one person or core group. Finally, add other drivers on the road.

Now move the center. Shift to focus on one of the other nodes in this network. Give that person, or organization or community, the same attention as you would the primary user. Look at how the priorities in both the long term and short term change. Consider how to balance the needs of that primary user with other actors in the network.

Figure 4-9. The circle whose center is everywhere and circumference is nowhere

- The network effect

- Let’s borrow from the principle of emotional contagion for this exercise. Using the same network diagram, list the emotions associated with the one (or two) primary actors for one scenario or one aspect of the experience. Then, start to consider how those emotions might move through the network. Suppose that a car showed care by taking over when the driver became sleepy, and the driver felt relief and gratitude. How do those emotions affect passengers? Do they have an indirect effect on the emotional climate of the roadway? Of a city?

- Device narration

Role playing from another perspective can sometimes end up hurtful, so this activity takes a cue from social and emotional learning (SEL) prompts. Write a stream-of-consciousness narrative from the device’s perspective for a typical day. People talk to their cars. People already lend a voice to their Roombas or treat Alexa as a member of their family. So, this activity comes readily and reveals the quirks, the irritations, and the most beloved aspects of the relationship.

By thinking in terms of networks and giving other actors in the network a voice, we can begin to better understand implications. Rather than creating an emotionally satisfying experience for just one person, we can start to think about the larger social impact.

MAKING IT LEGIBLE

When Google first debuted Duplex making a hair appointment, it didn’t reveal that it was a bot to the person on the other end of the call. With umms and pauses and uptalk, the chatbot caught everyone off guard by seeming just a little too human. It seemed designed to use quirks like “I gotcha” and “mm-hmm” so that the human on the other end would stay on the line to complete the call. Even if the script were changed, as Google indicates it will be, to something like, “Hi, uh, I’m the Google assistant and I’m calling to make an appointment,” it might still make people less likely to hang up than they would be on any other robocall. I wonder what the humans on the other end of the call feel when they find out they’ve spoken with a bot.

Bots that try to pass as human have the real potential to manipulate emotion and damage trust. If technology cheapens relationships, tricks us, makes us lose our trust so that we don’t know what to believe, it will damage our future selves and our human relationships. And it won’t achieve the kind of meaningful role that technology can play in our lives.

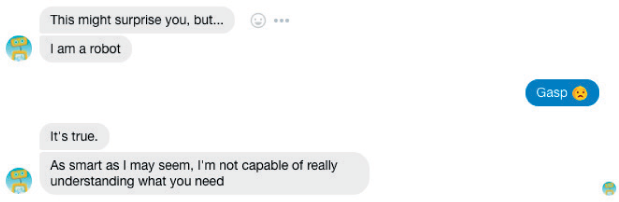

A more honest approach might remind us that these devices are actors with human characteristics. Woebot, a cognitive behavioral therapy bot, can be a good model (Figure 4-10). Even though Woebot does seem to remember when you felt anxious, it counteracts that impression by noting what it can’t do. By way of introduction, it notes that it “helps that I have a computer for a brain and a perfect memory…with a little luck I may spot a pattern that humans can sometimes miss.”

Figure 4-10. The best bot is an honest bot (source: Woebot)

Humanizing technology is not so much about creating a realistic character or anthropomorphizing bots. Humanizing means giving our relationship more human characteristics by cultivating trust so that our interests gradually align, continue with respect, and change together.

Avoiding Automating Humans

Machines are becoming more human-like. Chatbots are going off script, learning with each interaction how to communicate with greater nuance. Robots are using facial recognition to detect signs of emotion in people. At least one robot, Sophia of Hanson Robotics, has been granted citizenship.

Humans are changing, too, in ways we barely register. Tech introduced anonymity into our interactions pre-internet, of course. The fast-food drive-thru makes it easy to forget that we are speaking to a human on the other side of a static-y microphone, so it seems “natural” to bark out orders. Twitchy habits like repetitively hitting refresh are hard to shake. Adjusting your speech to make sense to Alexa or Siri is a given. Let’s face it, in some ways, we are becoming a bit more machine-like.

AUTOMATIC FOR THE PEOPLE

Most people wish for more ways to save time and emotional energy. Whether you are slammed at work, or a sleep-deprived new parent, or simply living life as a busy, busy human, there’s only so much of you to go around. Wouldn’t it be great to have a little help? That’s almost the definition of most of our tech today.

DoNotPay is a legal chatbot that helps people challenge parking tickets and claim compensation for flights. Companies such as Narrative Science can replicate some of the work of human journalists.10 Arterys can analyze magnetic resonance imaging of blood flow through the heart faster than a human radiologist.11 When AI partners with humans, the result can be feelings of competence.

Often, it’s more complicated than that. When it comes to connections, we’ve tried to scale intimacy. Most of us have moved beyond the Dunbar number, or that sweet spot of 150 people that anthropologist Robin Dunbar says is the maximum number of stable relationships we can support. Keeping up with the constant connectivity does not come naturally.

Rather than culling relationships, we keep them. This means that we rely on automation to extend our reach because we don’t have the bandwidth to support conversational depth. Sometimes it’s transparent, or nearly so. I know that “personal” email newsletter isn’t personal. I’ve learned that your LinkedIn invite is canned. No worries. It might not be too much of a stretch to get used to automated customer care conversations, after all.

But that certainly won’t be the end of it; we like easy, and the connections keep coming. The more we streamline, the more we stand to lose in our relationships. MIT professor Sherry Turkle explains, “Getting to know other people, appreciating them, is not necessarily a task enhanced by efficiency.”12 We try anyway, though. Cut-and-paste platitudes, canned email templates, and predictive emojis make our communication go more smoothly. More and more of our interactions are automated, with a hint of authenticity (Figure 4-11). Tech ethicist David Polgar refers to it as “botifying” humans.

Soon enough, we might be tempted to automate more communication. Think about Facebook’s compilation videos presenting friendship slideshows, likely chosen for how much you’ve reacted to one another’s posts. They evoke intimacy while aiming to provoke engagement. It’s a glimpse of how the feelings we show can be conflated with the feelings we experience. Just as we lose the ability to navigate cities without GPS, we might begin to lose the ability to navigate our emotions.

Figure 4-11. Better to coach than to automate (source: Crystal Knows)

How can we avoid driving humans to be more machine-like? Brett Frischmann and Evan Selinger propose an inverse Turing test to determine to what extent humans are becoming indistinguishable from machines (Re-Engineering Humanity, Cambridge University Press, 2018). Perhaps we can test our designs prerelease for how much they might “botify” human identity and connections, those critical elements for our emotional well-being. Or, it might be a checklist like this:

Is human-to-human communication replaced with human-to-machine or machine-to-human or machine-to-machine communication?

Are human-to-human relationships being excessively streamlined?

Are people compelled to speak in an oversimplified manner for extended periods of time?

Is the human communicating with or through a bot without having consented to it?

Is an emotional relationship cultivated purely for the gain of the organization?

If you answered yes to any of these questions, you might just be mechanizing humans. Each answer opens up a world of ethical issues that the industry is confronting. Ethical implications aside, automating intimacy isn’t likely to sustain relationships. Knowing that meaningful relationships are core to emotional well-being, it’s clear that the more we automate ourselves, the less happy we’ll be.
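For teams that want to run this check before release, the checklist could be kept as a small script alongside other review criteria. What follows is a minimal sketch, not an established method: the labels, the paraphrased wording, and the `botify_flags` helper are all assumptions, and each item is phrased so that `True` always marks a warning sign (the consent item is recorded as consent that is missing).

```python
# Hypothetical labels for the five checklist questions.
# In every case, True means "this is a warning sign."
CHECKLIST = {
    "replaces_human_contact": "Human-to-human communication is replaced by communication involving a machine",
    "over_streamlined": "Human-to-human relationships are excessively streamlined",
    "oversimplified_speech": "People must speak in an oversimplified manner for extended periods",
    "missing_consent": "The human did not consent to communicating with or through a bot",
    "org_only_gain": "An emotional relationship is cultivated purely for the organization's gain",
}


def botify_flags(answers):
    """Return the description of every checklist item answered True."""
    unknown = set(answers) - set(CHECKLIST)
    if unknown:
        raise KeyError(f"Unknown checklist items: {sorted(unknown)}")
    # Unanswered items are treated as "no" here; a stricter review might
    # require an explicit answer for each one.
    return [text for key, text in CHECKLIST.items() if answers.get(key, False)]


# Example review of an imagined feature.
review = {"over_streamlined": True, "missing_consent": False, "org_only_gain": True}
flags = botify_flags(review)
for flag in flags:
    print("Warning sign:", flag)
```

A script like this only surfaces the questions. Deciding what to do about a flagged item still takes a conversation among the people building the product.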

New Human–Machine Relationship Goals

IKEA’s Space10 future-living lab asked 12,000 people around the world how they might design AI-infused machines.13 The results are a study in contradictions. Most people wanted machines to be more human-like than robot-like. At the same time, most still preferred obedience to other characteristics. People are paradoxical; it’s one thing that makes us human, I suppose.

It’s easy to debunk the survey. The questions offer simple, binary choices. Do you prefer “autonomous and challenging” or “obedient and assisting”? Um, no. Surveys, in general, don’t offer the opportunity to think through implications or reflect on contradictions. We are simply humans, mechanically answering the questions put in front of us. Even so, the results show that “human” is the ultimate measure of success.

In our rush to humanize, though, we still don’t fully understand what’s human ourselves. We know human cognition is quite distinct from machine cognition. Human life, vulnerable to disease and possessed of innate instincts, is not mechanical. Human emotion is profoundly different from anything a machine can detect. Yet here we are, designing a new class of beings.

When people interact with a bot, they will form a relationship whether we design a personality or not. Rather than serving up a fully formed, light-hearted personality, we need to leave room for empathetic imagination. Rather than one-sided relationships, we’d do well to consider rewarding complexity. Rather than replicating humans, we can complement them.

For now, the list of household bots is short, but it will no doubt grow many times over. Within a decade, virtual and embodied bots will live among us, getting our coffee, teaching our children, driving us about. I hope they will also be engaging us in meaningful conversations, making us aware of our emotions, holding us true to our values. Maybe even writing beautiful poems or creating thought-provoking art.

If we design our bots with only an eye toward a function, we might be more productive. Or, conversely, have more leisure. But if we design them to be emotionally intelligent, we might just make progress in areas that are crucial to our human future—connection, care, and compassion.

1 Russell Belk, “Possessions and the Self,” Journal of Consumer Research, September, 1988.

2 Casey Newton, “Speak, Memory,” The Verge, 2017.

3 Nate Chinen, “Fascinating Algorithm: Dan Tepfer’s Player Piano Is His Composing Partner,” NPR Music, July 24, 2017.

4 Radiolab, “Clever Bots,” Season 10, Episode 1, 2011.

5 Jan-Philipp Stein and Peter Ohler, “Venturing into the Uncanny Valley of the Mind,” Cognition, March 2017.

6 Brian Christian, The Most Human Human: What Talking with Computers Teaches Us About What It Means to Be Alive, Knopf Doubleday, 2011.

7 Nicholas Epley, Mindwise: How We Understand What Others Think, Believe, Feel, Want, Knopf, 2014.

8 J. Walter Thompson Innovation Group and Mindshare Futures, “Speak Easy,” April 5, 2017.

9 Kate Darling, “Who’s Johnny? Anthropomorphic Framing in Human-Robot Interaction, Integration, and Policy,” Robot Ethics 2.0: From Autonomous Cars to Artificial Intelligence. Oxford University Press, 2017.

10 Tom Simonite, “Robot Journalist Finds New Work on Wall Street,” Technology Review, January 9, 2015.

11 Matt McFarland, “What Happens When Automation Comes for Highly Paid Doctors,” CNN Tech, July 14, 2017.

12 Sherry Turkle, Reclaiming Conversation: The Power of Talk in the Digital Age, Penguin, 2015.

13 IKEA Space10, “Do You Speak Human?” May 2017.
