June 2019

Intermediate to advanced

308 pages

7h 21m

English

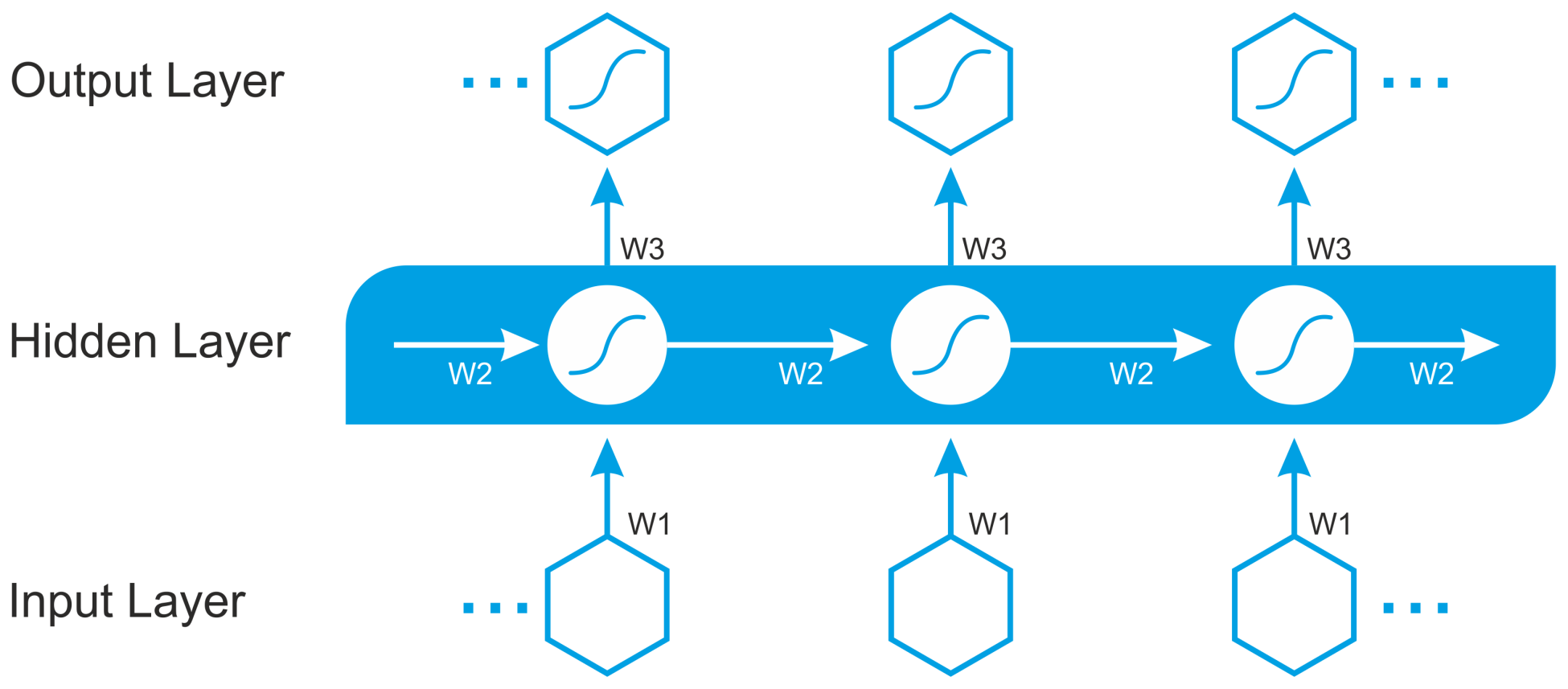

In RNNs, connections between units form a directed cycle. RNNs date back to the 1980s; the long short-term memory (LSTM) variant was introduced by Hochreiter and Schmidhuber in 1997. An RNN takes a standard MLP and adds loops, so it keeps the MLP's powerful nonlinear mapping capabilities while also gaining a form of memory. The following diagram shows a very basic RNN that has an input layer, two recurrent layers, and an output layer:
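Apart from the diagram, the loop itself can be illustrated in a few lines of code. The following is a minimal sketch, not the book's implementation: it assumes a single recurrent layer with one scalar unit and a tanh activation, and the names `rnn_forward`, `w_xh`, `w_hh`, and `b_h` are hypothetical, chosen only for illustration.

```python
import math

def rnn_forward(inputs, w_xh, w_hh, b_h, h0=0.0):
    """Run a one-unit recurrent layer over a sequence; return every hidden state."""
    h = h0
    states = []
    for x in inputs:
        # The loop: the new state depends on the current input
        # and on the previous state (the network's memory).
        h = math.tanh(w_xh * x + w_hh * h + b_h)
        states.append(h)
    return states

# Only the first input is non-zero; later states are still non-zero
# because the recurrent weight carries information forward.
with_loop = rnn_forward([1.0, 0.0, 0.0], w_xh=1.0, w_hh=0.5, b_h=0.0)

# Setting w_hh to 0 removes the loop, leaving a plain feedforward unit
# that forgets the first input immediately.
without_loop = rnn_forward([1.0, 0.0, 0.0], w_xh=1.0, w_hh=0.0, b_h=0.0)
```

With the recurrent weight set to zero the unit behaves like an ordinary MLP neuron, which is why the loop is what gives the network its memory.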

However, this basic RNN suffers from the vanishing and exploding gradient problems, and cannot model long-term dependencies. More advanced architectures were designed to address these limitations. These architectures include LSTM, gated recurrent units (GRUs), bidirectional-LSTM, ...
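To see why gradients vanish or explode in a basic RNN, it helps to multiply out the chain rule across time steps. The sketch below is an illustration under simplifying assumptions (a single scalar unit with no input term; `state_gradient` and its parameters are hypothetical names): it computes the derivative of the final state with respect to the initial state, which is a product of one local derivative per step.

```python
import math

def state_gradient(w_hh, steps, h0=0.5, linear=False):
    """Return d h_T / d h_0 for h_t = f(w_hh * h_{t-1}) via the chain rule.

    f is tanh by default, or the identity when linear=True.
    """
    h = h0
    grad = 1.0
    for _ in range(steps):
        pre = w_hh * h
        h = pre if linear else math.tanh(pre)
        # Local derivative dh_t / dh_{t-1}; tanh'(z) = 1 - tanh(z)^2.
        local = w_hh if linear else w_hh * (1.0 - h * h)
        grad *= local
    return grad

# Every factor is at most 0.5, so the product shrinks toward zero.
vanishing = state_gradient(0.5, steps=50)

# With a linear unit and |w_hh| > 1, the product grows geometrically.
exploding = state_gradient(1.5, steps=50, linear=True)
```

After 50 steps the first gradient is too small to drive learning and the second is too large to keep training stable, so the influence of early inputs is effectively lost or swamps the update. This is the sense in which a basic RNN cannot model long-term dependencies.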
