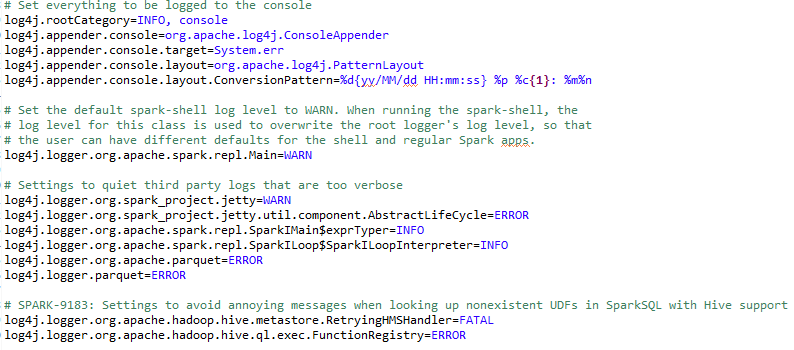

We have already discussed this topic in Chapter 14, Time to Put Some Order - Cluster Your Data with Spark MLlib. However, let's revisit the same material so that it fits the current discussion on debugging Spark applications. As stated earlier, Spark uses log4j for its own logging. If Spark is configured properly, it logs all of its operations to the shell console. A sample snapshot of the log4j configuration file can be seen in the accompanying figure:

Set the default spark-shell log level to WARN. When running the spark-shell, the log level for this class is used ...
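As a rough illustration of the kind of settings such a file holds, the fragment below sketches a minimal `conf/log4j.properties` in the log4j 1.x syntax used by the `log4j.properties.template` that ships with Spark 2.x. It is an assumption that your installation uses log4j 1.x and a console appender named `console`; the levels and the message pattern are examples to adjust, and later Spark releases that moved to log4j 2 use a different file name and syntax.

```properties
# Send everything at INFO and above to the appender named "console"
log4j.rootCategory=INFO, console

# Console appender: write to stderr using a timestamped pattern
log4j.appender.console=org.apache.log4j.ConsoleAppender
log4j.appender.console.target=System.err
log4j.appender.console.layout=org.apache.log4j.PatternLayout
log4j.appender.console.layout.ConversionPattern=%d{yy/MM/dd HH:mm:ss} %p %c{1}: %m%n

# Default level for the spark-shell (REPL) entry point
log4j.logger.org.apache.spark.repl.Main=WARN
```

With a file like this in place, lowering `log4j.rootCategory` to `WARN` or `ERROR` should quiet the console output, while raising a specific logger to `DEBUG` can help when tracing a single component.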