April 2017

Intermediate to advanced

318 pages

7h 40m

English

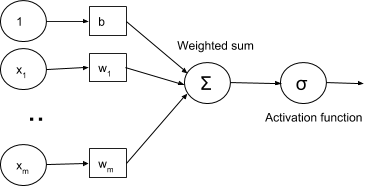

In neural network jargon, sigmoid and ReLU are called activation functions. In the *Testing different optimizers in Keras* section, we will see that the gradual changes typical of sigmoid and ReLU functions are the basic building blocks for developing a learning algorithm that adapts little by little, progressively reducing the mistakes made by our nets. An example of using the activation function σ with the input vector (x1, x2, ..., xm), the weight vector (w1, w2, ..., wm), the bias b, and the summation Σ is given in the following diagram:
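The same computation, an activation function applied to the weighted sum Σ wi·xi + b, can also be sketched in a few lines of plain Python. This is only an illustration: the function names `sigmoid`, `relu`, and `neuron_output` and the sample numbers are assumptions made for this sketch and are not part of Keras.

```python
import math


def sigmoid(z):
    """Logistic sigmoid: squashes any real z into the open interval (0, 1)."""
    # Simplification: math.exp can overflow for very large negative z.
    return 1.0 / (1.0 + math.exp(-z))


def relu(z):
    """Rectified linear unit: 0 for negative z, z otherwise."""
    return max(0.0, z)


def neuron_output(x, w, b, activation):
    """Apply `activation` to the weighted sum of inputs plus the bias."""
    if len(x) != len(w):
        raise ValueError("x and w must have the same length")
    # Summation step: w1*x1 + w2*x2 + ... + wm*xm + b
    z = sum(wi * xi for wi, xi in zip(w, x)) + b
    return activation(z)


# Hypothetical example values chosen so the weighted sum is exactly 0.
x = [1.0, 2.0]      # input vector (x1, x2)
w = [0.5, -0.25]    # weight vector (w1, w2)
b = 0.0             # bias

print(neuron_output(x, w, b, sigmoid))  # 0.5
print(neuron_output(x, w, b, relu))     # 0.0
```

Passing the activation as an argument keeps the summation step separate from the nonlinearity, which mirrors how the diagram separates Σ from σ.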

Keras supports a number of activation functions, and a full list is available ...