October 2018

Beginner

362 pages

9h 32m

English

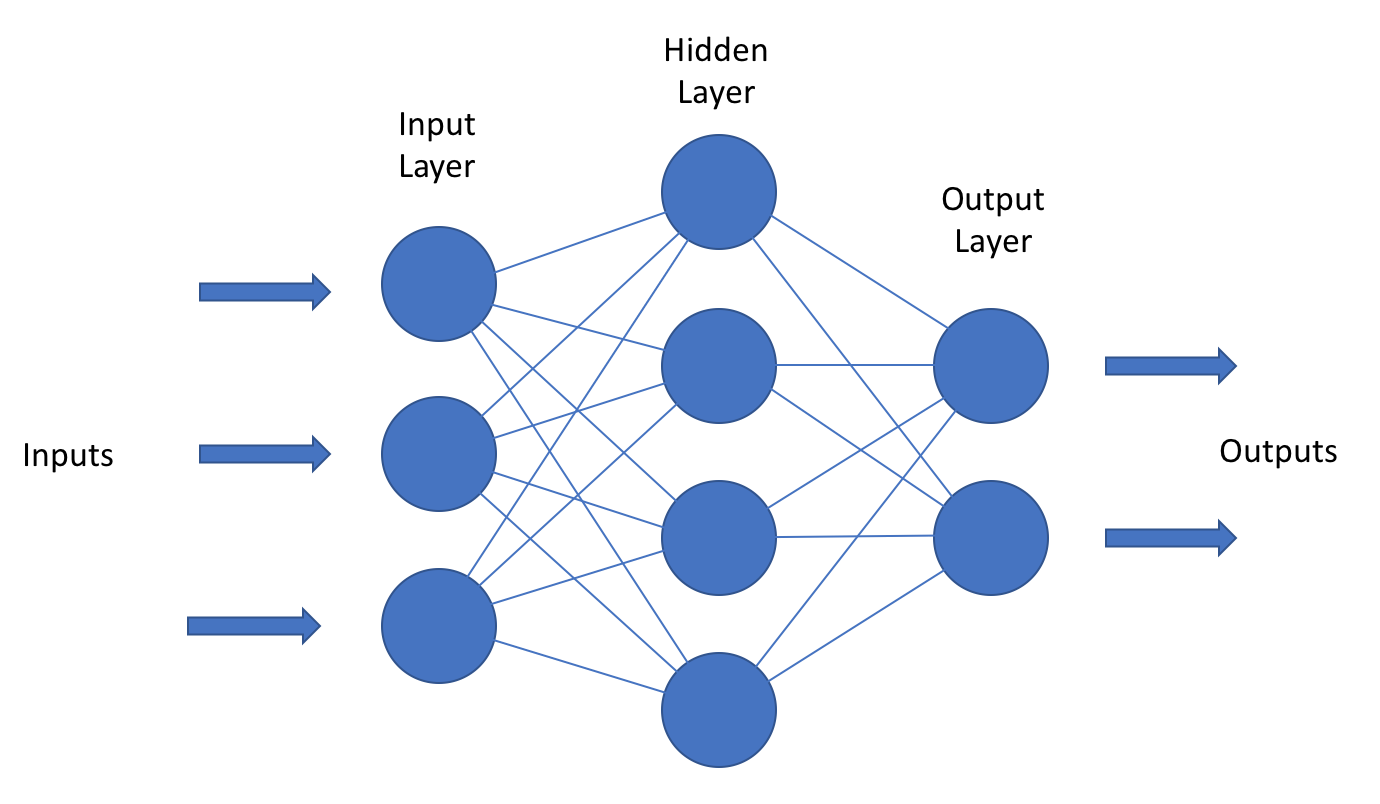

We refer to networks by the number of fully connected layers that they have, not counting the input layer. The network in the following figure, therefore, would be a two-layer neural network. A single-layer network has no hidden layers: its inputs connect directly to the output layer. Sometimes you'll hear logistic regression described as a special case of a single-layer network, one that uses a sigmoid activation function. When we talk about deep neural networks in particular, we are referring to networks that have several hidden layers, as shown in the following diagram:
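The naming convention can be sketched in a few lines of plain Python. This is only an illustrative sketch, not code from the book: the helper names (`dense`, `count_layers`), the layer sizes, and the weight values are all assumptions chosen for the example. It counts layers by excluding the input layer, and shows logistic regression written as a single fully connected layer with a sigmoid activation.

```python
import math


def sigmoid(z):
    """Logistic sigmoid: squashes any real number into the interval (0, 1)."""
    return 1.0 / (1.0 + math.exp(-z))


def dense(inputs, weights, biases, activation):
    """One fully connected layer: activation(w . x + b) for each output unit."""
    return [
        activation(sum(w * x for w, x in zip(row, inputs)) + b)
        for row, b in zip(weights, biases)
    ]


def count_layers(layer_sizes):
    """Layer count under the convention above: the input layer is not counted."""
    return len(layer_sizes) - 1


# Hypothetical sizes: 3 inputs, 4 hidden units, 1 output -> a two-layer network.
two_layer_sizes = [3, 4, 1]

# Logistic regression: 3 inputs feeding 1 sigmoid output -> a single-layer network.
logistic_sizes = [3, 1]

# Illustrative (made-up) inputs and parameters for the logistic regression case.
x = [0.5, -1.0, 2.0]
w = [[0.1, 0.2, 0.3]]  # one row of weights per output unit
b = [0.0]

p = dense(x, w, b, sigmoid)[0]  # predicted probability, strictly between 0 and 1

print(count_layers(two_layer_sizes), count_layers(logistic_sizes), p)
```

Stacking further calls to `dense` between the input and the output is what adds hidden layers, and with several of them the network would be called deep.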

Networks are typically sized in terms of the number of parameters that they have ...
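As a rough illustration of sizing by parameter count: in a fully connected layer, every input connects to every output unit, and each output unit has one bias. The sketch below assumes only fully connected layers; the function name `count_parameters` and the layer sizes are hypothetical, chosen for the example.

```python
def count_parameters(layer_sizes):
    """Total weights and biases in a stack of fully connected layers.

    Each layer mapping n_in inputs to n_out outputs contributes
    n_in * n_out weights plus n_out biases.
    """
    return sum(
        n_in * n_out + n_out
        for n_in, n_out in zip(layer_sizes, layer_sizes[1:])
    )


# Hypothetical two-layer network: 3 inputs, 4 hidden units, 1 output.
# (3 * 4 + 4) + (4 * 1 + 1) = 16 + 5 = 21 parameters.
two_layer_total = count_parameters([3, 4, 1])

# Hypothetical single-layer network (logistic regression on 3 inputs).
# 3 * 1 + 1 = 4 parameters.
single_layer_total = count_parameters([3, 1])

print(two_layer_total, single_layer_total)
```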
