June 2020

Intermediate to advanced

382 pages

11h 39m

English

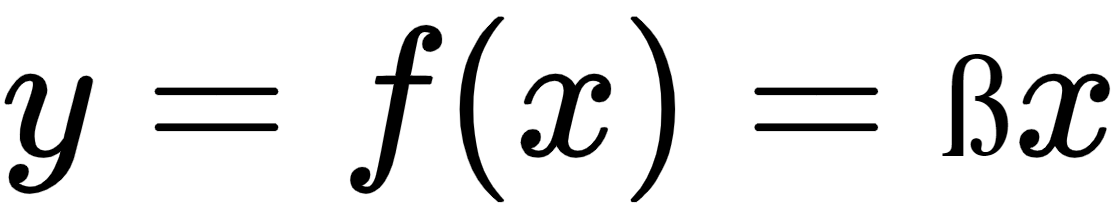

In ReLU, any negative value of x produces a value of zero for y. Information about negative inputs is therefore lost, which can make training take longer, especially at the start of training. The Leaky ReLU activation function addresses this issue. The following applies for Leaky ReLU:

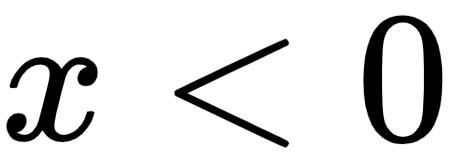

; for

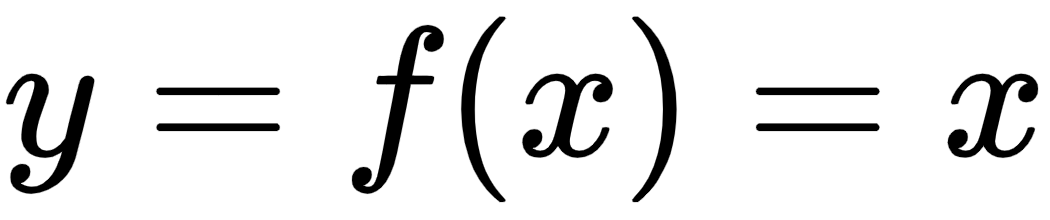

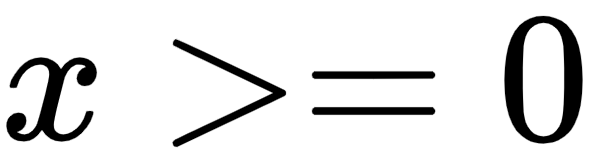

; for

for

for
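As a minimal sketch of how Leaky ReLU differs from ReLU, the two functions can be written side by side in plain Python. The function names, the parameter name `beta`, and its default of 0.01 are assumptions chosen for illustration (0.01 is a commonly used slope, not a value taken from the text).

```python
def relu(x: float) -> float:
    """ReLU: pass positive inputs through, map everything else to zero."""
    return x if x > 0 else 0.0


def leaky_relu(x: float, beta: float = 0.01) -> float:
    """Leaky ReLU: pass positive inputs through, scale the rest by beta.

    beta is an illustrative slope for non-positive inputs (assumed default 0.01).
    """
    return x if x > 0 else beta * x


if __name__ == "__main__":
    # Compare both activations on a negative, a zero, and a positive input.
    for value in (-2.0, 0.0, 3.0):
        print(value, relu(value), leaky_relu(value))
```

For a negative input, `relu` returns zero, while `leaky_relu` returns a small negative number, so the sign and relative size of the input are still visible to the next layer.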

This is shown in the following diagram: ...
